How Accurate Should a Precision Instrument Be?

In modern industry, the value of a precision instrument lies not only in its accuracy but also in how well it supports industrial control, process optimization, and energy efficiency. From sustainable monitoring to emission reduction, the right level of precision helps companies balance performance, compliance, and cost. This article explores how accuracy standards influence green technology, environmental protection, and the selection of tools such as an efficient gas analyzer.

What Does “Accurate Enough” Mean in Precision Instrument Selection?

A precision instrument does not need to be the most accurate device available to be the right choice. In industrial manufacturing, energy systems, laboratory analysis, environmental monitoring, and automation control, the practical question is whether the instrument delivers measurement quality that matches the process risk, control target, and compliance requirement. For many buyers, this is where confusion begins: they compare headline accuracy values without checking operating range, repeatability, drift, response time, and calibration conditions.

Accuracy should always be tied to the application. A temperature transmitter used for general utility monitoring may perform well with an accuracy class around ±0.5% of span, while custody-transfer flow measurement, laboratory metrology, or emissions reporting may require much tighter limits under controlled conditions. In other words, the acceptable error band depends on whether the reading drives a trend report, a control loop, a safety shutdown, a quality release decision, or a regulatory record.
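
A percent-of-span figure is easier to judge once it is converted into measurement units. The short Python sketch below does that conversion and then expresses the same band as a percentage of the actual reading. The 0 to 200 °C range and the function names are assumptions chosen for illustration, not values from any particular datasheet.

```python
def span_error(lower: float, upper: float, pct_of_span: float) -> float:
    """Absolute error band, in measurement units, for a percent-of-span spec."""
    return (upper - lower) * pct_of_span / 100.0


def pct_of_reading(error_band: float, reading: float) -> float:
    """Express an absolute error band as a percentage of the current reading."""
    if reading == 0:
        raise ValueError("reading must be non-zero")
    return abs(error_band / reading) * 100.0


# Hypothetical transmitter ranged 0 to 200 °C with ±0.5% of span accuracy.
band = span_error(0.0, 200.0, 0.5)
print(f"Error band: ±{band:.2f} °C")
print(f"At 150 °C: ±{pct_of_reading(band, 150.0):.2f}% of reading")
print(f"At 20 °C: ±{pct_of_reading(band, 20.0):.2f}% of reading")
```

The same fixed band is a much larger share of the reading near the bottom of the range, which is one reason operating range belongs in the comparison alongside the headline accuracy value.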

For operators and quality managers, the hidden issue is not just the stated specification on day one. It is whether the precision instrument remains stable after 3 months, 6 months, or 12 months of continuous or cyclic use. Environmental vibration, ambient temperature swings, dust, humidity, sample contamination, and electrical noise can all reduce field accuracy compared with laboratory performance. That is why technical evaluation should consider both reference accuracy and installed accuracy.
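
One common way to estimate installed accuracy is to combine the reference accuracy with the expected field effects by root-sum-square. The sketch below assumes the contributions are independent and expressed in the same unit. The numbers are hypothetical, and a real error budget should use the terms the manufacturer actually specifies.

```python
import math


def installed_error(reference: float, *field_effects: float) -> float:
    """Combine independent error contributions by root-sum-square.

    All inputs must share one unit, for example % of span.
    """
    return math.sqrt(sum(e * e for e in (reference, *field_effects)))


# Hypothetical contributions, all in % of span.
reference = 0.10        # datasheet accuracy at reference conditions
temperature = 0.15      # effect of ambient temperature swings
drift_12_months = 0.20  # expected drift between calibrations

total = installed_error(reference, temperature, drift_12_months)
print(f"Reference accuracy: ±{reference:.2f}% of span")
print(f"Installed accuracy estimate: ±{total:.2f}% of span")
```

Root-sum-square understates the total when error sources are correlated, so a simple arithmetic sum can serve as a conservative upper bound.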

Three practical layers of accuracy judgment

A useful evaluation method is to separate precision instrument performance into 3 layers: intrinsic accuracy, process relevance, and lifecycle stability. Intrinsic accuracy is the specification under standard test conditions. Process relevance asks whether that specification supports the process decision being made. Lifecycle stability looks at recalibration interval, drift trend, maintenance burden, and the risk of data deviation during actual service.

- Intrinsic accuracy: stated error, linearity, repeatability, hysteresis, and resolution under defined laboratory conditions.

- Process relevance: whether the instrument can control a process window such as ±1°C, ±2% flow, or a gas concentration threshold used for environmental protection.

- Lifecycle stability: drift over 6–12 months, calibration frequency, spare parts availability, and the effect of installation quality on long-term performance.
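
The process relevance layer can be turned into a quick numerical check by comparing the process window with the instrument error. The Python sketch below uses a minimum ratio of 4:1, a commonly cited rule of thumb that is treated here as an assumption. The function names and example values are illustrative only.

```python
def accuracy_ratio(process_tolerance: float, instrument_error: float) -> float:
    """Ratio of the allowed process window to the instrument error (same units)."""
    if instrument_error <= 0:
        raise ValueError("instrument_error must be positive")
    return process_tolerance / instrument_error


def is_adequate(process_tolerance: float, instrument_error: float,
                min_ratio: float = 4.0) -> bool:
    """True if the instrument error is small enough relative to the window.

    min_ratio=4.0 is an assumed rule of thumb, not a universal requirement.
    """
    return accuracy_ratio(process_tolerance, instrument_error) >= min_ratio


# Hypothetical case: the process must be held within ±1 °C.
for error in (0.2, 0.5):
    verdict = "adequate" if is_adequate(1.0, error) else "too coarse"
    print(f"Instrument error ±{error} °C: {verdict}")
```

Using installed error rather than reference error as the input keeps this check consistent with the lifecycle stability layer.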

This layered view helps procurement teams avoid overspending on excessive precision while preventing under-specification in safety-critical or compliance-sensitive applications. It also supports finance reviewers, because the discussion moves from price alone to total cost of ownership across a typical 3–5 year service period.
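
A total cost of ownership comparison can be sketched in a few lines. The model below is deliberately simple: purchase price plus recurring calibration and maintenance over the service period. Every figure is hypothetical, and the class and field names are assumptions made for this example. Downtime and off-spec costs are left out and would need to be added for a real evaluation.

```python
from dataclasses import dataclass


@dataclass
class InstrumentCost:
    purchase: float               # one-time purchase price
    calibration_per_event: float  # cost of a single calibration
    calibrations_per_year: int    # planned calibrations per year
    annual_maintenance: float     # spare parts and routine service per year

    def total_cost(self, years: int) -> float:
        """Purchase price plus recurring costs over the service period."""
        recurring = (self.calibration_per_event * self.calibrations_per_year
                     + self.annual_maintenance)
        return self.purchase + recurring * years


# Hypothetical figures in an arbitrary currency.
high_precision = InstrumentCost(8000, 400, 4, 300)
industrial_grade = InstrumentCost(5000, 250, 1, 200)

for name, unit in (("High precision", high_precision),
                   ("Industrial grade", industrial_grade)):
    print(f"{name}: {unit.total_cost(5):,.0f} over 5 years")
```

With these assumed inputs the recurring costs, not the purchase price, account for most of the difference between the two options.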

How Much Accuracy Is Needed in Different Instrumentation Scenarios?

Different sectors within the instrumentation industry apply different accuracy targets because the consequences of error are different. A laboratory balance, an online gas analyzer, a pressure gauge on a utility line, and a level transmitter in a chemical tank do not carry the same operational risk. Project managers and technical buyers should begin with the decision impact of a wrong reading rather than with catalog comparison alone.

In industrial automation, an instrument may be accurate enough if it keeps the control loop stable and supports process optimization. In environmental monitoring, the same concept extends to reportability, trend confidence, and emission reduction planning. In medical testing or laboratory analysis, tighter tolerance is often required because small deviations can affect analytical interpretation. In construction engineering, ruggedness and repeatable readings in field conditions may matter more than ultra-fine laboratory precision.

The table below summarizes how accuracy expectations often change by scenario. These are not universal limits, but practical guidance for cross-functional review among users, procurement teams, quality staff, and decision-makers.

The key insight is simple: “more accurate” is not always “more suitable.” For example, a highly sensitive instrument may require stricter environmental control, a longer warm-up time, or more frequent calibration, such as every 3–6 months. If the process only needs stable operational visibility, a robust industrial-grade model with a wider tolerance may deliver better value over time.

Where under-specification creates risk

Choosing insufficient accuracy can create direct losses in at least 4 areas: poor process optimization, unstable quality control, inaccurate environmental reporting, and delayed fault response. In gas analysis, for instance, if concentration measurement drifts beyond the acceptable threshold, operators may adjust combustion or treatment systems incorrectly, increasing energy use and affecting emission reduction targets.

For procurement teams, that means specification review should include the consequence of measurement error. A lower-cost device can become more expensive if it causes frequent recalibration, manual verification, off-spec output, or repeat inspection. This is especially true in plants running 24/7, where even a small measurement deviation can accumulate into significant operational impact.

Which Technical Parameters Matter Beyond the Accuracy Number?

When evaluating a precision instrument, many teams focus on one value, such as ±0.1% or ±0.5%. That is understandable, but incomplete. Accuracy on its own does not explain whether the device can handle startup fluctuation, low-load operation, seasonal ambient change, or contamination. In the instrumentation industry, robust selection depends on a broader parameter set that supports real field performance.

A practical review often includes at least 6 technical points: measuring range, repeatability, response time, drift, environmental compatibility, and calibration method. For industrial online monitoring, sample system design and communication integration may also matter. For an efficient gas analyzer, the ability to maintain reliable readings across expected gas composition changes, pressure variation, and continuous duty cycles can be as important as nominal precision.
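
The six review points can be kept as a simple completeness check so that no vendor datasheet is compared on accuracy alone. The Python sketch below is a minimal illustration. The parameter keys and the example datasheet are hypothetical.

```python
# The six review points named above, as hypothetical datasheet keys.
REQUIRED_PARAMETERS = (
    "measuring_range",
    "repeatability",
    "response_time",
    "drift",
    "environmental_compatibility",
    "calibration_method",
)


def missing_parameters(datasheet: dict) -> list[str]:
    """Return the review points a datasheet does not state."""
    return [p for p in REQUIRED_PARAMETERS if datasheet.get(p) in (None, "")]


# Hypothetical vendor datasheet with two gaps.
vendor_a = {
    "measuring_range": "0-25 % O2",
    "repeatability": "±0.1 % of span",
    "response_time": "T90 < 15 s",
    "calibration_method": "zero/span gas",
}

print("Ask vendor A for:", missing_parameters(vendor_a))
```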

Parameter checklist for technical evaluation

The table below can be used during specification review, vendor comparison, or internal approval. It helps technical evaluators and project owners translate accuracy into a measurable procurement framework.

This comparison shows why the specification sheet should never be read as a single-line answer. A device with moderate stated accuracy but strong repeatability and lower drift may outperform a more precise alternative in demanding field conditions. That can be especially important in automated systems where sensor stability directly affects control logic and process optimization.

A field-oriented review process in 4 steps

- Define the process decision linked to the measurement, such as control adjustment, pass/fail release, alarm threshold, or environmental record.

- Map the real operating conditions, including temperature range, vibration, utility stability, contamination risk, and continuous operating hours.

- Check calibration method, maintenance interval, and whether verification can be done during planned shutdowns every quarter or every 6–12 months.

- Estimate the business impact of deviation, including rework cost, downtime exposure, compliance risk, and energy loss.

This 4-step method is practical for engineering teams, purchasing departments, and business evaluators because it converts technical detail into a decision model that can be approved across operations, finance, and management.

How Should Buyers Balance Precision, Cost, and Compliance?

B2B purchasing decisions rarely depend on performance alone. A precision instrument must also fit budget limits, project schedule, installation complexity, operator capability, and future service needs. This is why the best procurement decision often comes from comparing total value rather than unit price. Financial approvers want cost transparency. Operators want reliability. Quality managers want traceability. Project leaders want predictable delivery within 2–6 weeks or a defined project window for integration.

Compliance adds another layer. In some applications, international or industry-recognized practices around calibration traceability, electrical safety, electromagnetic compatibility (EMC), or environmental monitoring methodology influence the choice. Even when the regulation does not mandate the highest available precision, it usually requires a defensible and repeatable measurement approach. That means the specification, calibration record, installation method, and verification process should align.

Common trade-offs in procurement

The following list highlights 5 procurement trade-offs that appear repeatedly across industrial manufacturing, laboratory instrumentation, and online monitoring projects:

- Higher accuracy vs. shorter calibration interval: tighter precision may increase maintenance and service planning requirements.

- Advanced analyzer vs. easier operation: a more capable instrument may require more training for operators and maintenance staff.

- Lower purchase price vs. higher lifecycle cost: savings at order stage may be offset by drift, downtime, or spare part demand.

- Broader range vs. best-point performance: a wide operating range can reduce precision at the most critical measurement point.

- Fast delivery vs. customized fit: standard models may ship in 7–15 days, while customized sample conditioning, signal output, or mounting solutions may require 2–4 weeks longer.

For many organizations, the best approach is to classify requirements into 3 levels: must-have, should-have, and optional. Must-have items usually include measurement range, minimum acceptable accuracy, compatibility with the process medium, installation constraints, and required outputs. Should-have items may include lower drift, easier calibration, or digital communication. Optional items often involve enhanced data logging or additional diagnostics.
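
The three-level classification can be applied as a screening step: any missing must-have item disqualifies a candidate, and the remaining levels only rank the candidates that qualify. The sketch below is one possible way to do this. The feature labels and the 2:1 weighting of should-have over optional items are assumptions.

```python
def screen(features, must_have, should_have, optional):
    """Check must-have items first, then score the lower levels.

    Scoring weights (2 per should-have, 1 per optional) are assumed.
    """
    features = set(features)
    missing = sorted(set(must_have) - features)
    score = (2 * len(set(should_have) & features)
             + len(set(optional) & features))
    return {"qualifies": not missing, "missing_must_have": missing, "score": score}


# Hypothetical requirement levels and candidates.
must = {"range_ok", "min_accuracy", "medium_compatible", "required_outputs"}
should = {"low_drift", "digital_comms"}
nice = {"data_logging", "diagnostics"}

candidate_a = must | {"low_drift", "data_logging"}
candidate_b = (must - {"min_accuracy"}) | should | {"diagnostics"}

print("A:", screen(candidate_a, must, should, nice))
print("B:", screen(candidate_b, must, should, nice))
```

Candidate B scores higher on extras but still fails, which reflects the point of the classification: optional features should not compensate for a missing must-have.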

Compliance and documentation checkpoints

Before approval, teams should confirm at least 4 documentation points: specification conditions, calibration or traceability arrangement, installation requirements, and service plan. If the instrument will support environmental protection, emission reduction, or audited reporting, it is also wise to review sample handling, zero/span verification practice, and operator recordkeeping. This reduces disputes later between procurement, site teams, and quality departments.

What Mistakes Do Companies Make When Choosing Precision Instruments?

One common mistake is assuming the smallest error specification automatically creates the best result. In reality, excessive precision can add cost without improving production, safety, or environmental outcomes. If the process variability is already larger than the instrument uncertainty, paying for ultra-high precision may not improve control performance. This happens often when teams copy requirements from another project without checking the current application.
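
The effect of process variability can be illustrated with a small calculation. Assuming process variation and instrument uncertainty are independent and can be described by standard deviations, the observed spread is the root-sum-square of the two. The values below are hypothetical.

```python
import math


def observed_sigma(process_sigma: float, instrument_sigma: float) -> float:
    """Spread seen in the readings, assuming independent sources."""
    return math.sqrt(process_sigma ** 2 + instrument_sigma ** 2)


def instrument_variance_share(process_sigma: float, instrument_sigma: float) -> float:
    """Fraction of the observed variance contributed by the instrument."""
    total = process_sigma ** 2 + instrument_sigma ** 2
    if total == 0:
        raise ValueError("at least one sigma must be non-zero")
    return instrument_sigma ** 2 / total


# Hypothetical process with a standard deviation of 2.0 units.
for instrument in (0.5, 0.1):
    seen = observed_sigma(2.0, instrument)
    share = instrument_variance_share(2.0, instrument)
    print(f"Instrument sigma {instrument}: observed {seen:.3f}, "
          f"instrument share {share:.1%}")
```

In this example, a five-fold improvement in the instrument changes the observed spread by only a few percent, because the process itself dominates.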

A second mistake is ignoring installation and operating conditions. A precision instrument mounted in a high-vibration area, near electrical interference, or in a poorly conditioned sampling line may never achieve its stated laboratory performance. For gas analysis and industrial online monitoring, sample temperature, moisture, particulate load, and transport delay can influence the final reading as much as the sensor itself.

A third mistake is treating calibration as an afterthought. Even a well-selected instrument can become unreliable if verification intervals are not defined. Depending on duty cycle and process criticality, teams may schedule checks monthly, quarterly, or every 6–12 months. The exact interval should be based on risk, drift tendency, and internal quality procedures rather than on a fixed habit.
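
A risk-based interval can start from a rough estimate of how long the instrument takes to drift through its allowance. The sketch below assumes linear drift and applies a safety factor. Both are simplifying assumptions, and the figures are hypothetical. Observed drift from past calibration records should replace them where available.

```python
def max_interval_months(drift_allowance: float, drift_per_month: float,
                        safety_factor: float = 0.8) -> float:
    """Estimate the longest verification interval, assuming linear drift.

    drift_allowance and drift_per_month must share a unit (e.g. % of span).
    safety_factor shortens the interval to leave margin; 0.8 is an assumption.
    """
    if drift_per_month <= 0:
        raise ValueError("drift_per_month must be positive")
    if not 0 < safety_factor <= 1:
        raise ValueError("safety_factor must be in (0, 1]")
    return drift_allowance / drift_per_month * safety_factor


# Hypothetical: 0.5% of span allowed for drift, 0.05% of span drift per month.
interval = max_interval_months(0.5, 0.05)
print(f"Verify at least every {interval:.1f} months")
```

A more critical measurement would justify a smaller drift allowance or safety factor, which shortens the interval accordingly.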

FAQ: Real questions from buyers and users

How accurate should a precision instrument be for industrial control?

It should be accurate enough to support the control action and process tolerance. If the process window is narrow, the instrument needs sufficient repeatability and low drift to avoid unstable control. If the application is general monitoring, stable trending may matter more than very tight absolute accuracy. The right answer depends on whether the reading drives adjustment, alarm, quality release, or reporting.

Is higher accuracy always better for an efficient gas analyzer?

Not always. An efficient gas analyzer should match gas range, sample condition, reporting purpose, and maintenance capacity. A higher-precision analyzer may be justified for environmental monitoring or process optimization, but only if the sampling system, calibration gases, and operating practice can support that level. Otherwise, the theoretical accuracy may not translate into field value.

What should procurement teams check before issuing an order?

At minimum, confirm 5 points: application objective, operating range, required accuracy under actual conditions, calibration and service plan, and delivery scope including cables, fittings, software, or sample handling accessories. Many procurement delays happen because teams approve the main device but miss installation or verification details.

How long is a typical delivery and implementation cycle?

Standard industrial instruments may be available in 7–15 days, while analyzers, integrated panels, or customized monitoring systems often require 2–4 weeks or longer depending on configuration, testing, and documentation. Implementation also includes commissioning, operator training, and acceptance checks, which should be planned as separate project steps.

Why Work With a Supplier That Understands Accuracy, Application, and Lifecycle Value?

Choosing the right precision instrument is not a matter of comparing catalog numbers alone. It requires application understanding across industrial manufacturing, energy and power, environmental monitoring, laboratory analysis, automation control, and project execution. A capable supplier helps you define not just the instrument specification, but the measurement objective, installation boundaries, calibration path, and operating economics over the full service life.

This is especially valuable for cross-functional teams. Information researchers need clear technical logic. Users and operators need practical reliability. Technical evaluators need parameter transparency. Procurement and business reviewers need cost-risk comparison. Decision-makers and financial approvers need confidence that the selected instrument supports compliance, energy efficiency, and process optimization without unnecessary overspending.

What you can discuss with us

If you are reviewing precision instrument requirements, we can support a structured discussion around the points that matter most in B2B projects:

- Parameter confirmation: measuring range, target accuracy, repeatability, response time, drift expectation, and operating environment.

- Product selection: comparison between standard models, industrial online monitoring options, laboratory-oriented solutions, and efficient gas analyzer configurations.

- Delivery planning: standard lead time, customized configuration window, commissioning steps, and sample or documentation support.

- Compliance review: calibration expectations, traceability approach, installation documentation, and common industry certification considerations.

- Quotation alignment: scope clarification, accessory list, service content, and lifecycle cost discussion for budgeting and approval.

If your team is comparing options for industrial control, sustainable monitoring, environmental protection, or emission reduction projects, contact us with your application conditions and measurement target. A clear technical review at the beginning can save weeks of rework later and help you choose a precision instrument that is accurate enough, operationally practical, and commercially sound.

Recommended for You

Search Categories

Search Categories

Latest Article

- IEC Approves China-Led Standard for Smart Sensor Semantic InteroperabilityIEC TR 63372 smart sensor semantic interoperability standard—led by China—cuts export certification time by 30%. Discover how it reshapes global IoT integration.Posted by:

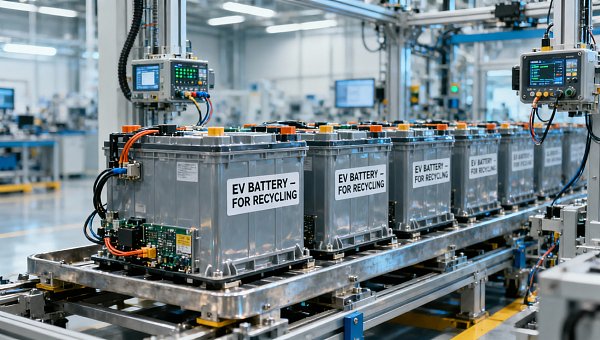

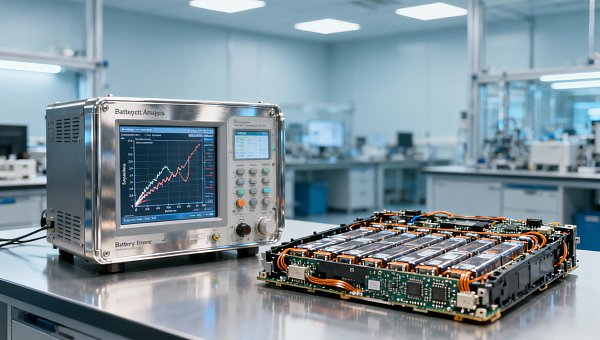

- MIIT Launches Joint Enforcement on EV Battery RecyclingMIIT's EV battery recycling enforcement targets BMS data leaks, illegal dismantling & electrolyte disposal—key for exporters, testers & procurement teams needing GDPR/CCPA compliance.Posted by:

- SAMR Launches Unified Market Action on Testing & CertificationSAMR Launches Unified Market Action on Testing & Certification—tackling fragmentation, redundant fees & cross-border recognition barriers for exporters and manufacturers.Posted by:

Please give us a message