How to Improve Process Monitoring System Results

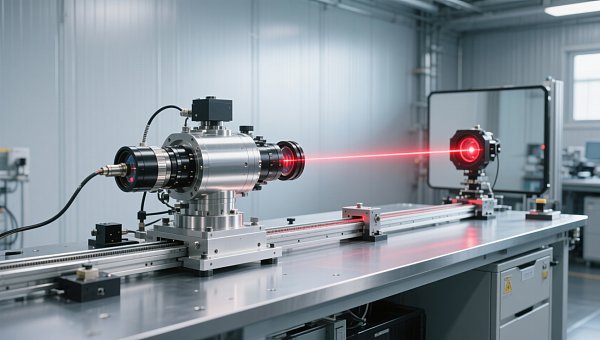

Improving process monitoring system results starts with choosing the right industrial measurement system, integrating reliable industrial control equipment, and aligning data with real production goals. Whether the application is gas quality measurement, oxygen measurement, or emission monitoring, the same approach makes measurement more efficient, supports emission compliance, and gives control decisions a sounder basis.

For manufacturers, utilities, laboratories, engineering contractors, and process-intensive facilities, better monitoring results do not come from adding more instruments alone. They come from selecting fit-for-purpose sensors, building a stable signal chain, setting practical alarm thresholds, and linking data to operating, quality, safety, and financial decisions. This matters to operators who need reliable readings every shift, to quality managers who must reduce deviation, and to decision-makers who need measurable return on investment.

In the instrumentation industry, process monitoring systems cover pressure, temperature, flow, and level measurement, composition analysis, calibration, industrial online monitoring, and automatic control. When these systems are well designed, plants can shorten response time from hours to minutes, lower rework risk, improve compliance records, and make maintenance more predictable. The sections below explain how to improve results across selection, implementation, operation, and continuous optimization.

Define What “Better Results” Means in Process Monitoring

Many process monitoring projects underperform because teams start with hardware lists instead of performance targets. In practice, better results usually mean 4 things: higher measurement accuracy, faster decision cycles, lower unplanned downtime, and more useful compliance records. A plant may target a temperature deviation within ±0.5°C, gas concentration repeatability within ±1% of reading, or a response time below 5 seconds for critical alarms.

Different stakeholders define success differently. Operators care about stable screens and fewer false alarms. Quality and safety managers care about traceability, calibration intervals, and threshold reliability. Financial approvers often focus on the payback period, which for monitoring upgrades is commonly assessed over 12–24 months, depending on process risk, scrap rate, and maintenance costs.
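As a rough illustration of the payback assessment mentioned above, the sketch below computes a simple, undiscounted payback period. The function name `payback_months` and every cost figure are hypothetical values chosen for illustration, not data from a real project.

```python
def payback_months(upfront_cost: float, monthly_savings: float) -> float:
    """Simple undiscounted payback period in months."""
    if monthly_savings <= 0:
        raise ValueError("monthly_savings must be positive")
    return upfront_cost / monthly_savings


# Hypothetical figures for illustration only.
upgrade_cost = 90_000.0  # instruments, integration, commissioning
monthly_savings = {
    "reduced_scrap": 3_000.0,
    "avoided_downtime": 1_500.0,
    "lower_maintenance": 500.0,
}

months = payback_months(upgrade_cost, sum(monthly_savings.values()))
print(f"Estimated payback: {months:.1f} months")
```

A fuller financial review would normally also consider discounting and the cost of process risk, which this sketch leaves out.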

To avoid mismatch, companies should align monitoring objectives with production realities. For example, an emission measurement system in a combustion process needs different priorities than a laboratory analysis station or a water treatment flow monitoring loop. One may prioritize regulatory reporting every 15 minutes, while another needs sub-minute trend visibility for control decisions.

A practical starting point is to separate key performance indicators into process, quality, compliance, and asset-health layers. This prevents a common mistake: using one set of tags for every purpose. A process measurement system should not only collect data; it should also support action thresholds, maintenance planning, and production optimization.

Core metrics to establish before system changes

- Measurement accuracy target, such as ±0.25%, ±0.5%, or ±1% depending on the variable and the process criticality.

- Data availability target, often 98%–99.5% for standard industrial monitoring and higher for critical compliance loops.

- Alarm response window, for example 3–10 seconds for fast processes and 30–120 seconds for slower thermal systems.

- Calibration frequency, such as monthly, quarterly, or every 6 months based on drift risk and operating conditions.

- Reporting interval, such as real-time, 1-minute logs, or 15-minute compliance summaries.
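
One way to make these metrics concrete is to record them per measurement point and check them against logged data. The sketch below is a minimal example under stated assumptions: the `MonitoringTarget` structure, the tag name `STACK_O2`, and all numbers are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class MonitoringTarget:
    tag: str
    accuracy_pct: float      # allowed error, +/- percent of reading
    availability_pct: float  # minimum share of valid samples
    alarm_response_s: float  # maximum alarm response window
    calibration_days: int    # routine calibration interval
    log_interval_s: int      # reporting interval


def availability_pct(valid_samples: int, expected_samples: int) -> float:
    """Share of expected samples that were actually valid, in percent."""
    if expected_samples <= 0:
        raise ValueError("expected_samples must be positive")
    return 100.0 * valid_samples / expected_samples


def meets_availability(target: MonitoringTarget, valid: int, expected: int) -> bool:
    return availability_pct(valid, expected) >= target.availability_pct


# Hypothetical target for a compliance-related oxygen loop with 1-minute logs.
stack_o2 = MonitoringTarget(
    tag="STACK_O2",
    accuracy_pct=1.0,
    availability_pct=99.5,
    alarm_response_s=5.0,
    calibration_days=90,
    log_interval_s=60,
)

expected = 24 * 60  # one sample per minute over one day
valid = 1430        # samples that passed validation (assumed)
print(f"{stack_o2.tag}: {availability_pct(valid, expected):.2f}% available, "
      f"target met: {meets_availability(stack_o2, valid, expected)}")
```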

Typical result indicators by application

The table below helps teams define realistic monitoring outcomes across common industrial scenarios. It is useful during early-stage evaluation, budget discussions, and site surveys.

The main conclusion is that process monitoring system results improve faster when success is defined numerically and by application. A generic goal such as “better visibility” is rarely enough to guide instrument selection, control integration, or maintenance planning.

Select the Right Instrumentation and Control Architecture

The quality of monitoring results depends heavily on the full measurement chain: sensor, transmitter, signal transmission, control platform, data historian, and operator interface. If one part is weak, overall performance drops. For instance, a high-grade oxygen measurement system can still produce poor decisions when sample lines are too long, response is delayed by 20–30 seconds, or scaling in the control system is incorrect.
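
The two failure modes named above, sample line delay and incorrect scaling, can be estimated with simple arithmetic. The sketch below assumes a linear 4–20 mA signal and plug flow through the sample line. The function names, the plausibility band, and the example dimensions are illustrative assumptions.

```python
import math


def scale_4_20ma(current_ma: float, range_low: float, range_high: float) -> float:
    """Linearly map a 4-20 mA signal to engineering units."""
    # Plausibility band is an assumed value; adjust to site practice.
    if not 3.8 <= current_ma <= 20.5:
        raise ValueError("signal outside plausible 4-20 mA band")
    return range_low + (current_ma - 4.0) / 16.0 * (range_high - range_low)


def sample_line_delay_s(length_m: float, inner_diameter_mm: float,
                        flow_l_per_min: float) -> float:
    """Transport delay of a sample line, assuming plug flow."""
    if flow_l_per_min <= 0:
        raise ValueError("flow_l_per_min must be positive")
    radius_m = inner_diameter_mm / 1000.0 / 2.0
    volume_l = math.pi * radius_m ** 2 * length_m * 1000.0  # m^3 -> litres
    return volume_l / flow_l_per_min * 60.0


# The same 12 mA signal read with the correct and a wrong configured range.
correct = scale_4_20ma(12.0, 0.0, 25.0)  # transmitter ranged 0-25 % O2
wrong = scale_4_20ma(12.0, 0.0, 21.0)    # control system scaled 0-21 % O2
print(f"O2 reading: {correct:.2f} % vs mis-scaled {wrong:.2f} %")

# Hypothetical line: 30 m long, 4 mm inner diameter, 1 L/min sample flow.
delay = sample_line_delay_s(30.0, 4.0, 1.0)
print(f"Sample transport delay: {delay:.1f} s")
```

With these assumed dimensions the transport delay alone lands in the 20–30 second range, before any analyzer response time is added.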

Selection should begin with process conditions rather than catalog preference. Pressure range, temperature extremes, vibration, dust, humidity, corrosive media, and required cleaning cycles all affect instrument suitability. In many industrial environments, a device that performs well in a laboratory can fail quickly in a 24/7 production line if ingress protection, enclosure material, or installation position is not matched properly.

A second key decision is architecture. Some sites need a centralized industrial control system with unified dashboards and historian functions. Others benefit from distributed monitoring nodes near equipment clusters. For plants with 50–200 measurement points, centralized visibility can simplify quality review. For large facilities with multiple process areas, a hybrid structure often improves resilience and maintenance speed.

Buyers should also assess how the system handles calibration, redundancy, and diagnostics. In critical loops, adding a second analyzer or transmitter can reduce data loss risk during maintenance. In lower-risk applications, built-in diagnostics, event logging, and sensor health alerts may be enough to maintain acceptable reliability without doubling hardware cost.

Selection priorities that improve results

- Match measurement principle to the medium and process dynamics, not just to the nominal range.

- Check installation conditions, including straight pipe length, sampling path, power quality, and ambient temperature.

- Confirm communication compatibility with PLC, DCS, SCADA, or industrial IoT platforms.

- Review maintenance burden, including consumables, calibration gas needs, probe cleaning, and spare parts lead time.

- Evaluate lifecycle support over 3–5 years, not only the initial purchase price.

Comparison of common architecture choices

Before procurement, many teams compare architecture options mainly on budget. A more useful approach is to compare how each setup affects accuracy, responsiveness, serviceability, and compliance readiness.

For most B2B buyers, the best choice is not the most complex system. It is the one that aligns process criticality, data use cases, and service capacity. A simpler architecture with correct sensors and disciplined maintenance often outperforms an overbuilt system that is difficult to sustain.

Improve Data Quality, Alarm Logic, and Operational Usefulness

Even well-selected instrumentation can deliver disappointing results if the data is noisy, delayed, or poorly interpreted. Data quality problems often come from sensor drift, unstable sampling, electrical noise, poor grounding, scaling errors, or missing compensation factors. In gas quality control and emission control systems, these issues may not be obvious until a batch fails, a report is questioned, or a trend becomes impossible to trust.

A strong process monitoring system should distinguish between raw values, validated values, and actionable values. Raw signals are useful for diagnostics, but operators usually need filtered and contextualized information. For example, a flow signal may need temperature compensation, and oxygen readings may need correlation with load, fuel quality, or excess air settings before they support meaningful process decisions.
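
The distinction between raw, validated, and actionable values can be sketched as a small pipeline. The example below assumes a volumetric gas flow meter and ideal-gas behavior, and converts the actual flow to reference conditions. The `Reading` structure, the range limits, and the reference conditions are assumptions for illustration.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Reading:
    raw: float                   # value as received from the transmitter
    validated: Optional[float]   # raw value after a range check
    actionable: Optional[float]  # compensated value for decisions


def validate(raw: float, low: float, high: float) -> Optional[float]:
    """Reject values outside the physically plausible range."""
    return raw if low <= raw <= high else None


def compensate_gas_flow(q_actual_m3h: float, temp_c: float,
                        pressure_kpa_abs: float,
                        ref_temp_c: float = 0.0,
                        ref_pressure_kpa: float = 101.325) -> float:
    """Convert actual volumetric gas flow to reference conditions (ideal gas)."""
    temp_k = temp_c + 273.15
    ref_temp_k = ref_temp_c + 273.15
    return q_actual_m3h * (pressure_kpa_abs / ref_pressure_kpa) * (ref_temp_k / temp_k)


def process(raw_flow: float, temp_c: float, pressure_kpa_abs: float) -> Reading:
    validated = validate(raw_flow, 0.0, 500.0)  # assumed meter range in m3/h
    actionable = None
    if validated is not None:
        actionable = compensate_gas_flow(validated, temp_c, pressure_kpa_abs)
    return Reading(raw_flow, validated, actionable)


warm = process(100.0, 27.0, 101.325)
print(f"raw={warm.raw} m3/h, compensated={warm.actionable:.2f} m3/h at reference")
print(process(600.0, 27.0, 101.325))  # out of range: no validated or actionable value
```

Keeping the raw value alongside the derived ones preserves it for diagnostics while operators see only the compensated figure.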

Alarm management is another major improvement area. Too many plants still use fixed thresholds that trigger frequent nuisance alarms. A better approach is to classify alerts into at least 3 levels: advisory, action-required, and critical shutdown-related. This makes it easier for operators to prioritize, reduces alarm fatigue, and improves response discipline during busy operating periods.
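
A minimal sketch of the three-level classification follows, assuming a high-side alarm on a single variable. The level names mirror the text. The limit values and the function `classify_high` are hypothetical.

```python
from enum import IntEnum


class AlarmLevel(IntEnum):
    NORMAL = 0
    ADVISORY = 1
    ACTION_REQUIRED = 2
    CRITICAL = 3


def classify_high(value: float, advisory: float, action: float,
                  critical: float) -> AlarmLevel:
    """Classify a value against three ascending high-side limits."""
    if not advisory < action < critical:
        raise ValueError("limits must increase: advisory < action < critical")
    if value >= critical:
        return AlarmLevel.CRITICAL
    if value >= action:
        return AlarmLevel.ACTION_REQUIRED
    if value >= advisory:
        return AlarmLevel.ADVISORY
    return AlarmLevel.NORMAL


# Hypothetical limits for a furnace temperature in degrees C.
LIMITS = {"advisory": 850.0, "action": 880.0, "critical": 920.0}

for temp in (840.0, 860.0, 890.0, 925.0):
    level = classify_high(temp, **LIMITS)
    print(f"{temp:.0f} C -> {level.name}")
```

Because the levels are ordered integers, a display or historian can sort and filter alerts by severity without extra mapping.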

Trend design also matters. Instead of displaying 30 variables on one crowded screen, create role-based views. Operators need immediate process deviations. Quality personnel need batch or shift summaries. Managers need daily or weekly exception-based dashboards. This segmentation improves usability and turns monitoring data into operational action rather than passive storage.

Practical methods to improve data usefulness

- Validate signal scaling during commissioning and again after any PLC, DCS, or transmitter replacement.

- Use timestamp consistency checks when integrating analyzers, remote I/O, and historians from different vendors.

- Set deadbands and alarm delays where the process naturally fluctuates, often in the 2–10 second range for fast loops.

- Create at least 2 dashboard layers: shift-level operation and management-level exception review.

- Log maintenance events together with process data so drift, cleaning, and recalibration can be linked to trend changes.
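
The deadband and alarm delay from the list above can be sketched as a small state machine. This is an illustrative example, not a vendor implementation: the class `DebouncedHighAlarm`, the setpoint, and the sample data are assumptions.

```python
class DebouncedHighAlarm:
    """High alarm with an on-delay and a deadband (hysteresis) for clearing."""

    def __init__(self, setpoint: float, deadband: float, on_delay_s: float):
        self.setpoint = setpoint
        self.deadband = deadband
        self.on_delay_s = on_delay_s
        self.active = False
        self._above_since = None  # time the value first reached the setpoint

    def update(self, value: float, t_s: float) -> bool:
        if self.active:
            # Clear only once the value falls below setpoint minus deadband.
            if value < self.setpoint - self.deadband:
                self.active = False
                self._above_since = None
        elif value >= self.setpoint:
            if self._above_since is None:
                self._above_since = t_s
            # Raise only after the value has stayed high for the full delay.
            if t_s - self._above_since >= self.on_delay_s:
                self.active = True
        else:
            self._above_since = None
        return self.active


# Hypothetical samples as (time in seconds, value).
samples = [(0, 79.0), (1, 81.0), (2, 79.5), (3, 81.0), (4, 82.0), (5, 81.0),
           (6, 81.0), (7, 81.0), (8, 81.5), (9, 79.0), (10, 77.0)]

alarm = DebouncedHighAlarm(setpoint=80.0, deadband=2.0, on_delay_s=5.0)
states = [alarm.update(value, t) for t, value in samples]
print(states)
```

In this run the brief spike at t = 1 s raises no alarm, the sustained excursion starting at t = 3 s raises one at t = 8 s, and the alarm clears only when the value drops below 78.0.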

Common causes of poor monitoring results

The following table highlights where system performance is often lost after installation. These points are especially relevant for plants upgrading legacy industrial control equipment.

The most important takeaway is that monitoring results improve when data is engineered for action. Cleaner values, smarter alarms, and clearer dashboards often deliver faster benefit than adding more measurement points.

Strengthen Implementation, Calibration, and Lifecycle Support

A process monitoring upgrade succeeds only when implementation is controlled from design through handover. As a rule of thumb in many industrial projects, roughly 20% of the result comes from product choice and 80% from engineering detail, installation quality, commissioning discipline, and post-startup optimization. Poor cable routing, missing tagging, incomplete loop checks, or undocumented setpoint changes can quickly reduce expected benefits.

A reliable implementation plan usually follows 5 stages: site survey, design confirmation, installation, commissioning, and performance review after 30–90 days of operation. For larger systems, additional factory acceptance and site acceptance activities may be needed. These checkpoints are especially important where emission measurement systems, oxygen analyzers, or gas quality measurement loops support compliance and safety-related actions.

Calibration strategy should also be defined in advance. Some instruments need daily functional checks, while others can run for 3–6 months between routine calibration events. The right interval depends on drift characteristics, process contamination, operating temperature swings, and criticality of the monitored variable. Skipping this planning stage often leads to higher lifecycle cost than the original procurement team expected.
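
One simple way to reason about the interval is to estimate drift from the last as-found calibration error and project when it would reach the allowed tolerance. The sketch below assumes linear drift, which real instruments may not follow. The function name, safety factor, and interval limits are hypothetical.

```python
def suggested_interval_days(as_found_error_pct: float,
                            days_since_calibration: float,
                            tolerance_pct: float,
                            safety_factor: float = 0.5,
                            min_days: float = 1.0,
                            max_days: float = 180.0) -> float:
    """Estimate a calibration interval from observed drift (linear assumption)."""
    if days_since_calibration <= 0 or tolerance_pct <= 0:
        raise ValueError("days_since_calibration and tolerance_pct must be positive")
    drift_per_day = abs(as_found_error_pct) / days_since_calibration
    if drift_per_day == 0:
        return max_days  # no measurable drift: use the longest allowed interval
    days_to_tolerance = tolerance_pct / drift_per_day
    # Apply the safety factor, then keep the result within the allowed limits.
    return max(min_days, min(max_days, days_to_tolerance * safety_factor))


# Hypothetical case: 0.3 % as-found error after 90 days, tolerance of 0.5 %.
interval = suggested_interval_days(0.3, 90.0, 0.5)
print(f"Suggested calibration interval: {interval:.0f} days")
```

A result like this is only a starting point. Contamination, temperature swings, and the criticality of the variable can all justify a shorter interval than the drift estimate suggests.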

Service support is another decision factor for project managers and distributors. Spare parts availability, response time, remote diagnostics capability, and training materials all affect long-term results. A system that is easy to maintain with standard tools and clear documentation often delivers better uptime than a more advanced platform that only a small number of specialists can support.

Recommended implementation checklist

- Complete a site condition review covering process media, ambient environment, access, utilities, and signal routing.

- Confirm I/O mapping, tag naming, engineering units, scaling, and alarm priorities before startup.

- Perform loop testing for 100% of critical points and spot-check noncritical channels before handover.

- Document baseline values during the first 7–14 days to identify drift, fouling, or unstable process behavior.

- Schedule refresher training for operators and maintenance personnel within the first 30 days after commissioning.
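
The baseline step in the checklist can be sketched with the standard library alone. The example below records a mean and standard deviation from the first days of operation and flags later values that move outside a chosen band. The daily values and the 3-sigma threshold are assumptions for illustration.

```python
from statistics import mean, stdev


def build_baseline(values: list[float]) -> tuple[float, float]:
    """Return (mean, sample standard deviation) of the baseline period."""
    if len(values) < 2:
        raise ValueError("need at least two baseline values")
    return mean(values), stdev(values)


def deviates(value: float, baseline_mean: float, baseline_std: float,
             k: float = 3.0) -> bool:
    """True if the value lies more than k standard deviations from baseline."""
    return abs(value - baseline_mean) > k * baseline_std


# Hypothetical daily averages from the first 7 days after commissioning.
first_week = [20.9, 21.0, 21.1, 21.0, 20.9, 21.1, 21.0]
base_mean, base_std = build_baseline(first_week)
print(f"baseline mean={base_mean:.2f}, std={base_std:.3f}")

for later_value in (21.1, 21.4):
    flag = deviates(later_value, base_mean, base_std)
    print(f"{later_value}: outside baseline band = {flag}")
```

A flagged value does not say whether the cause is drift, fouling, or a real process change, which is why the maintenance log needs to be reviewed alongside the trend.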

Typical lifecycle planning factors

The table below can help procurement teams and engineering leads compare system support requirements before approval. It is especially useful when evaluating total cost beyond the initial hardware quote.

When companies plan implementation and support in this level of detail, process monitoring system results become more stable over time. This is often where the difference appears between a system that performs well for 3 months and one that remains reliable for 3 years.

Common Buying Questions, Risks, and Next-Step Guidance

For researchers, distributors, project leaders, and decision-makers, purchasing a process monitoring system involves more than comparing quotations. The right evaluation should cover technical fit, service scope, integration complexity, operating cost, and risk exposure. In many projects, the lowest upfront price is not the lowest total cost once calibration time, false alarms, unplanned shutdowns, and reporting gaps are considered.

A frequent risk is underestimating application detail. Gas quality measurement, oxygen analysis, emission monitoring, and industrial online control each have different demands for response time, sample handling, and verification routines. Another risk is buying a capable system without assigning ownership. If no one is responsible for alarm review, calibration status, and trend analysis, even strong instrumentation may produce weak outcomes.

For commercial teams and financial approvers, it helps to evaluate projects using 4 lenses: operational impact, compliance relevance, maintenance workload, and upgrade scalability. This framework supports decisions whether the site is replacing obsolete instruments, expanding capacity, or building a new digital monitoring layer around an existing industrial control system.

The most successful projects usually begin with a clear problem statement: reduce quality deviation, improve emission control system reliability, stabilize oxygen measurement, or shorten fault response time. From there, teams can request a more precise proposal, site survey, or phased upgrade path instead of purchasing equipment in isolation.

FAQ: How should buyers compare system proposals?

Compare proposals by measurement fit, integration method, maintenance demand, included commissioning scope, documentation depth, and spare parts planning. At minimum, ask for a point list, communication plan, calibration expectation, startup scope, and recommended consumables for the first 12 months.

FAQ: Which applications need the most attention to data quality?

Applications involving gas quality control, oxygen measurement systems, emission measurement systems, and safety-related variables usually need tighter data discipline. These often require stronger sample conditioning, documented calibration routines, and better alarm logic than general utility monitoring.

FAQ: What are the most common implementation mistakes?

The most common mistakes are unclear success metrics, poor installation detail, no baseline data collection after startup, weak operator training, and missing maintenance ownership. These issues often appear within the first 30–60 days and can usually be corrected if they are reviewed early.

FAQ: How long does a typical upgrade take?

Small upgrades may be completed in 1–3 weeks including configuration and site testing. More complex projects involving analyzers, industrial control equipment integration, or multi-area deployment may require 4–12 weeks depending on engineering depth, shutdown windows, and commissioning needs.

Better process monitoring results come from disciplined system design, application-matched instrumentation, usable data, and dependable lifecycle support. Whether your priority is gas quality measurement, oxygen control, emissions compliance, or broader industrial process measurement, a structured evaluation can reduce risk and improve long-term performance. If you are planning a new project or upgrading an existing monitoring platform, contact us to discuss your operating conditions, compare solution options, and get a customized recommendation for your site.
