A CO analyzer may pass calibration and still miss real risk

A CO analyzer can pass calibration yet still fail to reveal real process or safety risk when field conditions shift. For operators, safety managers, project leaders, and buyers comparing a hydrogen analyzer, NH3 analyzer, NOX analyzer, SO2 analyzer, CH4 analyzer, CO2 analyzer, infrared gas analyzer, or oxygen analyzer, understanding this gap is critical to choosing equipment that supports reliable monitoring, compliance, and sound investment decisions.

Why a calibrated CO analyzer can still miss danger in real operations

In instrumentation projects across manufacturing, energy, environmental monitoring, laboratories, and automation systems, calibration is often treated as proof of reliability. In practice, calibration confirms performance only under defined conditions, over a defined range, and at a defined time. A CO analyzer may pass a bench check in 15–30 minutes and still deliver misleading readings once ambient temperature, humidity, pressure, sample flow, or interfering gases change in the field.

This gap matters because carbon monoxide risk is rarely static. Process startups, load changes, combustion instability, ventilation failure, and mixed gas streams can all create short-duration excursions. If the analyzer response lags by 20–60 seconds, drifts between service intervals, or cross-reacts with hydrogen or hydrocarbons, operators may see a compliant-looking value while real exposure or process upset is already developing.
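To illustrate how response lag can hide a short excursion, the sketch below models the analyzer as a simple first-order lag. That model is an assumption, not a property of any particular instrument, and the spike shape, the two T90 values, and the function name `simulate_first_order` are hypothetical.

```python
import math

def simulate_first_order(true_ppm, dt_s, t90_s):
    """Return the modeled analyzer reading for each sample in true_ppm.

    Assumes a first-order lag; the time constant follows from T90
    as tau = T90 / ln(10).
    """
    tau = t90_s / math.log(10)
    alpha = 1.0 - math.exp(-dt_s / tau)  # per-sample smoothing factor
    reading = true_ppm[0]
    out = []
    for value in true_ppm:
        reading += alpha * (value - reading)
        out.append(reading)
    return out

# Hypothetical excursion: 10 ppm baseline, a 20 s spike to 200 ppm, then recovery.
dt = 1.0
true_ppm = [10.0] * 30 + [200.0] * 20 + [10.0] * 60

fast = simulate_first_order(true_ppm, dt, t90_s=10.0)
slow = simulate_first_order(true_ppm, dt, t90_s=60.0)

print(f"true peak:                 {max(true_ppm):.0f} ppm")
print(f"peak seen with T90 = 10 s: {max(fast):.0f} ppm")
print(f"peak seen with T90 = 60 s: {max(slow):.0f} ppm")
```

Under these assumptions the slower analyzer reports a peak well below the true value, so an alarm threshold set between the two peaks would never trip.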

For safety managers and quality teams, the issue is not only whether the instrument reads correctly in a calibration cylinder test. The real question is whether it preserves accuracy at the point of use, within the expected concentration band, and over the full operating cycle. In many facilities, that means continuous duty for 8–24 hours per day, seasonal temperature variation, and multiple upset scenarios rather than one stable laboratory condition.

For commercial evaluators and financial approvers, the hidden risk is total cost. A lower-priced analyzer that requires more frequent recalibration, replacement of filters every few weeks, or repeated troubleshooting can become more expensive over 12–36 months than a more robust system. This is especially true in distributed monitoring networks where downtime, false alarms, and manual verification consume labor and delay decisions.
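The cost argument can be checked with simple arithmetic. The sketch below compares two analyzers over 12, 24, and 36 months. Every price, service count, and hourly rate is a made-up placeholder to be replaced with quoted figures.

```python
def annual_service_cost(calibrations, cost_per_calibration,
                        filters, cost_per_filter,
                        downtime_hours, cost_per_downtime_hour):
    """Recurring cost per year from calibration, filters, and downtime."""
    return (calibrations * cost_per_calibration
            + filters * cost_per_filter
            + downtime_hours * cost_per_downtime_hour)

def lifecycle_cost(purchase, annual_cost, months):
    """Purchase price plus recurring cost over the given number of months."""
    return purchase + annual_cost * months / 12.0

# Hypothetical figures only; no real product or price list is implied.
low_priced_annual = annual_service_cost(12, 150, 17, 40, 20, 100)
robust_annual = annual_service_cost(4, 150, 4, 40, 4, 100)

for months in (12, 24, 36):
    low = lifecycle_cost(4000, low_priced_annual, months)
    robust = lifecycle_cost(9000, robust_annual, months)
    cheaper = "low-priced" if low < robust else "robust"
    print(f"{months} months: low-priced {low:.0f}, robust {robust:.0f} -> {cheaper} is cheaper")
```

With these placeholder inputs the lower-priced unit is cheaper at 12 months and more expensive at 24 and 36 months. The crossover point depends entirely on the service assumptions entered.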

What calibration confirms, and what it does not

A calibration event usually confirms zero point, span point, and instrument response against a reference gas. It does not automatically validate sample conditioning efficiency, line losses, sensor poisoning resistance, field vibration tolerance, or the analyzer’s behavior under rapid concentration swings. In other words, calibration is necessary, but it is only one layer of risk control.

- A passed calibration shows the analyzer matched a known reference during the test window, often at 1–2 points rather than across the entire operating range.

- It does not prove immunity to cross-sensitivity from gases such as H2, CH4, NOX, SO2, or NH3 in mixed industrial streams.

- It does not ensure the sample transport system avoids condensation, leakage, adsorption, or particulate loading over weeks or months.

- It does not guarantee the response time and alarm logic are fast enough for process safety or confined-space protection.
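The first point in the list above can be shown numerically. The sketch below applies a zero and span calibration to a hypothetical sensor with mild nonlinearity. The `raw_signal` curve and its coefficients are invented for illustration and do not describe any real analyzer.

```python
def raw_signal(ppm):
    """Hypothetical sensor output that flattens slightly at higher concentration."""
    return 0.02 + 0.01 * ppm - 0.000002 * ppm ** 2

def two_point_calibrate(raw_zero, raw_span, span_ppm):
    """Return a raw-to-ppm conversion fitted through the zero and span points only."""
    gain = span_ppm / (raw_span - raw_zero)
    return lambda raw: (raw - raw_zero) * gain

SPAN_PPM = 500.0
to_ppm = two_point_calibrate(raw_signal(0.0), raw_signal(SPAN_PPM), SPAN_PPM)

for true_ppm in (0.0, 125.0, 250.0, 375.0, 500.0):
    shown = to_ppm(raw_signal(true_ppm))
    print(f"true {true_ppm:5.0f} ppm -> reads {shown:6.1f} ppm (error {shown - true_ppm:+.1f})")
```

The zero and span points read correctly, so the calibration passes, while the mid-range readings carry an error that only a multi-point check would expose.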

Which field conditions create the biggest measurement gap

Digital transformation is reshaping the instrumentation industry, but field gas analysis still depends on practical installation details. A CO analyzer installed near a boiler, furnace, reformer, engine exhaust, tunnel ventilation point, laboratory process hood, or environmental stack sees far more variation than a calibration bench. The most common gap appears when the process matrix changes faster than the instrument or sample system can adapt.

Temperature and moisture are two major causes. If sample gas cools below its dew point in the transport line, water can absorb soluble components, block filters, or alter gas concentration before it reaches the sensor. In many projects, a 5°C–15°C drop along the sample path is enough to change conditioning behavior. For infrared gas analyzer systems, dirty optics or condensation may also affect signal stability and increase maintenance frequency.
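A rough dew point check along the sample path might look like the sketch below. It estimates the water dew point from the sample's water content using the Magnus approximation over liquid water, then flags line positions that fall too close to it. The water fraction, the temperature profile, and the 5 °C margin are hypothetical, and the approximation loses accuracy at high dew points, so treat the result as a screening value only.

```python
import math

# Magnus approximation over liquid water (one commonly used parameter set).
A = 17.62
B = 243.12   # degrees C
E0 = 6.112   # hPa

def dew_point_c(water_vol_fraction, pressure_hpa=1013.25):
    """Estimate the water dew point of a gas from its water volume fraction."""
    partial_hpa = water_vol_fraction * pressure_hpa
    g = math.log(partial_hpa / E0)
    return B * g / (A - g)

def condensation_risk(line_temps_c, dew_c, margin_c=5.0):
    """Return (index, temperature) for points closer than margin_c to the dew point."""
    return [(i, t) for i, t in enumerate(line_temps_c) if t < dew_c + margin_c]

# Hypothetical case: 10 vol% water, temperatures measured from probe to analyzer.
dew = dew_point_c(0.10)
line_temps = [120.0, 95.0, 70.0, 55.0, 48.0]
flagged = condensation_risk(line_temps, dew)

print(f"estimated dew point: {dew:.1f} C")
print(f"points at risk: {flagged}")
```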

Cross-interference is another major source of false confidence. In hydrogen-rich processes, a CO analyzer may read high or low if its sensing principle is not suited to the gas matrix; some electrochemical CO cells, for example, also respond to hydrogen. The same procurement review often includes a hydrogen analyzer, oxygen analyzer, CO2 analyzer, NH3 analyzer, NOX analyzer, SO2 analyzer, or CH4 analyzer because buyers need a full picture of combustion efficiency, emissions control, or process composition. One analyzer cannot be judged in isolation.
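As a first-order way to reason about cross-interference, the sketch below treats each interferent as adding a linear contribution to the CO reading. The linear additive model and every coefficient are assumptions for illustration; real cross-sensitivity values come from the sensor datasheet or from testing on the actual gas matrix.

```python
def apparent_co_ppm(true_co_ppm, interferents_ppm, cross_sensitivity):
    """Modeled CO reading with additive linear cross-sensitivities.

    cross_sensitivity maps a gas name to ppm of CO reading per ppm of that gas.
    Gases without an entry are assumed to have no effect.
    """
    bias = sum(cross_sensitivity.get(gas, 0.0) * ppm
               for gas, ppm in interferents_ppm.items())
    return true_co_ppm + bias

# Hypothetical coefficients and stream composition.
cross = {"H2": 0.3, "CH4": 0.0}
stream = {"H2": 100.0, "CH4": 500.0}

reading = apparent_co_ppm(20.0, stream, cross)
print(f"true CO 20.0 ppm, modeled reading {reading:.1f} ppm")
```

A calibration cylinder containing only CO in a balance gas would not reveal this bias, because the interferent is absent during the test.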

Response dynamics also matter. A sensor that is acceptable for trend monitoring may be too slow for interlock support or emergency warning. If the application requires action within 10–30 seconds, the full loop must be reviewed: probe, filter, heated line if used, pump, conditioning module, analyzer cell, and controller logic. Project managers often underestimate this chain and focus only on the analyzer specification sheet.
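The loop review described above can start as a simple time budget. The sketch below estimates sample line transport delay from line volume and flow, assuming plug flow, then adds the other stages. The line dimensions, flow rate, and per-stage delays are hypothetical placeholders.

```python
import math

def transport_delay_s(line_length_m, inner_diameter_mm, flow_l_per_min):
    """Plug-flow transport time through a sample line, in seconds."""
    radius_m = inner_diameter_mm / 2000.0
    volume_l = math.pi * radius_m ** 2 * line_length_m * 1000.0
    return volume_l / flow_l_per_min * 60.0

# Hypothetical loop: 20 m of 4 mm ID line at 1 L/min, plus assumed stage delays.
loop_s = {
    "sample line transport": transport_delay_s(20.0, 4.0, 1.0),
    "filter and conditioning": 5.0,
    "analyzer T90": 15.0,
    "controller scan and alarm delay": 2.0,
}

required_s = 30.0
total_s = sum(loop_s.values())

for stage, seconds in loop_s.items():
    print(f"{stage:32s} {seconds:5.1f} s")
verdict = "within" if total_s <= required_s else "exceeds"
print(f"total {total_s:.1f} s {verdict} the {required_s:.0f} s requirement")
```

In this example the analyzer alone meets the requirement, but the full loop does not, which is the gap a specification sheet review tends to miss.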

Typical field risk factors to review before purchase

The table below helps operators, quality personnel, and procurement teams compare common causes of missed CO risk and the related verification point during selection or site acceptance.

This comparison shows why a passed calibration certificate should never be the only approval document. A stronger acceptance approach includes at least 3 layers: bench calibration review, site condition review, and dynamic process verification during startup or simulated upset.

A practical 4-step validation routine

- Define the true operating matrix, including normal gas composition, upset gases, moisture level, and expected temperature band.

- Match the sensing principle and sample system to that matrix rather than to catalog generalities.

- Test response time and repeatability under at least 2–3 realistic concentration changes, not one static point only.

- Set maintenance and recalibration intervals based on contamination risk and operating hours, not only on supplier default guidance.
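For the response-time step in the routine above, T90 can be estimated from logged step-change data. The sketch below finds the time at which the reading first reaches 90% of the step, using linear interpolation between samples, and reports the spread across runs as a crude repeatability indicator. The three logs are invented sample data, and `t90_seconds` is a hypothetical helper, not part of any analyzer software.

```python
def t90_seconds(times_s, readings, baseline, final):
    """Time at which the reading first reaches 90 % of the step from baseline to final.

    times_s is measured from the moment the test gas is applied.
    Returns None if the 90 % level is never reached.
    """
    target = baseline + 0.9 * (final - baseline)
    rising = final > baseline
    for i, value in enumerate(readings):
        crossed = value >= target if rising else value <= target
        if not crossed:
            continue
        if i == 0:
            return times_s[0]
        prev = readings[i - 1]
        frac = (target - prev) / (value - prev)  # linear interpolation
        return times_s[i - 1] + frac * (times_s[i] - times_s[i - 1])
    return None

# Invented logs from three step tests, 0 to 100 ppm, sampled every 5 s.
times = [0, 5, 10, 15, 20, 25, 30]
runs = [
    [0, 40, 70, 85, 93, 97, 100],
    [0, 38, 68, 84, 92, 97, 100],
    [0, 42, 72, 87, 94, 98, 100],
]

t90s = [t90_seconds(times, run, baseline=0.0, final=100.0) for run in runs]
spread = max(t90s) - min(t90s)

print("T90 per run (s):", [round(t, 2) for t in t90s])
print(f"spread across runs: {spread:.2f} s")
```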

How to compare a CO analyzer with related gas analysis needs

Many B2B buyers do not purchase a CO analyzer alone. They compare it within a wider gas analysis package that may include a hydrogen analyzer for process purity, an oxygen analyzer for combustion control, a CO2 analyzer for efficiency balance, or NH3, NOX, and SO2 analyzers for emissions and treatment performance. This broader view improves selection because the process objective, not just the instrument list, becomes clear.

For operators, the decision centers on reliability and usability. For commercial evaluators, it is compatibility with project scope. For finance teams, it is lifecycle cost over 1–3 years. For distributors and agents, it is serviceability, spare availability, and whether one platform can cover multiple customer sectors such as industrial manufacturing, power, environmental monitoring, medical testing, laboratory analysis, or construction-related safety monitoring.

Technology choice should reflect the gas matrix and the decision consequence. An infrared gas analyzer may work well for certain composition measurements, while electrochemical or other principles may be preferred in portable safety applications. The best answer depends on concentration range, interference profile, sampling method, and whether the goal is process optimization, regulatory reporting, quality assurance, or worker safety.

The table below summarizes how buyers commonly position different analyzer types during early project evaluation. It is not a replacement for application engineering, but it is a useful first filter when reviewing 3–5 supplier proposals.

The procurement lesson is simple: compare by use case, not by label. Two analyzers with similar headline accuracy may create very different operating outcomes if one is easier to maintain, more tolerant of contamination, or better integrated into the plant control architecture.

Three questions procurement teams should ask suppliers

- What is the expected performance under our actual gas matrix, including interference gases and moisture level, rather than under generic calibration conditions?

- What are the normal service intervals for filters, sensors, optical parts, or pumps under continuous operation?

- How long are typical lead times for a standard configuration (for example, 2–4 weeks) compared with customized sampling or enclosure options that need engineered delivery?

What to check before approval: specification, compliance, cost, and implementation

A sound analyzer purchase in the instrumentation sector should be evaluated through at least 5 dimensions: measurement fit, environmental fit, integration fit, compliance fit, and service fit. This approach works well for project leaders and engineering managers because it reduces late-stage change orders. It also helps financial approvers see why a specification-based purchase can fail if installation and maintenance assumptions were not priced from the start.

Measurement fit means more than stated accuracy. Buyers should confirm range, repeatability, response time, warm-up period, drift behavior, and required calibration frequency. Environmental fit includes enclosure suitability, vibration tolerance, ambient temperature range, ingress protection level where relevant, and whether hazardous-area requirements apply. In many industrial projects, these points matter more than small differences in catalog precision.

Compliance fit should be reviewed against the application, not assumed. Depending on industry and region, projects may need alignment with safety practices, metrology procedures, emissions monitoring requirements, or internal quality systems. Even when no single named certification is mandatory, documentation quality matters: calibration procedure, traceability approach, maintenance manual, wiring information, and site acceptance records all influence audit readiness.

Implementation fit, which spans integration and service, often determines whether the system succeeds in the first 30–90 days. A capable analyzer with poor commissioning support can create delays in cable termination, control logic setup, sample line routing, or training. Distributors and agents should pay close attention to spare kit planning, commissioning scope, and whether local service can handle troubleshooting without waiting weeks for remote support.
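One way to make the five dimensions comparable across bids is a weighted score. The sketch below is a minimal example; the weights, the 1 to 5 scale, and both proposals are hypothetical and should be set by the project team.

```python
# Hypothetical weights for the five fit dimensions; they must sum to 1.0.
WEIGHTS = {
    "measurement": 0.30,
    "environmental": 0.20,
    "integration": 0.15,
    "compliance": 0.15,
    "service": 0.20,
}

def weighted_score(scores, weights=WEIGHTS):
    """Combine per-dimension scores (1 = poor, 5 = strong) into one number."""
    missing = set(weights) - set(scores)
    if missing:
        raise ValueError(f"missing scores for: {sorted(missing)}")
    return sum(weights[name] * scores[name] for name in weights)

# Invented proposals: A has the best headline accuracy, B is more balanced.
proposals = {
    "A": {"measurement": 5, "environmental": 2, "integration": 3,
          "compliance": 4, "service": 2},
    "B": {"measurement": 4, "environmental": 4, "integration": 4,
          "compliance": 4, "service": 4},
}

results = {name: weighted_score(scores) for name, scores in proposals.items()}
for name, score in sorted(results.items(), key=lambda item: -item[1]):
    print(f"proposal {name}: {score:.2f}")
```

A score like this does not replace engineering review, but it records why a proposal with the strongest catalog accuracy can still rank below a more balanced one.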

A practical approval checklist for B2B projects

The checklist below is useful during technical clarification, bid comparison, and pre-purchase approval. It converts a broad discussion into reviewable items for engineering, safety, procurement, and finance.

Recommended for You

Search Categories

Search Categories

Latest Article

- New China Battery Recycling Rules Effective May 6, 2026New China battery recycling rules (effective May 6, 2026) mandate traceability codes & EPR declarations for BMS sensors, thermal controllers, and wireless modules — critical for EU/Korea exports. Act now to avoid supply chain disruption.Posted by:

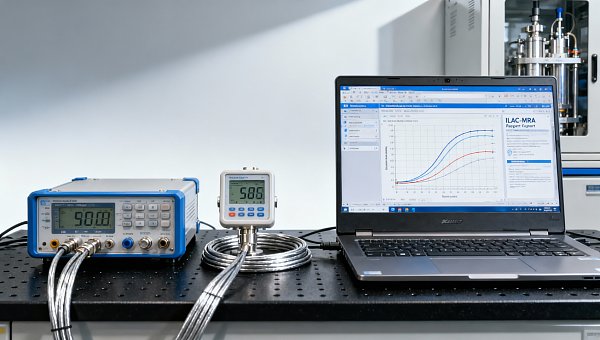

- PTB Launches CalCloud AI for ILAC-MRA Remote CalibrationPTB Launches CalCloud AI for ILAC-MRA Remote Calibration — enabling real-time, standards-compliant pressure, temperature & electrical calibration with instant ILAC-MRA reports.Posted by:

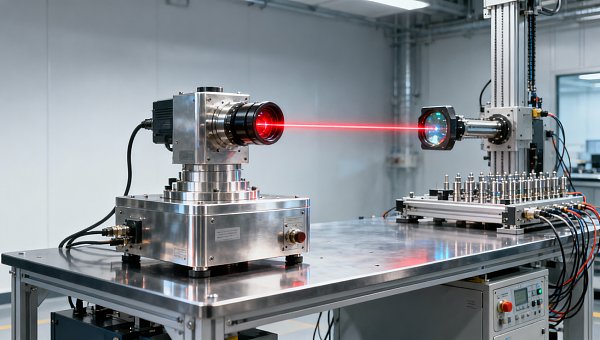

- US BIS Adds 12 High-Precision Calibration Devices to Export Control ListUS BIS adds 12 high-precision calibration devices to export control list—impacting semiconductor, aerospace & metrology firms. Get urgent compliance insights now.Posted by:

Please give us a message