How to Compare Custom Gas Analysis Options for Complex Process Streams

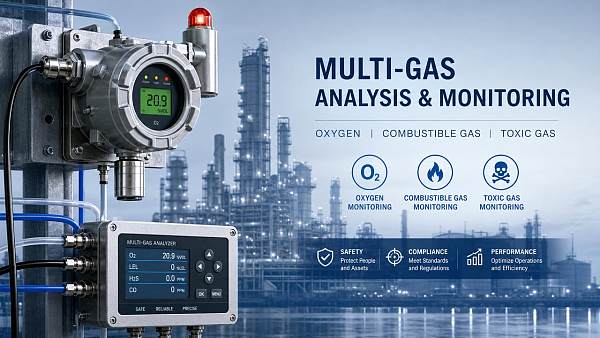

Selecting the right custom gas analysis solution for complex process streams requires more than comparing datasheets. Technical evaluators must balance measurement accuracy, response time, sample conditioning, hazardous-area compliance, and long-term maintenance demands.

This guide explains how to compare custom gas analysis options systematically, helping you identify the most reliable configuration for demanding industrial, environmental, and process-control applications.

What technical evaluators actually need to compare first

Anyone researching custom gas analysis is usually facing a practical evaluation, not looking for a generic definition. They need a structured way to compare options for a real process stream.

For technical evaluators, the first question is usually not which analyzer is most advanced. It is which configuration will produce dependable data under actual operating conditions, with acceptable risk and service burden.

That changes the comparison process. Instead of starting with technology names alone, start with stream characteristics, application objectives, performance limits, site constraints, and lifecycle demands.

If you compare custom gas analysis systems in that order, weak options become visible quickly. A technically impressive analyzer may still fail because of sample handling, contamination, pressure variation, or maintenance complexity.

Start with the process stream, not the analyzer brochure

Complex process streams rarely behave like clean calibration gas. They may contain moisture, corrosives, particulates, condensable vapors, heavy hydrocarbons, variable flow, or rapidly changing compositions.

That is why stream definition is the foundation of a useful comparison. Before evaluating suppliers, document normal composition, upset composition, pressure, temperature, flow regime, background gases, and expected contaminants.

Also define whether the stream is continuous, batch-based, cyclic, or transient. A custom gas analysis solution that performs well on stable composition can struggle when the stream changes quickly.
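One way to keep the stream definition honest is to record it as a structured checklist and see which items are still blank before any supplier comparison starts. The sketch below is only an illustration: the class name, field names, units, and example values are assumptions, not an industry template.

```python
from dataclasses import dataclass, fields
from typing import Optional


@dataclass
class StreamDefinition:
    """Hypothetical checklist of stream facts to collect before comparing suppliers."""
    normal_composition: Optional[dict] = None      # e.g. {"CH4": 85.0} in mol %
    upset_composition: Optional[dict] = None
    pressure_bar_a: Optional[float] = None
    temperature_c: Optional[float] = None
    flow_regime: Optional[str] = None
    background_gases: Optional[list] = None
    expected_contaminants: Optional[list] = None
    stream_behavior: Optional[str] = None          # continuous, batch, cyclic, transient


def undefined_items(stream):
    """Return the names of fields that have not been documented yet."""
    return [f.name for f in fields(stream) if getattr(stream, f.name) is None]


# Example values are invented for illustration only.
draft = StreamDefinition(
    normal_composition={"CH4": 85.0, "CO2": 10.0, "H2O": 5.0},
    pressure_bar_a=4.5,
    temperature_c=120.0,
    stream_behavior="cyclic",
)
print(undefined_items(draft))
```

Any item still listed, such as the upset composition here, is a gap to close before vendor proposals can be compared on equal terms.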

Technical evaluators should ask whether the analyzer must support process control, safety interlock logic, emissions compliance, product quality verification, or research-grade characterization. Each purpose changes acceptable uncertainty and response time.

If the use case is process control, speed and repeatability may matter more than the lowest detection limit. If the use case is compliance, traceability, validation, and documented performance may matter more.

Match the measurement principle to the gases and the interference profile

Most comparison mistakes happen when users focus on a preferred technology before reviewing interferences. In custom gas analysis, measurement principle must fit both the target gas and the surrounding matrix.

Common options include NDIR, FTIR, TDLAS, paramagnetic oxygen, zirconia oxygen, thermal conductivity, flame ionization detection, electrochemical sensors, mass spectrometry, and gas chromatography.

Each method has strengths and failure points. FTIR handles multicomponent analysis well, but overlapping spectra can complicate low-level measurement in mixed matrices. TDLAS offers fast response and selectivity, but only for species with a usable absorption line at an available laser wavelength.

Gas chromatography can provide strong component separation and high confidence for complex mixtures, but cycle time, carrier gas needs, and system complexity may limit suitability for fast control loops.

Electrochemical or simple sensor-based solutions can look attractive for cost reasons, yet they often introduce drift, shorter service life, and narrower operating windows in aggressive industrial streams.

The right question is not which principle is best overall. It is which principle maintains reliable selectivity, detection capability, and stability in your actual process matrix over time.

Compare measurement performance in the context of the application

Accuracy alone is not enough to compare custom gas analysis options. Technical evaluators should review repeatability, linearity, drift, rangeability, zero stability, sensitivity to background composition, and validated uncertainty.

Ask vendors to define performance under installed conditions, not only under laboratory conditions. Environmental temperature swings, vibration, sample lag, and pressure control often change real-world performance materially.

Response time also needs careful interpretation. Some suppliers cite analyzer cell response, while the full system delay is dominated by probe design, transport line length, filters, and conditioning stages.

For critical applications, request total system response from extraction point to final output signal. That figure is far more relevant than the analyzer module response listed in a catalog.
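A rough estimate of total system response can be built from the sample system geometry. The sketch below assumes plug flow in tubing (delay equals volume divided by flow), treats filters and similar cavities as ideally mixed volumes (about ln(10), or 2.3, time constants to reach 90 %), and simply adds the analyzer's own T90. The function names and example figures are hypothetical, the flow must be the actual volumetric flow at line conditions, and the additive result is an estimate rather than a dynamic model.

```python
import math


def tube_volume_cm3(inner_diameter_mm, length_m):
    """Internal volume of a tube run in cubic centimetres."""
    radius_cm = inner_diameter_mm / 10.0 / 2.0
    return math.pi * radius_cm ** 2 * (length_m * 100.0)


def system_t90_s(plug_volumes_cm3, mixed_volumes_cm3, flow_cm3_per_min, analyzer_t90_s):
    """Additive T90 estimate from extraction point to analyzer output.

    Assumptions: plug flow in tubing, ideally mixed filter/cavity volumes,
    flow given as actual volumetric flow at line pressure and temperature.
    """
    flow_cm3_per_s = flow_cm3_per_min / 60.0
    plug_delay = sum(plug_volumes_cm3) / flow_cm3_per_s
    # A well-mixed volume reaches 90 % of a step after ln(10) time constants.
    mixed_delay = sum(math.log(10.0) * v / flow_cm3_per_s for v in mixed_volumes_cm3)
    return plug_delay + mixed_delay + analyzer_t90_s


# Invented example: 30 m of 4 mm ID line, one 50 cm3 filter, 1 L/min, 5 s analyzer T90.
line = tube_volume_cm3(inner_diameter_mm=4.0, length_m=30.0)
estimate = system_t90_s([line], [50.0], flow_cm3_per_min=1000.0, analyzer_t90_s=5.0)
print(f"Estimated system T90: {estimate:.1f} s")
```

In this invented case the 5 s analyzer contributes only a small share of roughly 35 s overall, which is why the catalog figure alone says little about suitability for a control loop.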

When comparing ranges, check whether one solution covers the full operating envelope directly or relies on switching ranges, dilution, or multiple analyzers. Added complexity can affect reliability and maintenance.

Sample conditioning often determines whether the system succeeds or fails

In many installations, the analyzer is not the main problem. The sample handling system is. Poor conditioning can create bias, slow response, plugging, condensation, adsorption, or complete loss of representative sampling.

Technical evaluators should compare probe design, sample extraction location, heated lines, pressure regulation, filtration stages, moisture removal method, materials of construction, and flow management.

The key challenge is preserving representativeness while making the sample measurable. Removing moisture, for example, may protect equipment but can also remove soluble target compounds or shift equilibrium.

Similarly, dropping temperature can condense heavy components and distort composition. Reactive gases may adsorb on tubing or filter surfaces if materials are chosen poorly.

Ask vendors to explain how the conditioning design prevents sample alteration. A credible supplier should discuss dew point margins, transport velocity, dead volume, corrosion resistance, and maintenance access in detail.
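The dew point margin check mentioned above can be sketched as a simple comparison of the coldest expected temperature in each part of the sample path against the sample dew point. Everything in this example is an assumption for illustration: the segment names, the temperatures, the 10 °C required margin, and the premise that no moisture has been removed upstream, so the same dew point applies along the whole path.

```python
def dew_point_margins(sample_dew_point_c, segment_min_temps_c, required_margin_c=10.0):
    """Map each segment to (margin in degC, True if the margin is sufficient).

    sample_dew_point_c must be the dew point at line pressure; the default
    required margin is a hypothetical project value, not a standard.
    """
    report = {}
    for name, min_temp_c in segment_min_temps_c.items():
        margin = min_temp_c - sample_dew_point_c
        report[name] = (margin, margin >= required_margin_c)
    return report


# Invented worst-case (coldest) temperatures along a hot, wet sample path.
segments = {
    "probe": 180.0,
    "heated_line": 120.0,
    "filter_housing": 95.0,
    "analyzer_inlet": 60.0,
}
report = dew_point_margins(sample_dew_point_c=90.0, segment_min_temps_c=segments)
at_risk = [name for name, (margin, ok) in report.items() if not ok]
print(at_risk)
```

Segments flagged this way are where condensation, and with it sample alteration, is most likely, so they are the places to question the vendor's heating and insulation design.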

For dirty or wet streams, also compare how each design handles fouling. Automatic blowback, self-cleaning probes, bypass loops, and modular filters may significantly reduce downtime and operator intervention.

Hazardous area compliance and installation constraints must be evaluated early

Many technically sound analyzer proposals fail late because they do not fit the site classification, utility availability, enclosure requirements, or mounting space. These constraints should be screened at the beginning.

Review whether the installation area requires explosion-proof, flameproof, purged, intrinsically safe, or other compliant configurations. Certification gaps can create delays, redesign costs, and approval issues.

Also compare power requirements, shelter needs, ambient temperature tolerance, analyzer house ventilation, calibration gas storage needs, and interface compatibility with the plant control system.

If the stream is extracted from a hazardous or high-pressure process, probe isolation, fail-safe valves, relief design, and maintenance isolation procedures become part of the evaluation, not optional extras.

Evaluate lifecycle maintenance, not just purchase price

Technical evaluators usually know that low purchase price does not always mean low total cost. In custom gas analysis, maintenance frequency and skill requirements can dominate lifecycle economics.

Compare calibration intervals, consumables, sensor replacement frequency, carrier or purge gas demand, filter service needs, and required cleaning procedures. Ask what can be maintained online and what requires shutdown.

It is also useful to review mean time between failures, expected wear components, remote diagnostics capability, spare parts availability, and local service support. These factors directly affect uptime.

A more expensive design may still be the better option if it reduces manual intervention, avoids false readings, and improves reliability in harsh service. For most industrial users, dependable data has higher value than theoretical savings.

Make vendors state the assumed maintenance environment. A design suitable for a staffed laboratory may not be realistic for an outdoor remote installation with limited technician access.
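A simple lifecycle comparison makes the trade-off concrete. The sketch below adds purchase price to yearly consumables, service labor, and downtime cost over a fixed horizon. All figures, option names, and the cost model itself are hypothetical, and discounting is deliberately left out to keep the arithmetic visible.

```python
def lifecycle_cost(purchase, consumables_per_year, service_hours_per_year,
                   labor_rate, downtime_hours_per_year, downtime_cost_per_hour,
                   years):
    """Undiscounted total cost of ownership over the given number of years."""
    annual = (consumables_per_year
              + service_hours_per_year * labor_rate
              + downtime_hours_per_year * downtime_cost_per_hour)
    return purchase + years * annual


# Invented numbers for two hypothetical proposals over ten years.
low_price_option = lifecycle_cost(
    purchase=40_000, consumables_per_year=6_000, service_hours_per_year=120,
    labor_rate=90, downtime_hours_per_year=24, downtime_cost_per_hour=500, years=10)
robust_option = lifecycle_cost(
    purchase=95_000, consumables_per_year=2_500, service_hours_per_year=30,
    labor_rate=90, downtime_hours_per_year=4, downtime_cost_per_hour=500, years=10)

print(low_price_option, robust_option)
```

With these assumed inputs the design that costs more than twice as much to buy ends up at roughly half the ten-year cost. The result depends entirely on the inputs, so the maintenance hours and downtime figures should come from comparable reference installations rather than brochure values.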

Ask better vendor questions to reveal hidden risk

Good comparison work depends on good questions. Instead of asking only for analyzer specifications, ask vendors to describe the full measurement chain and identify the dominant failure modes for your stream.

Request examples from comparable applications, including stream composition, contamination load, operating pressure, ambient conditions, and achieved maintenance intervals. Similar references are more useful than broad market claims.

Ask where measurement error is most likely to originate: probe, transport line, conditioning panel, analyzer drift, calibration strategy, or software compensation. Mature suppliers answer this clearly.

You should also ask what assumptions the proposal depends on. For example, does stated performance require a stable dew point, clean sample, shelter installation, frequent calibration, or operator attention beyond your available resources?

Another useful question is what happens during upset conditions. Can the system recover automatically after condensate, pressure shocks, particulate loading, or concentration spikes, or will manual service be required?

Build a comparison matrix that reflects real project priorities

A disciplined matrix helps prevent decisions based on one attractive feature. Score each custom gas analysis option across process compatibility, selectivity, full-system response, conditioning robustness, safety compliance, maintenance burden, and support quality.

Weight the criteria according to application purpose. A compliance application may prioritize traceability and auditability. A process-control application may prioritize uptime and response speed. A hazardous unit may prioritize safety architecture.

Include commercial factors, but keep them in context. Capital cost, lead time, and vendor support matter, yet they should not outweigh technical fit for a difficult stream.

It is often helpful to separate mandatory requirements from scored preferences. If a solution cannot maintain representative sampling or lacks required certification, it should be screened out before detailed scoring.

This method gives technical evaluators a defensible basis for recommendation. It also makes cross-functional review easier when operations, engineering, EHS, procurement, and management all have different concerns.
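The matrix approach described in this section can be sketched in a few lines: screen out any option that fails a mandatory requirement, then rank the rest by weighted score. The weights, the 1 to 5 scoring scale, the option names, and the scores below are all invented for illustration, and a real project would set its own criteria and weighting.

```python
# Hypothetical weights for a process-control application (they sum to 1.0).
WEIGHTS = {
    "process_compatibility": 0.20,
    "selectivity": 0.15,
    "full_system_response": 0.15,
    "conditioning_robustness": 0.20,
    "safety_compliance": 0.10,
    "maintenance_burden": 0.10,
    "support_quality": 0.10,
}
MANDATORY = ["representative_sampling", "area_certification"]


def weighted_score(scores, weights):
    """Weighted average of 1-5 scores, normalized by the total weight."""
    total_weight = sum(weights.values())
    return sum(weights[c] * scores[c] for c in weights) / total_weight


def rank_options(options, weights, mandatory):
    """Drop options failing any mandatory item, then sort by weighted score."""
    passed = [o for o in options
              if all(o["mandatory"].get(m, False) for m in mandatory)]
    scored = [(o["name"], weighted_score(o["scores"], weights)) for o in passed]
    return sorted(scored, key=lambda pair: pair[1], reverse=True)


def scores(*values):
    """Pair positional scores with the criteria in WEIGHTS order."""
    return dict(zip(WEIGHTS, values))


options = [
    {"name": "Option A", "scores": scores(4, 5, 2, 4, 4, 3, 4),
     "mandatory": {"representative_sampling": True, "area_certification": True}},
    {"name": "Option B", "scores": scores(4, 4, 5, 3, 4, 4, 3),
     "mandatory": {"representative_sampling": True, "area_certification": True}},
    {"name": "Option C", "scores": scores(5, 5, 5, 5, 5, 5, 5),
     "mandatory": {"representative_sampling": True, "area_certification": False}},
]

ranking = rank_options(options, WEIGHTS, MANDATORY)
for name, score in ranking:
    print(f"{name}: {score:.2f}")
```

Option C has the highest raw scores in this made-up data but never reaches the ranking because it lacks the required certification, which is the point of screening mandatory items before scoring preferences.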

Common mistakes when comparing custom gas analysis solutions

One common mistake is comparing analyzer modules without comparing the sample system. Another is assuming a successful application in one plant will transfer directly to a different process matrix.

Some teams overvalue very low detection limits that are irrelevant to control decisions, while undervaluing response delay, fouling resistance, or calibration stability that affect daily operation much more.

Another frequent issue is accepting broad accuracy claims without clarifying basis conditions, interference effects, or system-level uncertainty. This can lead to unrealistic expectations after commissioning.

It is also risky to treat maintenance as a secondary topic. In complex streams, service access, technician workload, and contamination management should be central comparison criteria from the start.

How to reach a confident selection decision

The best custom gas analysis choice is usually the one that matches the stream realistically, preserves sample integrity, meets the decision-making need, and remains maintainable in the actual site environment.

For technical evaluators, that means comparing complete systems rather than analyzer technologies in isolation. The strongest proposal is often the one with the clearest treatment of sample handling, failure modes, and lifecycle support.

If uncertainty remains, consider requesting a formal application review, sample characterization support, or pilot validation for the most difficult streams. Upfront evaluation effort is usually cheaper than field redesign later.

In short, the right way to compare custom gas analysis options is systematic and application-driven. Define the stream, match the principle, verify conditioning, confirm compliance, and judge long-term operability before price becomes decisive.

When those elements are evaluated together, you can select a solution that delivers not only measured numbers, but reliable information the process can actually trust.
