Why Monitoring System Data Looks Fine but Misses Risk

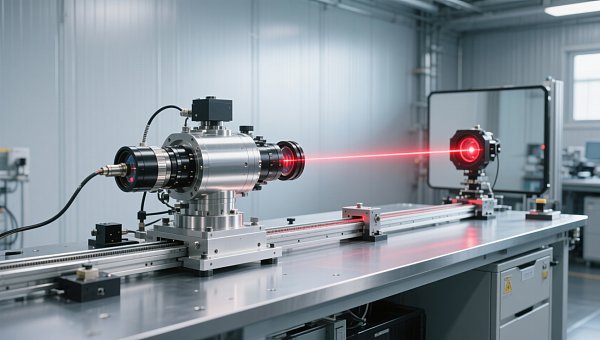

A monitoring dashboard can look perfectly normal while real risk is already building in the process. That is not a contradiction; it is a common failure mode in industrial environments. A safety control analyzer, emission control analyzer, or process monitoring analyzer may still display acceptable values even when sensor drift, sampling problems, enclosure leakage, calibration gaps, or slow process changes are reducing reliability. For operators, engineers, buyers, and decision-makers, the key lesson is simple: “normal data” does not always mean “low risk.” The real question is whether the monitoring system is sensitive, verified, and contextualized enough to detect early deviation before it becomes a safety, quality, compliance, or cost problem.

Why “normal readings” can still hide serious operational risk

Many teams assume that if industrial analysis equipment is online, stable, and reporting values within thresholds, then the process is under control. In practice, this assumption is often too optimistic. Monitoring systems do not show “truth” directly; they show measured signals shaped by sensors, sampling paths, analyzers, logic rules, and operating conditions. If any of those layers is compromised, the displayed data may look fine while the actual process condition is moving in the wrong direction.

This issue is especially important in gas analysis equipment and industrial online monitoring systems used for emissions, combustion control, process safety, environmental compliance, and quality assurance. A reading can remain stable because the instrument is stable, not because the process is healthy. That difference matters.

The practical questions are why monitoring systems fail to warn early, how to identify blind spots, and which criteria to use when evaluating equipment, maintenance practice, or monitoring strategy.

What risks matter most to operators, engineers, buyers, and decision-makers

Different stakeholders look at the same monitoring system from different angles, but their concerns are connected.

Operators and users care about whether the analyzer reflects real process conditions, whether alarms are trustworthy, and how to avoid being blamed for “missing” a problem that the system never clearly showed.

Technical evaluators and quality or safety managers focus on measurement integrity, calibration stability, response time, sampling reliability, enclosure protection, analyzer suitability, and whether the data is defensible during audits or investigations.

Procurement teams, finance approvers, and business evaluators want to know whether a lower-cost instrument creates hidden lifecycle cost through false confidence, noncompliance, maintenance burden, downtime, or poor decision support.

Project managers and enterprise decision-makers are usually asking a broader question: can this monitoring architecture reduce operational risk, support compliance, and improve decisions without creating excessive maintenance complexity?

That is why a generic description of analyzers is of limited use to any of these groups. What helps is a practical explanation of failure mechanisms, warning signs, and evaluation criteria.

How monitoring systems miss early warnings even when the analyzer appears healthy

There are several common reasons a process monitoring analyzer or emission control analyzer can look normal while risk accumulates.

Sensor drift

Sensors can drift gradually over time due to aging, contamination, thermal stress, or exposure to harsh media. Because drift often happens slowly, daily operators may not notice it. The instrument still responds, trends still look smooth, and values may remain inside acceptable limits, but the reference point has shifted. That means the system is consistently wrong in a believable way.

Sampling system problems

In many gas analysis equipment applications, the biggest weakness is not the analyzer core but the sample path. Blockages, condensation, leaks, dead volume, pump wear, filter saturation, or incorrect sample conditioning can distort the sample before it reaches the analyzer. The analyzer may function correctly, but it is analyzing a compromised sample.

Threshold-based logic that is too simple

Many systems rely on fixed alarm limits. But risk does not always begin when a value crosses a line. Slow upward drift, unusual variability, lagging response, cross-parameter inconsistency, or repeated short deviations may indicate trouble earlier than a standard high or low alarm.
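As a minimal sketch of why a fixed limit can stay silent during slow drift, the Python example below compares a plain high-limit check with a least-squares trend check over a recent window. The limit, slope threshold, window length, and readings are all invented for illustration; real values would have to come from the process and the instrument.

```python
from statistics import mean

HIGH_LIMIT = 50.0            # hypothetical fixed alarm limit (e.g. ppm)
MAX_SLOPE_PER_SAMPLE = 0.2   # hypothetical allowed change per sample

def slope(values):
    """Least-squares slope of values against their sample index."""
    n = len(values)
    x_mean = (n - 1) / 2
    y_mean = mean(values)
    num = sum((i - x_mean) * (v - y_mean) for i, v in enumerate(values))
    den = sum((i - x_mean) ** 2 for i in range(n))
    return num / den

def evaluate(values, window=10):
    """Return both alarm states for the most recent window of readings."""
    recent = values[-window:]
    return {
        "limit_alarm": recent[-1] > HIGH_LIMIT,
        "trend_alarm": abs(slope(recent)) > MAX_SLOPE_PER_SAMPLE,
    }

# A slow ramp that is still well below the fixed limit.
readings = [30.0 + 0.5 * i for i in range(20)]
result = evaluate(readings)
print(result)
```

With these illustrative numbers the limit alarm stays off while the trend check fires, which is the situation the paragraph above describes.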

Wrong analyzer selection for the application

A safety control analyzer designed for one gas range, humidity condition, or process dynamic may not be suitable for another. If the measurement principle, detection range, response characteristics, or material compatibility do not match the operating environment, the system may look reliable while underperforming where it matters most.

Enclosure and environmental issues

Heat, vibration, dust, moisture ingress, corrosive atmosphere, and poor panel design can degrade industrial analysis equipment long before complete failure appears. Enclosure problems are especially dangerous because they often create intermittent or gradual measurement errors rather than obvious instrument shutdowns.

Calibration routines that confirm too little

A calibration check may verify one point under controlled conditions, yet miss nonlinear response, dynamic error, or application-specific interference. A system can “pass calibration” and still fail to provide reliable early warning in real operation.

Why hidden monitoring risk becomes a business problem, not just a technical one

When monitoring data misses risk, the consequences go beyond instrumentation performance.

Safety exposure increases. In critical processes, delayed detection can allow combustible, toxic, pressure-related, or thermal risk to develop too far before intervention.

Compliance risk grows quietly. In environmental and emissions applications, incorrect readings may lead to underreporting, late corrective action, failed audits, or regulatory penalties. A stable-looking emission control analyzer is valuable only if the measured data remains representative and traceable.

Product quality can drift off target. In manufacturing and laboratory-related operations, small undetected deviations in flow, composition, temperature, or process condition may create batch inconsistency, scrap, or customer complaints.

Operating decisions become weaker. If managers and engineers trust incomplete data, they may delay maintenance, optimize against the wrong baseline, or approve process changes based on false confidence.

Total cost rises. The financial impact often appears later in the form of downtime, repeat testing, emergency service, energy waste, excess consumables, nonconformance handling, and shortened equipment life.

For finance and procurement stakeholders, this is a key point: the cheapest monitoring solution may carry the highest total cost if it fails to surface risk early.

What to check when you suspect the data looks better than reality

If a monitoring system appears stable but field conditions suggest otherwise, teams should review the full measurement chain rather than only the displayed value.

Compare analyzer data with process behavior. Do instrument readings match what operators observe in production quality, combustion behavior, emissions trends, equipment condition, or energy performance? If not, the issue may be hidden in the measurement system.

Review drift history, not just the latest calibration status. A single successful check says less than a trend of repeated adjustments over time. Frequent correction is a warning signal even if the system remains “in tolerance.”
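One way to review drift history, sketched here in Python under stated assumptions, is to look at the as-found error recorded before each calibration adjustment. The error values and tolerance are hypothetical, and the `review` helper is illustrative rather than part of any particular analyzer's software.

```python
# Hypothetical as-found errors (% of span) at successive calibration checks,
# recorded before any adjustment was made.
as_found = [0.3, 0.4, 0.6, 0.7, 0.9]
TOLERANCE = 1.0  # hypothetical pass/fail tolerance, % of span

def review(errors, tolerance):
    """Summarize whether passing checks still show a consistent drift pattern."""
    all_pass = all(abs(e) <= tolerance for e in errors)
    one_directional = all(e > 0 for e in errors) or all(e < 0 for e in errors)
    growing = all(abs(b) > abs(a) for a, b in zip(errors, errors[1:]))
    return {
        "all_pass": all_pass,
        "one_directional": one_directional,
        "growing": growing,
    }

summary = review(as_found, TOLERANCE)
print(summary)
```

Every check in this invented history passes, yet the errors share one sign and grow at each visit, which is the kind of pattern a pass/fail status alone would hide.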

Inspect the sample handling system. Filters, probes, lines, pumps, dryers, coolers, and regulators deserve as much attention as the analyzer module itself.

Check response time under real operating change. An analyzer that is accurate in static conditions may respond too slowly during process transition, where early warning is most needed.
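Response time can be estimated from a step test, for example by switching from zero gas to a known test gas and logging the reading. The sketch below computes the time to reach 90% of the step (often called T90). It assumes the step is applied at the first timestamp; the sample data and the `t90` helper are illustrative.

```python
def t90(times, readings, start_value, final_value):
    """Time from the first sample until the reading covers 90% of the step.

    Assumes the step change is applied at times[0].
    Returns None if the 90% level is never reached in the data.
    """
    target = start_value + 0.9 * (final_value - start_value)
    rising = final_value >= start_value
    for t, r in zip(times, readings):
        reached = r >= target if rising else r <= target
        if reached:
            return t - times[0]
    return None

# Hypothetical step test: seconds since the gas switch, and the logged reading.
times = [0, 5, 10, 15, 20, 25, 30]
readings = [0, 40, 65, 80, 89, 94, 98]

response_seconds = t90(times, readings, start_value=0, final_value=100)
print(response_seconds)
```

Repeating the same test over months and comparing the results can show whether the sample path or sensor is slowing down, even when static accuracy still looks acceptable.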

Look for cross-variable inconsistency. If flow, pressure, temperature, composition, or emissions values do not make sense together, the problem may be measurement integrity rather than process instability alone.

Evaluate enclosure and installation conditions. Panel temperature, sealing, ventilation, cable routing, vibration isolation, and contamination control can all affect reliability.

Ask whether alarm logic detects patterns, not only limits. Rate of change, drift rate, mismatch detection, signal quality indicators, and analyzer health diagnostics can reveal earlier warning than basic threshold alarms.
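A simple signal quality indicator is a frozen-signal check: a reading that shows less variation than the process normally produces may indicate a blocked sample line or a stuck output rather than a calm process. The Python sketch below assumes a hypothetical minimum expected noise level and window length, both of which would need to be derived from healthy historical data.

```python
from statistics import pstdev

MIN_EXPECTED_NOISE = 0.05  # hypothetical: smallest std. dev. seen in healthy data

def looks_frozen(values, window=10, min_noise=MIN_EXPECTED_NOISE):
    """Flag a signal whose recent variation is below the expected noise floor."""
    recent = values[-window:]
    if len(recent) < window:
        return False  # not enough data to judge
    return pstdev(recent) < min_noise

live = [20.1, 19.8, 20.3, 20.0, 19.7, 20.2, 20.4, 19.9, 20.1, 20.0]
stuck = [20.0] * 10

print(looks_frozen(live), looks_frozen(stuck))
```

Both series sit inside the same limits, so a threshold alarm treats them identically; only the variation check separates them.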

How to evaluate industrial analysis equipment beyond headline specifications

When selecting or upgrading industrial analysis equipment, buyers and technical evaluators should go beyond nominal accuracy claims.

Application fit matters more than brochure precision. The right question is not only “How accurate is it?” but “How accurate is it in this process, with this sample, under these environmental conditions, across this maintenance interval?”

System design should support representative measurement. For gas analysis equipment especially, probe location, transport distance, conditioning design, and anti-condensation strategy can be as important as analyzer technology.

Maintainability affects real reliability. If routine verification is difficult, filters are hard to replace, or diagnostics are unclear, performance will degrade faster in real operations.

Diagnostics should be actionable. Good systems do not only display values; they indicate drift tendencies, maintenance needs, sample faults, abnormal operating conditions, and confidence status.

Compliance support should be built in. In regulated applications, audit trails, traceability, calibration records, and data integrity features are not optional extras.

Lifecycle cost should be transparent. Consumables, recalibration frequency, service requirements, spare part availability, and expected downtime risk should all be evaluated alongside purchase price.
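To make the lifecycle comparison concrete, the sketch below adds purchase price to recurring costs over a chosen period. Every figure is invented for illustration, and the `Option` fields are a simplified, hypothetical cost model; a real evaluation would also consider items such as spare parts and recalibration labor.

```python
from dataclasses import dataclass

@dataclass
class Option:
    name: str
    purchase: float
    annual_consumables: float
    annual_service: float
    downtime_hours_per_year: float  # expected, not guaranteed

def lifecycle_cost(opt, years, downtime_cost_per_hour):
    """Purchase price plus recurring costs over the evaluation period."""
    annual = (
        opt.annual_consumables
        + opt.annual_service
        + opt.downtime_hours_per_year * downtime_cost_per_hour
    )
    return opt.purchase + years * annual

# Illustrative figures only.
low_cost = Option("low-cost", 20000, 3000, 4000, 12)
higher_spec = Option("higher-spec", 35000, 2000, 2000, 3)

for opt in (low_cost, higher_spec):
    print(opt.name, lifecycle_cost(opt, years=5, downtime_cost_per_hour=1000))
```

In this made-up case the unit with the lower purchase price costs more over five years, because expected downtime dominates the total.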

Practical ways to reduce blind spots in monitoring systems

Most hidden-risk problems can be reduced through a combination of better design, better verification, and better interpretation.

Use layered verification. Do not rely on one reading alone. Cross-check critical measurements with process indicators, periodic reference tests, or redundant sensing where justified.
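Where a second measurement exists, such as a redundant sensor or periodic reference tests, a basic cross-check is to count how often the two disagree by more than an agreed tolerance. The values, tolerance, and `mismatch_fraction` helper below are hypothetical and serve only to show the idea.

```python
def mismatch_fraction(primary, reference, tolerance):
    """Fraction of paired samples that differ by more than the tolerance."""
    pairs = list(zip(primary, reference))
    if not pairs:
        raise ValueError("no paired samples to compare")
    bad = sum(1 for p, r in pairs if abs(p - r) > tolerance)
    return bad / len(pairs)

# Hypothetical paired readings taken at the same times.
primary = [10.0, 10.1, 10.0, 10.2, 10.1]     # online analyzer, looks stable
reference = [10.1, 10.4, 10.9, 11.3, 11.8]   # reference tests, rising

fraction = mismatch_fraction(primary, reference, tolerance=0.5)
print(fraction)
```

A rising mismatch fraction does not say which measurement is wrong, but it does show that the stable-looking primary reading should not be accepted without investigation.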

Strengthen preventive maintenance around the weak points. In many systems, the vulnerable areas are sampling components, seals, environmental protection, and calibration discipline rather than the analyzer engine itself.

Adopt trend-based review. Teams should analyze drift, response degradation, service frequency, and recurrent deviations over time instead of waiting for hard failure.

Upgrade alarm philosophy. Add logic for signal plausibility, rate-of-change alarms, analyzer status alarms, and maintenance alerts.

Match enclosure design to field reality. Industrial environments often require more attention to thermal control, corrosion resistance, ingress protection, and vibration management than initially expected.

Train users to question “normal.” Operators and engineers should know that a smooth number on screen is not automatic proof of a healthy process. Good training improves early escalation and avoids overtrust in the interface.

When a monitoring upgrade is justified

An upgrade is usually justified when one or more of the following conditions are present: repeated unexplained deviation between analyzer data and field reality, frequent recalibration correction, high maintenance effort in the sample system, compliance pressure, expansion into more critical process control, or decision-making that increasingly depends on analyzer data.

For management teams, the strongest justification is often not higher measurement performance alone, but reduced uncertainty. A better safety control analyzer or process monitoring analyzer creates value when it improves trust in operational decisions, lowers the chance of unnoticed deviation, and strengthens evidence for safety and compliance management.

Conclusion

If monitoring system data looks fine but risk is still being missed, the problem is rarely just “bad luck.” It is usually a gap between displayed measurement and actual process reality. Sensor drift, sampling errors, enclosure issues, weak alarm logic, and incomplete verification can all make industrial analysis equipment appear reliable while early warnings go undetected.

For operators, engineers, procurement teams, and business leaders, the right response is not to trust data less, but to demand better measurement integrity. The most effective monitoring systems are not the ones that merely stay online; they are the ones that reveal risk early enough to support safer operations, stronger compliance, and better business decisions.
