Laboratory Detector Accuracy Standards You Should Check

For quality control and safety teams, verifying laboratory detector performance is not just a compliance task—it directly affects test reliability, workplace safety, and audit readiness. Before selecting or approving any laboratory detector, you should review the key accuracy standards that define detection limits, calibration stability, response consistency, and real-world measurement confidence. Understanding these checkpoints helps reduce risk, improve data credibility, and support better operational decisions.

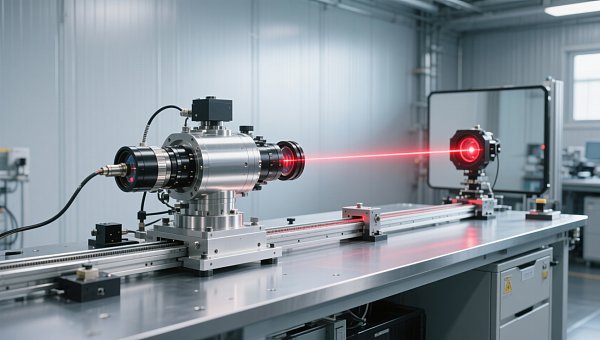

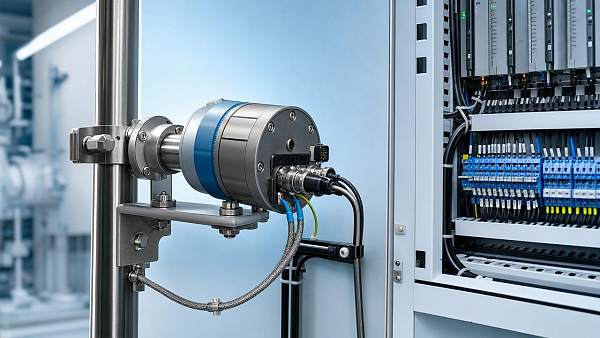

In the instrumentation industry, a laboratory detector is rarely used in isolation. It often sits inside a broader measurement chain that includes sample preparation, environmental control, calibration references, data logging, alarms, and quality documentation. That is why accuracy should be assessed as a system requirement rather than a single number on a product sheet.

For buyers in quality assurance, EHS, industrial testing, medical labs, environmental analysis, and process control support functions, the practical question is simple: which accuracy standards truly matter before approval? The answer usually involves at least 6 checkpoints (detection limit, repeatability, linearity, calibration interval stability, response time consistency, and environmental tolerance), plus documented traceability and service support.

Why Accuracy Standards Matter in Laboratory Detector Selection

A laboratory detector may be used to identify gases, particles, chemical concentrations, radiation, biological markers, or process-related contaminants. Across these applications, poor accuracy creates 3 immediate risks: false acceptance of bad material, false alarms that interrupt operations, and audit findings caused by inconsistent test records.

In regulated or semi-regulated environments, even a small shift such as ±1% to ±2% of reading can change a pass/fail decision when the result sits close to the limit. In safety-critical scenarios, a response delayed by 10 to 30 seconds may reduce the usefulness of an alarm event. For this reason, quality and safety managers should examine performance under both laboratory conditions and real-use conditions before approving any laboratory detector.
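To see how a small accuracy band can flip a decision, consider the minimal sketch below. It assumes the accuracy is quoted as a percentage of reading and that the criterion is an upper limit. The function name `classify_result` and the example values are hypothetical and are not taken from any standard.

```python
def classify_result(reading, upper_limit, accuracy_pct):
    """Classify a reading against an upper limit, given +/- accuracy as % of reading."""
    margin = abs(reading) * accuracy_pct / 100.0
    low, high = reading - margin, reading + margin
    if high <= upper_limit:
        return "pass"           # whole accuracy band is at or below the limit
    if low > upper_limit:
        return "fail"           # whole accuracy band is above the limit
    return "indeterminate"      # band straddles the limit: retest or escalate


# Hypothetical readings against a limit of 10.0 with +/-2% of reading
for value in (9.5, 9.9, 10.5):
    print(value, classify_result(value, upper_limit=10.0, accuracy_pct=2.0))
```

A reading of 9.9 looks like a pass on its own, but with ±2% of reading its band extends past 10.0, so the sketch flags it as indeterminate rather than accepting it.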

The Difference Between Stated Accuracy and Usable Accuracy

Many technical sheets present accuracy under ideal conditions, often at 20°C to 25°C, stable humidity, and a narrow measurement range. Usable accuracy is different. It reflects how the laboratory detector performs across normal shifts, operator changes, sample variability, and calibration intervals that may extend to 30, 90, or 180 days depending on site policy.

A detector that looks excellent on paper may drift when exposed to vibration, dust, unstable power supply, or mixed analytes. For industrial laboratories tied to manufacturing, energy, water treatment, and environmental monitoring, this gap between brochure accuracy and in-field accuracy is one of the most common approval failures.

Accuracy as a Purchasing and Risk-Control Metric

From a procurement perspective, accuracy standards affect more than technical compliance. They also affect recalibration cost, spare sensor consumption, downtime frequency, and audit workload. A cheaper laboratory detector that requires monthly intervention may be less economical than a more stable unit with a 6-month or 12-month verification cycle. In practice, stable accuracy tends to deliver:

- Lower retest rates and fewer disputed results

- More reliable release decisions for incoming, in-process, or final inspection

- Stronger evidence for internal audits and third-party inspections

- Better alarm confidence in hazardous or contamination-sensitive zones

The Core Accuracy Standards You Should Check First

Before approving a laboratory detector, review the core technical indicators in a structured way. The table below summarizes 6 key standards that quality control and safety teams commonly use when comparing detector options across industrial and laboratory applications.

The key conclusion is that no single value defines a good laboratory detector. A unit with an excellent detection limit but poor drift stability may create more rework than a slightly less sensitive detector with stronger repeatability and lower maintenance demand.

Detection Limit and Quantitation Threshold

Detection limit tells you how low the signal can go before the detector can no longer distinguish analyte from noise. For quality and safety teams, this matters when specifications are tight or contamination events start at very low levels. If the site action threshold is 1 ppm, a laboratory detector that only performs reliably above 2 ppm may be unsuitable regardless of advertised sensitivity.

Also ask for the quantitation threshold, not just the detection threshold. Being able to “see” a signal is not the same as reporting it with confidence. In practical approval work, these 2 numbers should be tied to your alarm point, release criterion, or environmental limit.
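One widely used convention (for example, in ICH Q2 method validation) estimates the detection limit as 3.3σ/S and the quantitation limit as 10σ/S, where σ is the standard deviation of blank readings and S is the calibration slope. The sketch below assumes that convention applies to your detector; your supplier or method may define these limits differently. The blank readings, the slope, and the function names are hypothetical.

```python
from statistics import stdev


def detection_limits(blank_readings, slope, k_lod=3.3, k_loq=10.0):
    """Estimate (LOD, LOQ) in concentration units from blank noise and slope.

    Assumes the 3.3*sigma/S and 10*sigma/S convention; other definitions exist.
    """
    sigma = stdev(blank_readings)  # sample standard deviation of the blanks
    return k_lod * sigma / slope, k_loq * sigma / slope


def fits_action_limit(loq, action_limit):
    """True if the detector can quantify at or below the site action limit."""
    return loq <= action_limit


# Hypothetical data: blank signal readings and a slope in signal units per ppm
blank = [0.02, 0.05, 0.03, 0.04, 0.01, 0.03, 0.04]
lod, loq = detection_limits(blank, slope=0.25)
print(f"LOD ~ {lod:.2f} ppm, LOQ ~ {loq:.2f} ppm")
print("Suitable for a 1 ppm action limit:", fits_action_limit(loq, 1.0))
```

Comparing the quantitation limit, rather than the detection limit, against the action threshold keeps the check aligned with the point at which a value can actually be reported.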

Repeatability, Reproducibility, and Operator Influence

Repeatability is usually checked with the same operator, sample, and detector over a short time interval. Reproducibility expands the test to different operators, days, or sometimes locations. A robust laboratory detector should maintain acceptable variation in both conditions, especially when multiple technicians rotate across 2 or 3 shifts.

What to request during evaluation

- At least 5 repeated measurements at low, mid, and high points

- Operator-to-operator comparison if more than 2 users will handle the instrument

- Documented acceptance criteria, such as a defined percentage deviation or standard deviation limit
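The requests above can be turned into a simple calculation. The sketch below computes the relative standard deviation (%RSD) of repeated measurements at low, mid, and high points and compares each to an acceptance limit. The readings and the 1.7% limit are hypothetical; use the criteria defined in your own quality procedure.

```python
from statistics import mean, stdev


def percent_rsd(readings):
    """Relative standard deviation of repeated readings, in percent."""
    return 100.0 * stdev(readings) / mean(readings)


def repeatability_report(runs, max_rsd_pct):
    """Return {level: (rsd_pct, passed)} for each set of repeated readings."""
    report = {}
    for level, readings in runs.items():
        if len(readings) < 5:
            raise ValueError(f"{level}: need at least 5 repeated measurements")
        rsd = percent_rsd(readings)
        report[level] = (rsd, rsd <= max_rsd_pct)
    return report


# Hypothetical repeated measurements at three points of the range
runs = {
    "low": [1.02, 0.98, 1.01, 0.99, 1.00],
    "mid": [50.2, 49.9, 50.1, 49.8, 50.0],
    "high": [99.0, 101.5, 97.5, 102.0, 100.0],
}
report = repeatability_report(runs, max_rsd_pct=1.7)
for level, (rsd, passed) in report.items():
    print(f"{level}: RSD = {rsd:.2f}% -> {'pass' if passed else 'fail'}")
```

Running the same report on data from a second operator gives a quick operator-to-operator comparison using the same acceptance limit.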

Linearity Across the Intended Range

A laboratory detector may perform well at one reference point and still fail across its full range. For example, a detector calibrated near 20% of span may drift at 80% of span or produce compressed readings near the lower end. This is especially relevant in laboratories supporting industrial process troubleshooting, where concentrations may swing significantly during abnormal events.

A practical review should include at least 3 to 5 verification points distributed across the range. This gives a more realistic picture of whether the detector supports both routine testing and exception handling.
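A multi-point check of this kind can be summarized as the error at each verification point, expressed as a percentage of span. The sketch below assumes each point pairs a known reference value with the detector reading; the data are hypothetical and chosen to show a detector that agrees near 20% of span but under-reads toward the top of the range.

```python
def linearity_errors(points, span):
    """Return [(reference, error as % of span)] for (reference, reading) pairs."""
    return [(ref, 100.0 * (reading - ref) / span) for ref, reading in points]


def worst_case_error(points, span):
    """Largest absolute error across all verification points, as % of span."""
    return max(abs(err) for _, err in linearity_errors(points, span))


# Hypothetical 5-point verification over a 0 to 100 span
points = [(10, 10.1), (20, 20.0), (50, 50.4), (80, 78.8), (100, 98.5)]
for ref, err in linearity_errors(points, span=100.0):
    print(f"reference {ref:>3}: error {err:+.2f}% of span")
print(f"worst case: {worst_case_error(points, span=100.0):.2f}% of span")
```

A single-point check at 20 would report no error here, which is why the worst-case figure across the range is the more useful number for approval.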

Calibration, Drift, and Traceability Requirements

Calibration accuracy is only meaningful if it remains stable between service events. For many facilities, the hidden cost of a laboratory detector is not the initial purchase but the number of recalibrations, failed checks, out-of-tolerance findings, and undocumented adjustments that occur during a 12-month operating cycle.

How to Assess Calibration Stability

Ask how long the detector can maintain its stated accuracy under normal load. Common verification intervals are 30 days for highly sensitive use, 90 days for standard laboratory control, and 180 days or longer for stable low-drift systems. The right interval depends on analyte type, sample load, environmental stress, and internal quality policy.

Review whether the supplier distinguishes zero drift, span drift, and sensor aging. These are not interchangeable. A laboratory detector may hold zero well but lose span accuracy, leading to under-reporting at higher concentrations. For safety teams, that is a critical risk because alarms may appear “normal” during daily checks while missing true exposure levels.
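The difference between zero drift and span drift can be illustrated with a two-point check. The sketch below uses one common way to separate them, treating zero drift as the offset on a zero reference and span drift as the change in sensitivity after that offset is removed. This definition, the function name, and the readings are assumptions; your supplier may specify drift differently.

```python
def drift_components(zero_reading, span_reading, span_reference):
    """Split a zero/span check into zero drift (offset) and span drift (%)."""
    zero_drift = zero_reading  # reading on the zero reference; ideally 0
    sensitivity = (span_reading - zero_reading) / span_reference
    span_drift_pct = 100.0 * (sensitivity - 1.0)  # negative means under-reporting
    return zero_drift, span_drift_pct


# Hypothetical check: zero reference reads 0.1, a 50.0 span reference reads 46.1
zero_drift, span_drift_pct = drift_components(0.1, 46.1, 50.0)
print(f"zero drift: {zero_drift:+.2f} units, span drift: {span_drift_pct:+.1f}%")
```

In this example the zero check looks healthy while the detector under-reports by several percent at the span point, which is the situation a zero-only daily check would miss.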

Traceability and Documentation for Audits

Traceability means the detector’s calibration and verification chain can be linked to recognized reference standards through documented records. This is especially important when laboratory data support batch release, incident investigation, environmental reporting, or contractor safety controls.

At a minimum, maintain 4 types of records: calibration certificates, routine verification logs, maintenance history, and out-of-tolerance actions. A laboratory detector with strong technical performance but weak documentation can still create audit gaps.

The following table helps compare calibration and traceability factors that frequently influence approval decisions.

When calibration control is weak, even a technically advanced laboratory detector becomes difficult to defend in audits or incident reviews. Stable measurement plus traceable records is the standard that most B2B buyers should target.

Environmental and Operational Factors That Affect Real Accuracy

Accuracy does not live only inside the detector. It is influenced by ambient temperature, humidity, sample matrix effects, vibration, power quality, warm-up time, and cleaning condition. In integrated instrumentation environments, these factors often explain why one site achieves stable results while another site struggles with the same laboratory detector.

Temperature, Humidity, and Sample Matrix

Many detectors are specified within a limited environmental band, such as 15°C to 30°C or 20% to 80% relative humidity. Outside that band, sensitivity, baseline noise, or sensor response may change. If your laboratory supports outdoor sampling, utility areas, or mixed industrial workflows, this should be checked during qualification rather than after installation.

Sample matrix is another overlooked issue. A laboratory detector validated on a clean standard may behave differently with solvents, particulates, moisture, or cross-sensitive compounds. Quality teams should request interference information and verify it against their top 3 to 5 expected sample conditions.

Response Time and Alarm Confidence

For some uses, especially gas detection and safety monitoring support, response time matters almost as much as absolute accuracy. A detector with a T90 (the time taken to reach 90% of the final reading after a step change) of under 15 seconds may be suitable for active monitoring, while a unit taking 45 to 60 seconds may only fit slower analytical workflows. The key is alignment between detector behavior and operational risk.
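T90 can be estimated from a logged step-response test. The sketch below assumes the step is applied at the first timestamp, the signal rises toward a known final value, and linear interpolation between samples is acceptable. The function name and the logged data are hypothetical.

```python
def t90_seconds(times, readings, baseline, final):
    """Time at which a rising signal first reaches 90% of the step to `final`.

    Returns None if the target level is never reached in the log.
    """
    target = baseline + 0.9 * (final - baseline)
    if readings[0] >= target:
        return times[0]
    samples = list(zip(times, readings))
    for (t0, y0), (t1, y1) in zip(samples, samples[1:]):
        if y0 < target <= y1:
            # Linear interpolation between the two samples around the target
            return t0 + (target - y0) * (t1 - t0) / (y1 - y0)
    return None


# Hypothetical log: seconds since the step was applied, and detector readings
times = [0, 5, 10, 15, 20, 25]
readings = [0.0, 40.0, 70.0, 85.0, 95.0, 100.0]
t90 = t90_seconds(times, readings, baseline=0.0, final=100.0)
print(f"Estimated T90: {t90:.1f} s")
```

Repeating the test several times and comparing the resulting T90 values gives a simple view of response consistency, not just response speed.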

Alarm confidence also depends on consistency. If the same event produces different response times under similar conditions, operators may start distrusting the instrument. Once that happens, the laboratory detector becomes a procedural weakness rather than a control tool.

Common real-world checks before approval

- Warm-up time required before stable measurement, such as 5 to 20 minutes

- Performance after short power interruption or restart

- Behavior under expected dust or humidity exposure

- Cleaning frequency needed to maintain baseline stability

A Practical Approval Checklist for QC and Safety Teams

A good approval process should reduce ambiguity. Instead of relying on one demonstration or one certificate, use a structured checklist that combines technical verification, operational suitability, and documentation readiness. This approach is especially useful when multiple departments share decision authority.

Six Questions to Ask Before You Sign Off

- Does the laboratory detector meet the required detection threshold with enough margin for your action limit?

- Has repeatability been confirmed at low, mid, and high operating points?

- Is the stated accuracy valid across the full range or only one reference point?

- What drift is expected between calibration intervals of 30, 90, or 180 days?

- Are environmental limits compatible with your real laboratory or industrial support setting?

- Can the supplier provide service response, spare parts availability, and complete calibration records?

Common Approval Mistakes

One common mistake is approving a laboratory detector based only on nominal sensitivity. Another is overlooking consumable life, which can turn a technically strong instrument into a maintenance burden. Some teams also fail to define pass/fail criteria before the trial, making final approval subjective and difficult to defend.

A more reliable method is to run a short qualification plan over 7 to 14 days. Include repeated checks, one or two simulated upset conditions, and documentation review. This gives a better picture of day-to-day reliability than a single vendor demo.

When to Escalate to a Customized Solution

If your workflow includes mixed analytes, harsh ambient conditions, tight release thresholds, or integration with automation systems, a standard off-the-shelf laboratory detector may not be enough. In those cases, discuss customized sampling interfaces, enclosure protection, data output requirements, and maintenance planning before procurement is finalized.

This is particularly relevant in the broader instrumentation market, where laboratory analysis increasingly connects with digital monitoring, industrial online systems, and compliance reporting. Selecting a detector that fits future integration needs can reduce replacement risk over the next 2 to 5 years.

For quality control and safety managers, the right laboratory detector is not simply the most sensitive or the lowest-priced option. It is the one that delivers verified accuracy, stable calibration behavior, reliable response, and clear traceability under your actual operating conditions. If you are evaluating new detectors or upgrading an existing measurement workflow, contact us to discuss your application, request a tailored evaluation checklist, or learn more about instrumentation solutions built for dependable laboratory performance.
