How to Evaluate a High Accuracy Detector in the Field

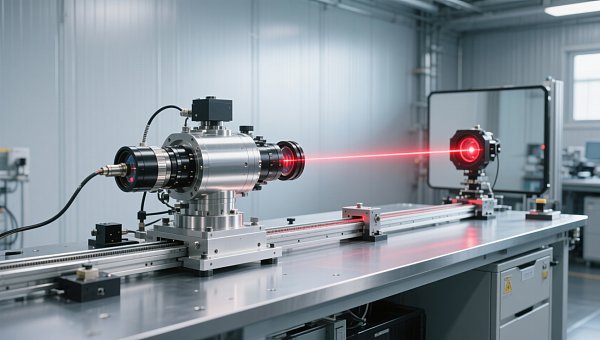

In field operations, choosing a high accuracy detector is not just about specifications on paper. It directly affects product quality, worker safety, and compliance outcomes. For quality control and safety management professionals, knowing how to assess real-world performance, stability, response speed, and environmental adaptability is essential. This guide explains the key factors to evaluate so you can select a reliable detector with confidence.

What should you verify first when evaluating a high accuracy detector?

A high accuracy detector is only valuable if its field performance matches its stated specification. In instrumentation-heavy environments such as manufacturing plants, power facilities, laboratories, environmental monitoring points, and automated production lines, the detector must maintain measurement integrity under vibration, dust, humidity, temperature variation, electromagnetic interference, and operator handling.

For quality control personnel, the main concern is whether readings stay consistent enough to support pass/fail decisions, calibration traceability, and process stability. For safety managers, the priority is whether the detector responds fast enough and accurately enough to prevent exposure, incidents, shutdowns, or non-compliance. These two goals often overlap, which is why field evaluation should go beyond catalog claims.

The first verification step is simple: define the actual measurement task. A detector used for spot checking in a warehouse is not evaluated the same way as one used for continuous industrial online monitoring, confined-space entry, laboratory screening, or process control verification.

- Identify the target substance or parameter, expected concentration or range, and acceptable error band.

- Clarify whether the detector is used for compliance, preventive safety, product quality inspection, or troubleshooting.

- Confirm whether the measurement is continuous, periodic, handheld, fixed-point, or integrated into automation control.

- Determine the environmental stresses that may change detector behavior in the field.

Without this context, buyers often select a high accuracy detector that looks impressive in the datasheet but performs poorly in the real operating window. The most common failure is not a broken instrument. It is a mismatch between instrument capability and site conditions.
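The task definition above can be written down as a small record and compared against a datasheet before any trial begins. The following is a minimal sketch, not a vendor tool: the `Envelope` fields, the `find_mismatches` helper, and the example numbers are all hypothetical and would need to be adapted to the parameter and site in question.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Envelope:
    """Range, error band, and ambient temperature limits (hypothetical fields)."""
    parameter: str
    range_min: float
    range_max: float
    error_band: float   # +/- error, in the same units as the range
    temp_min_c: float
    temp_max_c: float


def find_mismatches(task: Envelope, detector: Envelope) -> List[str]:
    """Return the ways a detector's stated envelope fails to cover the task."""
    problems = []
    if task.parameter != detector.parameter:
        problems.append("parameter")
    if detector.range_min > task.range_min or detector.range_max < task.range_max:
        problems.append("range")
    if detector.error_band > task.error_band:
        problems.append("error band")
    if detector.temp_min_c > task.temp_min_c or detector.temp_max_c < task.temp_max_c:
        problems.append("temperature")
    return problems


# Illustrative values only: the site needs -10 to 45 degC, the datasheet states 0 to 40 degC.
task = Envelope("CO", 0.0, 200.0, 5.0, -10.0, 45.0)
detector = Envelope("CO", 0.0, 500.0, 3.0, 0.0, 40.0)
print(find_mismatches(task, detector))  # ['temperature']
```

In this example the detector beats the task on range and error band yet still fails on operating temperature, which is the kind of datasheet-versus-site mismatch described above.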

Which field performance indicators matter most?

The most practical way to assess a high accuracy detector is to separate laboratory accuracy from field usability. Many instruments can achieve excellent results in stable calibration conditions. Fewer maintain dependable performance on a busy production floor, near motors and pumps, outdoors in changing weather, or across multiple shifts.

The table below summarizes the core indicators quality and safety teams should review before approval or procurement.

When reviewing these indicators, avoid relying on one-time demonstrations. A high accuracy detector should be observed over repeated tasks, preferably across multiple operators and environmental conditions. Consistency matters more than a single impressive test.

How to test repeatability on site

Repeatability is often more useful than headline accuracy for day-to-day control. If a detector provides slightly offset but highly consistent readings, the issue can often be corrected through calibration or compensation. If readings vary unpredictably, the instrument becomes risky for both quality assurance and safety response.

- Take at least three repeated measurements at the same test point using the same method.

- Repeat the test at different times of day if temperature or process load changes.

- Compare results with a calibrated reference instrument or known standard when available.

- Record operator name, ambient conditions, and sample path to identify variation sources.
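The repeated readings collected in the steps above can be summarized with a few basic statistics. The sketch below assumes the readings come from the same test point and method; the function name `repeatability_summary` and the sample values are illustrative, and the acceptance limits are left to the site's own procedure.

```python
import statistics


def repeatability_summary(readings, reference=None):
    """Summarize repeated readings taken at one test point.

    readings:  repeated measurements (at least three, as recommended above)
    reference: optional value from a calibrated reference or known standard
    """
    if len(readings) < 3:
        raise ValueError("need at least three repeated readings")
    mean = statistics.mean(readings)
    summary = {
        "mean": mean,
        "stdev": statistics.stdev(readings),      # sample standard deviation
        "spread": max(readings) - min(readings),  # worst-case disagreement
    }
    if reference is not None:
        summary["bias"] = mean - reference        # consistent offset vs reference
    return summary


# Illustrative numbers only.
result = repeatability_summary([10.1, 10.2, 10.3], reference=10.0)
print(result)
```

A small standard deviation with a stable bias points to an offset that calibration can usually correct. A large standard deviation points to the unpredictable variation that makes an instrument risky.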

How do application scenarios change detector selection?

In the instrumentation industry, a high accuracy detector may support industrial manufacturing, energy and power systems, environmental inspection, laboratory analysis, medical testing support, construction engineering, and automated monitoring networks. Each scenario creates a different evaluation standard.

The table below helps connect field conditions with practical selection logic instead of generic specification comparison.

This comparison shows why detector evaluation should be scenario-driven. A detector optimized for metrology support may be too delicate for construction commissioning. A rugged safety unit may be excellent for alarm response but not ideal for tight laboratory tolerances.

Typical pain points by user role

- Quality control teams often struggle with inconsistent readings between shifts, poor traceability, and unclear recalibration schedules.

- Safety managers often face alarm credibility issues, harsh environments, and pressure to meet compliance deadlines with limited downtime.

- Procurement teams often compare only purchase price and nominal accuracy, while ignoring lifecycle cost and field suitability.

What technical details are often overlooked during procurement?

A high accuracy detector should not be judged by accuracy percentage alone. In many projects, the real difference between acceptable and poor performance comes from secondary parameters that affect how the instrument behaves outside the calibration bench.

Key technical factors beyond basic accuracy

- Measurement range and resolution: high accuracy at one narrow point does not guarantee useful performance across the full operating span.

- Warm-up and stabilization time: field teams need to know how long the detector takes before readings can be trusted.

- Calibration method and interval: difficult or frequent calibration raises labor cost and increases risk of skipped maintenance.

- Sampling system design: tubing length, pump response, filters, and sample conditioning can change the effective response time.

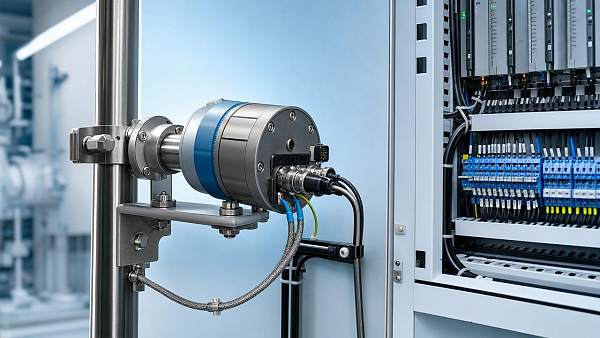

- Data output and integration: modern instrumentation often needs digital communication, logging, alarms, or interface compatibility with automation systems.

These details are especially important in industries moving toward digital transformation and intelligent upgrading. A detector that cannot integrate smoothly with data collection, remote supervision, or control workflows may create manual workarounds and hidden risk, even if its core sensing element performs well.
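To illustrate the sampling system point in the list above, the delay added by sample tubing can be roughly estimated from its internal volume and the pump flow rate. This is a simplified sketch that assumes ideal plug flow and ignores filters, dead volume, and adsorption on tubing walls, so real delays are usually longer. The function name and example dimensions are hypothetical.

```python
import math


def tubing_transport_delay_s(inner_diameter_mm, length_m, flow_l_per_min):
    """Estimate the time for a sample to travel through tubing (plug-flow assumption)."""
    if inner_diameter_mm <= 0 or length_m < 0 or flow_l_per_min <= 0:
        raise ValueError("diameter and flow must be positive, length non-negative")
    radius_m = inner_diameter_mm / 1000.0 / 2.0
    volume_m3 = math.pi * radius_m ** 2 * length_m
    volume_l = volume_m3 * 1000.0              # 1 m^3 = 1000 L
    return volume_l / flow_l_per_min * 60.0    # minutes -> seconds


# Illustrative case: 10 m of 4 mm inner-diameter tubing at 0.5 L/min.
delay = tubing_transport_delay_s(4.0, 10.0, 0.5)
print(f"{delay:.1f} s")  # 15.1 s
```

This transport delay adds to the sensor's own response time, so a detector with a fast datasheet response can still react slowly once it is installed behind a long sample line.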

Checklist for a short field trial

- Inspect startup behavior, self-check prompts, and operator guidance.

- Verify readings against a reference under at least two environmental conditions.

- Test alarm, response delay, and signal recovery after exposure ends.

- Review calibration access, maintenance steps, and replacement parts availability.

- Confirm data export, record retention, and compatibility with site documentation practices.
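The response delay and recovery checks in the list above can be quantified from a logged step test. The sketch below computes the time to reach a given fraction of the step, often reported as T90 for 90 percent. It assumes timestamped readings with time measured from the moment exposure starts or ends; the function name and the sample log are illustrative.

```python
def time_to_fraction(samples, baseline, final, fraction=0.9):
    """Return the first logged time at which the reading has covered
    `fraction` of the step from `baseline` to `final`, or None if never reached.

    samples: iterable of (time_s, reading) pairs in time order
    """
    threshold = baseline + fraction * (final - baseline)
    rising = final > baseline
    for time_s, reading in samples:
        reached = reading >= threshold if rising else reading <= threshold
        if reached:
            return time_s
    return None


# Illustrative logs: exposure to a 100-unit test level, then removal.
exposure = [(0, 0), (5, 40), (10, 75), (15, 92), (20, 99)]
recovery = [(0, 100), (5, 50), (10, 8), (15, 1)]

print(time_to_fraction(exposure, baseline=0, final=100))   # 15
print(time_to_fraction(recovery, baseline=100, final=0))   # 10
```

The result is only as fine as the logging interval, so a shorter sampling period gives a more useful figure when response time is critical.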

How can you compare options without focusing only on price?

Budget pressure is real, but the lowest purchase price rarely delivers the lowest operating cost. When a high accuracy detector is used for safety or critical quality checks, false alarms, sensor drift, delayed readings, and frequent maintenance can cost more than the instrument itself.

Use a lifecycle view during comparison. The matrix below can help procurement, QA, and EHS teams align their decision.

This does not mean the more expensive high accuracy detector is always the right answer. It means the decision should be tied to risk exposure, verification workload, and the consequence of wrong readings. In low-risk periodic checks, a simpler instrument may be sufficient. In high-consequence operations, reliability usually justifies a stronger specification.
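The lifecycle view can be made concrete with a simple total cost of ownership calculation. This is a rough sketch under stated assumptions: costs are flat per year, there is no discounting, and every figure below is hypothetical rather than taken from any real product.

```python
def total_cost_of_ownership(purchase_price, annual_calibration,
                            annual_consumables, annual_downtime, years):
    """Sum purchase price and flat yearly costs over the service period."""
    if years < 0:
        raise ValueError("years must be non-negative")
    yearly = annual_calibration + annual_consumables + annual_downtime
    return purchase_price + years * yearly


# Hypothetical comparison over five years.
low_price_unit = total_cost_of_ownership(2000, 600, 300, 400, years=5)
higher_spec_unit = total_cost_of_ownership(3500, 250, 150, 100, years=5)

print(low_price_unit)    # 8500
print(higher_spec_unit)  # 6000
```

With these assumed figures the cheaper unit costs more over five years, but the result can reverse when usage is light or downtime is inexpensive, which is why the inputs should come from the site's own records.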

Which standards, documentation, and compliance points should be reviewed?

For quality control and safety management, documentation is part of performance. A high accuracy detector should be supported by clear calibration guidance, traceability information where applicable, operating instructions, maintenance requirements, and relevant conformity or safety documentation required by the target market or application.

- Check whether calibration procedures are clearly defined and practical for your team or service partner.

- Review whether the detector’s environmental limits match your installation location and duty cycle.

- Confirm required market access or safety compliance documents based on country, industry, and operating hazard.

- Ask how measurement records, alarms, and maintenance logs can be stored for audits and investigations.

In regulated or audited settings, the absence of clear documentation can turn an otherwise capable detector into a weak link. This is especially true for environmental reporting, laboratory support, and industrial safety programs where traceable decisions are required.

Common mistakes when evaluating a high accuracy detector

Mistake 1: treating laboratory accuracy as field accuracy

A detector can perform well in ideal conditions and still fail under vibration, unstable temperature, contaminated sampling paths, or mixed gases. Always test under realistic site conditions.

Mistake 2: ignoring operator workflow

If menu navigation, calibration steps, or alarm interpretation are unclear, field errors increase. Ease of use is not cosmetic. It directly affects reading quality and response quality.

Mistake 3: buying for the current task only

Instrumentation investments should also consider future automation, digital reporting, and broader monitoring needs. A detector that cannot scale may force early replacement.

Mistake 4: underestimating service and spare parts

A strong field detector still needs consumables, calibration support, and predictable lead times. Delayed service can interrupt safety routines and production schedules.

FAQ: practical questions from quality and safety teams

How do I know whether a high accuracy detector is suitable for harsh outdoor use?

Review operating temperature range, enclosure protection, resistance to moisture and dust, and stability under changing ambient conditions. Then validate those claims in a field trial. Outdoor suitability is not just about surviving exposure. It is about maintaining dependable readings during exposure.

What is more important: fast response or high accuracy?

It depends on the task. For emergency detection and worker protection, response speed may be the first priority as long as accuracy stays within acceptable limits. For product verification, laboratory support, or process optimization, repeatable accuracy often carries more weight. Many sites need both, so define the consequence of delay versus the consequence of measurement error.

How long should a field evaluation last?

A useful trial should cover enough operating variation to reveal drift, response behavior, and operator issues. For stable indoor processes, a few days may be enough. For outdoor or shift-based operations, longer observation often provides a more realistic picture. The key is to include normal disturbance factors, not just ideal moments.
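One way to judge whether a trial has run long enough to reveal drift is to fit a straight line through the daily offsets between the detector and a reference. The sketch below uses an ordinary least-squares slope. It assumes drift is roughly linear over the trial, which may not hold for every sensor type, and the function name and data are illustrative.

```python
def drift_per_day(days, offsets):
    """Least-squares slope of (detector reading - reference) against time in days."""
    n = len(days)
    if n < 2 or n != len(offsets):
        raise ValueError("need at least two paired observations")
    mean_x = sum(days) / n
    mean_y = sum(offsets) / n
    sxx = sum((x - mean_x) ** 2 for x in days)
    if sxx == 0:
        raise ValueError("observations must span more than one point in time")
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(days, offsets))
    return sxy / sxx


# Illustrative trial: the offset grows by about 0.1 units per day.
slope = drift_per_day([0, 1, 2, 3, 4], [0.0, 0.1, 0.2, 0.3, 0.4])
print(round(slope, 3))  # 0.1
```

If the slope is still changing as more days are added, the trial probably has not yet covered enough operating variation.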

Can one high accuracy detector cover multiple departments?

Sometimes, but only if the measurement range, environmental tolerance, data handling, and compliance requirements align. A shared device can reduce cost, yet it may create scheduling conflicts, wear, and inconsistent setup practices. Multi-department use should be planned, not assumed.

Why contact us for detector selection and field evaluation support?

Selecting a high accuracy detector for modern instrumentation applications requires more than comparing brochure values. It involves matching sensing performance, environmental resilience, calibration logic, workflow fit, and compliance expectations across manufacturing, energy, environmental monitoring, laboratory support, construction, and automation scenarios.

We can support your evaluation process with practical discussions focused on the questions that matter most to quality control and safety management teams.

- Parameter confirmation for range, sensitivity, repeatability, response time, and environmental limits

- Product selection guidance based on process conditions, usage frequency, and risk level

- Delivery timeline discussion for urgent projects, shutdown windows, or phased implementation

- Custom solution discussion for integration, monitoring points, or special operating conditions

- Certification and documentation review based on your market or application requirements

- Sample support or trial planning where a practical field assessment is needed before purchase

- Quotation discussion built around total ownership factors rather than upfront price alone

If you are comparing detector options for a new project or replacing an unreliable unit, contact us with your target parameter, operating environment, accuracy expectation, and compliance needs. That allows a faster and more relevant recommendation instead of a generic product list.
