Fixed Sensor vs Portable Checks: Where Results Often Diverge

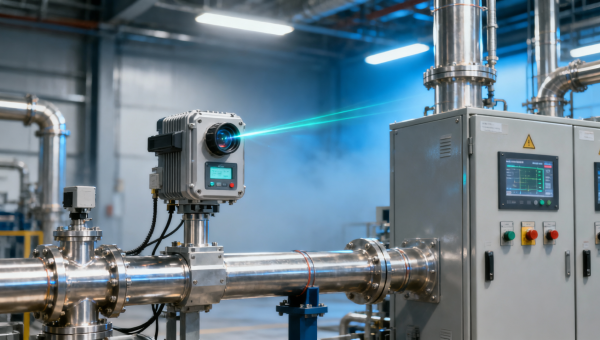

When comparing a fixed sensor with a portable sensor, results can diverge more often than many teams expect. Whether the application is oxygen detection with a paramagnetic, electrochemical, or infrared detector, or laboratory, control, or monitoring sensing, accuracy depends on context, calibration, and use conditions. This article explains where these gaps come from and how a high accuracy sensor strategy supports safer, better-informed decisions.

For operators, quality managers, project engineers, procurement teams, and financial approvers, the real question is not whether one device type is better in every case. The practical issue is where measurement differences appear, how large they can become, and what that means for compliance, process control, worker safety, and maintenance cost.

In instrumentation-intensive environments such as industrial manufacturing, energy systems, environmental monitoring, laboratories, and automated process lines, a difference of 0.5% to 2% can be acceptable in one task and unacceptable in another. Understanding the source of divergence helps teams choose the right sensing architecture instead of relying on assumptions.

Why Fixed and Portable Sensor Results Do Not Always Match

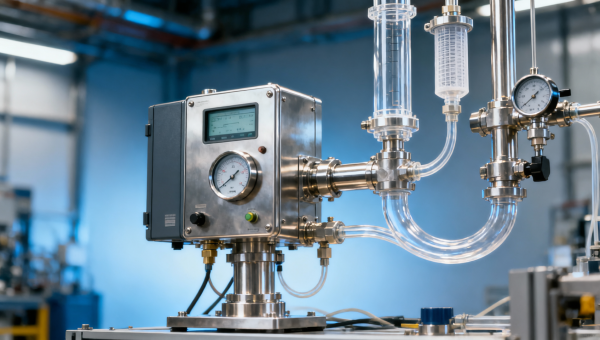

A fixed sensor is installed at a defined point and is designed to provide continuous or near-continuous data, often 24/7. A portable sensor is moved by an operator and used for spot checks, route inspections, confined space entry, troubleshooting, or temporary verification. Because they do not measure under the same spatial and timing conditions, divergence is often built into the process before calibration is even considered.

Location is one of the most common reasons. A fixed oxygen detector mounted at a height of 1.5 m near a process skid may report different values from a portable unit used 3 m away, closer to a vent point or floor pocket. In gas monitoring, vertical stratification, airflow, temperature gradients, and localized leakage can change readings within seconds and across distances of less than 2 m.

Time also matters. A fixed monitoring sensor may average, filter, or transmit values every 1 to 60 seconds depending on the control system design. A portable detector may display a more immediate value or a short-term peak. If a transient event lasts 8 seconds, one instrument may capture a peak while another only records a smoothed average.
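The averaging effect described above can be illustrated with a minimal sketch. The trace, the 1 Hz sample rate, and the 30-second trailing average are all assumed values chosen for illustration; a real fixed system may filter differently.

```python
def rolling_mean(samples, window):
    """Trailing moving average; early points average whatever is available."""
    averaged = []
    for i in range(len(samples)):
        chunk = samples[max(0, i - window + 1): i + 1]
        averaged.append(sum(chunk) / len(chunk))
    return averaged


# Hypothetical 1 Hz oxygen trace in % vol: steady 20.9 with an 8 s dip to 19.0.
trace = [20.9] * 30 + [19.0] * 8 + [20.9] * 30

instant_min = min(trace)                      # what an unfiltered display could show
smoothed_min = min(rolling_mean(trace, 30))   # what a 30 s average would report

print(f"instant minimum:  {instant_min:.2f} % vol")
print(f"smoothed minimum: {smoothed_min:.2f} % vol")
```

With these assumed numbers, the unfiltered reading reaches 19.0 % vol while the averaged value only falls to about 20.39 % vol, even though both describe the same event.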

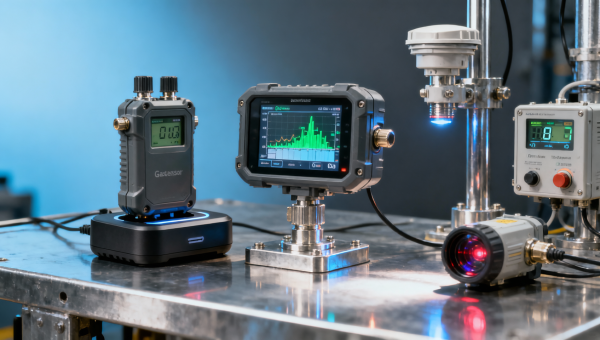

Sensor technology further affects results. A paramagnetic detector, electrochemical detector, and infrared detector do not respond identically to pressure variation, humidity, cross-sensitivity, or gas composition changes. In laboratories and industrial online monitoring, these technology differences can explain why two instruments that are both “within spec” still disagree in actual use.

Common divergence mechanisms in field use

- Sampling point mismatch: measurements taken 0.5 m to 5 m apart can represent different process conditions.

- Response time mismatch: T90 response time (the time needed to reach 90% of a step change) may range from under 15 seconds to over 45 seconds depending on technology and housing.

- Environmental variation: temperature shifts of 10°C to 20°C and humidity swings can change sensitivity.

- Maintenance status: a sensor overdue for calibration by 30 to 90 days can drift enough to affect decisions.

- Human handling: pump flow rate, probe insertion depth, and stabilization time can alter portable readings.
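To see how much a response time mismatch alone can contribute, the sketch below treats each sensor as a simple first-order system. That model is an assumption made for illustration, since real sensors may respond with dead time or more complex dynamics. The function name `step_fraction` is hypothetical.

```python
import math


def step_fraction(elapsed_s, t90_s):
    """Fraction of a step change displayed after elapsed_s, first-order model."""
    tau = t90_s / math.log(10)  # from 0.9 = 1 - exp(-T90 / tau)
    return 1.0 - math.exp(-elapsed_s / tau)


# Two hypothetical sensors read 20 s after the same step change.
for t90 in (15, 45):
    print(f"T90 = {t90:>2} s -> {step_fraction(20, t90):.0%} of the step shown")
```

Under this model, a sensor with a 15-second T90 has shown roughly 95% of the change after 20 seconds, while one with a 45-second T90 has shown roughly 64%. Both can be within specification and still disagree at that moment.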

The key operational lesson is simple: different results do not always mean one instrument is faulty. More often, they indicate that the measurement objective, sensor principle, installation condition, or user procedure is different. Teams that document these variables reduce false alarms, unnecessary replacements, and avoidable process stoppages.

Technology, Calibration, and Sampling Conditions That Create Gaps

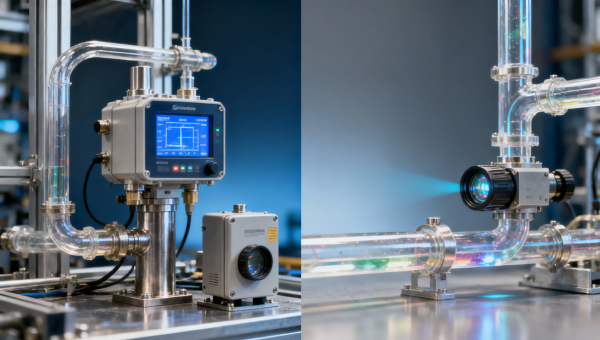

In the instrumentation industry, sensor divergence often comes from a combination of measurement principle and field condition rather than from a single defect. Fixed systems are usually integrated into SCADA, PLC, DCS, or BMS environments and may include signal conditioning, compensation logic, and alarm thresholds. Portable systems prioritize flexibility, rapid deployment, and user access. Those design goals influence how each device behaves in practical work.

Calibration intervals are a major factor. A fixed online sensor might be bump tested weekly and fully calibrated every 30, 90, or 180 days depending on risk category and site policy. A portable detector may be used across multiple zones and subjected to daily checks, but actual calibration discipline can vary between teams and shifts. When one device is maintained rigorously and the other is not, comparison loses value.

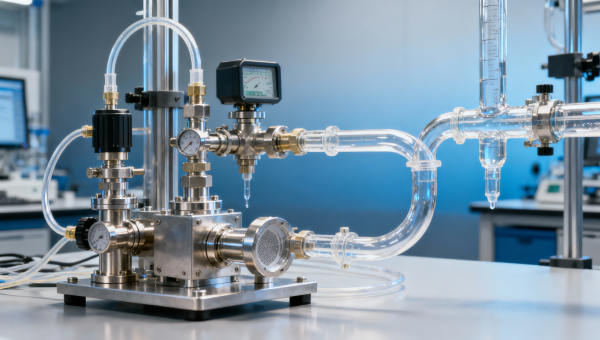

Sampling method can be even more decisive than calibration. A diffusion-based fixed detector reacts to ambient conditions at a mounted point, while a portable unit with a pump draws gas through tubing that may be 1 m to 10 m long. Tube material, pump rate, dead volume, filter condition, and purge time all influence the final reading. In liquid or laboratory sampling, similar issues arise with flow cell design, sample lag, and contamination risk.
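The effect of tubing length on a pumped portable reading can be estimated roughly from tube volume and pump flow. The sketch below assumes ideal plug flow and uses a 4 mm inner diameter and a 0.5 L/min pump rate, both hypothetical values; filters, fittings, and adsorption on the tube wall would add further delay.

```python
import math


def transport_delay_s(tube_length_m, inner_diameter_mm, pump_flow_l_min):
    """Seconds for gas to travel the tube, assuming ideal plug flow."""
    radius_m = inner_diameter_mm / 1000 / 2
    volume_l = math.pi * radius_m ** 2 * tube_length_m * 1000  # m^3 -> litres
    return volume_l / pump_flow_l_min * 60


# Assumed values: 4 mm inner diameter, 0.5 L/min pump flow.
for length_m in (1, 10):
    delay = transport_delay_s(length_m, 4, 0.5)
    print(f"{length_m:>2} m tube: about {delay:.1f} s before sensor response starts")
```

This delay comes before the sensor's own response time, so a reading taken too early through a long sample line reflects gas that was already in the tube rather than the point being checked.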

The table below shows where divergence frequently appears across common instrumentation scenarios, including oxygen monitoring, laboratory analysis, and process control environments.

The comparison makes it clear that matching a single reading is not enough. Teams should compare measurement purpose, installation geometry, sensor principle, and maintenance status at the same time. In many plants, once these four items are aligned, unexplained divergence drops significantly.

How sensor principle affects agreement

Paramagnetic oxygen detector

This type is valued for strong stability and is often used in continuous oxygen analysis. It performs well in controlled sampling systems, but pressure fluctuation, vibration, and sample conditioning quality can influence results if installation design is weak.

Electrochemical detector

Widely used in portable safety devices and many fixed gas monitors, electrochemical cells are sensitive, practical, and cost-effective. However, lifetime often falls in the 12 to 36 month range depending on exposure, and cross-sensitivity or environmental stress can shift performance over time.

Infrared detector

Infrared sensing is common for certain gases and process applications where selectivity and non-consumptive operation matter. Still, optics contamination, condensation, and sample path issues can create offsets between a fixed process analyzer and a portable verification device.

How to Judge Which Result Is More Reliable in a Real Application

In practice, the more reliable result is the one that best matches the intended decision. If the task is continuous alarm protection near a leak source, a fixed sensor installed according to hazard mapping may be the primary reference. If the task is verifying atmosphere inside a tank before entry, a portable check taken at top, middle, and bottom levels may be more relevant than the wall-mounted detector outside.

Reliability should therefore be assessed across at least 5 dimensions: measurement objective, location relevance, calibration status, environmental fit, and operator method. Procurement teams often focus on specification sheets alone, but technical evaluators know that performance on paper does not guarantee agreement in the field.

A useful approach is to create an acceptance band based on application criticality. For a non-critical trend measurement, a difference of ±1% of full scale may be acceptable. For oxygen safety monitoring near a threshold, tighter criteria or procedural escalation may be required. The point is to define comparison rules before a discrepancy becomes a dispute.
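An acceptance band of this kind is simple to write down as a rule. The following sketch assumes a hypothetical oxygen range of 0 to 25 % vol; the function name and the example readings are illustrative only, and each site would set its own band.

```python
def within_acceptance_band(fixed_value, portable_value, full_scale, band_pct):
    """True if the two readings differ by no more than band_pct of full scale."""
    allowed = full_scale * band_pct / 100.0
    return abs(fixed_value - portable_value) <= allowed


# Hypothetical oxygen range of 0 to 25 % vol with a 1 % of full scale band,
# which allows a difference of 0.25 % vol.
print(within_acceptance_band(20.9, 20.7, full_scale=25.0, band_pct=1.0))
print(within_acceptance_band(20.9, 20.5, full_scale=25.0, band_pct=1.0))
```

The first pair differs by 0.2 % vol and passes; the second differs by 0.4 % vol and would trigger whatever escalation step the site has defined.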

The following checklist helps cross-functional teams decide whether divergence points to normal operating variation or to a true sensor problem.

- Confirm both devices measured the same medium at nearly the same time, ideally within 30 to 60 seconds.

- Check whether the sample point, height, and airflow condition were equivalent.

- Review calibration date, bump test history, and last maintenance record for both units.

- Compare response time, filtering logic, and alarm delay settings.

- Verify tubing, filters, pumps, and accessories on the portable setup.

- Repeat the test at least 3 times if process conditions are unstable or transient.
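Parts of the checklist above can be captured in a small pre-check that runs before two readings are compared. This is a sketch under stated assumptions: the `Reading` record, its field names, and the 60-second default are hypothetical and would need to match how a site actually logs its data.

```python
from dataclasses import dataclass
from datetime import date, datetime


@dataclass
class Reading:
    value: float
    taken_at: datetime
    sample_point: str      # free-text label for location and height
    calibration_due: date


def comparison_issues(fixed, portable, max_gap_s=60):
    """List reasons why two readings are not a like-for-like comparison."""
    issues = []
    gap_s = abs((fixed.taken_at - portable.taken_at).total_seconds())
    if gap_s > max_gap_s:
        issues.append(f"readings taken {gap_s:.0f} s apart")
    if fixed.sample_point != portable.sample_point:
        issues.append("sample points differ")
    for name, reading in (("fixed", fixed), ("portable", portable)):
        if reading.calibration_due < reading.taken_at.date():
            issues.append(f"{name} unit overdue for calibration")
    return issues


# Hypothetical example: a portable check taken 5 minutes later at a vent,
# with a unit whose calibration due date has passed.
fixed = Reading(20.9, datetime(2024, 5, 1, 10, 0, 0), "skid A, 1.5 m", date(2024, 6, 1))
portable = Reading(20.4, datetime(2024, 5, 1, 10, 5, 0), "vent point", date(2024, 4, 1))
for issue in comparison_issues(fixed, portable):
    print(issue)
```

An empty list means the basic conditions for comparison were met. It does not confirm that either instrument is correct, which is why the remaining checklist items on response settings and accessories still need a manual review.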

If two instruments still diverge after these checks, a controlled verification against a reference standard or known calibration gas is the next step. In laboratories, this may involve traceable calibration routines. In industrial environments, it may mean using certified test gas, controlled flow, and documented stabilization times such as 60 to 180 seconds.

Decision priorities by stakeholder

Operators generally need speed, simplicity, and alarm confidence. Quality and safety managers care more about repeatability, audit trail, and defensible thresholds. Procurement and finance teams look at total cost over 2 to 5 years, including calibration labor, sensor replacement, downtime exposure, and service support. A sound evaluation process aligns these priorities rather than treating them as separate decisions.
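The cost elements listed above can be combined into a simple multi-year comparison. The sketch below is only an arithmetic illustration: the function name is hypothetical, all figures are placeholder numbers rather than market data, and it assumes flat annual costs with no discounting.

```python
def total_cost(purchase, calibration_labor, sensor_replacement,
               downtime_exposure, service_support, years):
    """Purchase price plus flat annual costs over the evaluation period."""
    annual = (calibration_labor + sensor_replacement
              + downtime_exposure + service_support)
    return purchase + years * annual


# Placeholder figures in arbitrary currency units, evaluated over 5 years.
option_a = total_cost(1000, 100, 50, 25, 25, years=5)   # higher purchase price
option_b = total_cost(600, 200, 100, 50, 50, years=5)   # lower purchase price
print(option_a, option_b)
```

With these placeholder inputs, the option with the lower purchase price ends up more expensive over five years, which is the kind of trade-off a joint evaluation is meant to expose.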

Selection and Procurement: Building a High Accuracy Sensor Strategy

A high accuracy sensor strategy does not mean buying only the highest-spec instrument in every category. It means choosing the right mix of fixed monitoring sensors, portable detectors, laboratory sensors, and control sensors according to risk, process stability, and response needs. Many facilities perform best with a layered approach rather than a single-device mindset.

For example, a plant may use fixed oxygen or gas detection for continuous area protection, portable instruments for route inspections and confined spaces, and laboratory analysis for confirmation or quality control. These three layers serve different time scales and decision points. When procurement treats them as substitutes instead of complements, measurement conflict becomes more likely.

The table below provides a practical comparison framework for buyers, engineers, distributors, and project managers reviewing instrumentation options.

The main conclusion is that procurement should compare systems based on decision role, not just instrument type. A lower initial purchase price can lead to higher operating cost if training, calibration control, and verification workflow are weak. Likewise, a sophisticated fixed network can underperform if sensor placement and service access are not considered during project design.

Four purchasing criteria that reduce divergence risk

- Specify the intended measurement task clearly: alarm, trend, control, compliance, or confirmation.

- Match sensor technology to medium, interference risk, and required response time.

- Review maintenance cycle, spare availability, and calibration gas logistics before purchase.

- Require commissioning, user training, and periodic verification procedures in the supply scope.

For distributors and agents, this strategy also improves customer retention. End users are less likely to question product value when expectations on fixed-versus-portable agreement are set correctly during technical consultation and project handover.

Implementation, Verification, and Maintenance Practices That Improve Agreement

Once equipment is selected, implementation quality becomes the deciding factor. Even a high accuracy sensor can produce poor agreement if installation, commissioning, and maintenance are inconsistent. In most industrial and laboratory settings, the first 30 days after deployment are the best time to establish baseline comparison routines between fixed systems and portable checks.

A practical implementation workflow usually includes 5 steps: hazard or process mapping, sensor placement review, calibration setup, operator training, and comparative verification. Each step should have a documented owner. Project managers benefit when these tasks are defined before FAT, SAT, or handover, not after readings begin to conflict.

Maintenance discipline is equally important. Fixed sensors often need inspection for blockage, contamination, vibration effects, wiring integrity, and enclosure condition. Portable devices need attention to pump health, filter replacement, battery condition, hose cleanliness, and docking records. Missing just one of these points can create a false comparison problem.

Below is a useful service-oriented maintenance guide that teams can adapt for instrumentation fleets across industrial, environmental, and laboratory applications.

The maintenance pattern above supports not only accuracy, but also budgeting and service planning. Finance approvers often respond well to this approach because it converts uncertain troubleshooting cost into scheduled preventive work with clearer labor and spare-part expectations.

Frequent implementation mistakes

Comparing unlike measurement points

Teams sometimes compare a fixed wall-mounted detector with a portable probe inserted into a vessel, trench, or vent plume. The values are not supposed to match exactly because the environments are different.

Ignoring stabilization time

Portable users may read the screen too quickly. Depending on tubing length and gas type, a waiting period of 20 to 90 seconds may be necessary before recording a stable result.
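One way to make the waiting period less dependent on operator judgment is to record a value only once recent samples have settled. The sketch below is a minimal illustration; the window of 5 samples and the tolerance of 0.1 are assumed values, and `first_stable_index` is a hypothetical helper, not a feature of any particular instrument.

```python
def first_stable_index(samples, window=5, tolerance=0.1):
    """Index of the first sample that completes a settled window, else None.

    A window counts as settled when the spread (max minus min) of its
    samples is within the tolerance.
    """
    for end in range(window, len(samples) + 1):
        recent = samples[end - window:end]
        if max(recent) - min(recent) <= tolerance:
            return end - 1
    return None


# Hypothetical portable readings in % vol while gas travels through the tubing.
readings = [20.9, 20.2, 19.6, 19.2, 19.05, 19.0, 19.0, 19.02, 19.0, 19.01]
index = first_stable_index(readings)
print(index, readings[index] if index is not None else "not yet stable")
```

In this example the first few values are still moving, and the reading only qualifies as stable at the ninth sample. Recording any of the earlier values would overstate the oxygen level at the sample point.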

Treating calibration as the only variable

Calibration is critical, but divergence can still come from airflow, sample line condition, mounting height, process fluctuation, or software averaging. Good troubleshooting checks all of these factors together.

FAQ for Engineers, Buyers, and Safety Teams

How much difference between a fixed sensor and a portable check is normal?

There is no single universal number because normal variation depends on sensor type, process stability, gas distribution, and the decision being made. In a controlled comparison at the same point and time, a small deviation may be expected. In real field conditions, larger differences can still be normal if location, response time, or sampling method differs.

When should a portable detector be treated as the primary reading?

A portable detector should take priority when the task is localized safety verification, temporary investigation, or confined space assessment where the fixed system does not monitor the exact internal atmosphere. It is especially relevant when readings must be taken at multiple heights or points within a space before work begins.

What should procurement ask suppliers before buying a mixed sensor setup?

Ask about calibration interval, response time, environmental limits, spare sensor availability, recommended bump test practice, integration interfaces, accessory requirements, and commissioning support. Also ask how the supplier recommends comparing fixed and portable readings in the same site, because that directly affects future troubleshooting.

How often should comparison checks be performed?

For stable applications, many teams perform periodic comparison after installation, after maintenance, and on a monthly or quarterly basis. For critical safety, high-drift environments, or newly commissioned systems, a higher frequency in the first 4 to 12 weeks may be justified until baseline behavior is well understood.

Fixed sensor and portable check results often diverge because they answer different measurement questions under different conditions. The most effective strategy is not to force identical readings, but to define the right role for each device, align calibration and sampling practices, and build verification routines into daily operations. For manufacturers, laboratories, utilities, environmental teams, and engineering projects, this approach improves safety, strengthens data confidence, and supports more efficient procurement decisions.

If you are evaluating oxygen detectors, laboratory sensors, control sensors, or industrial monitoring sensor solutions, now is a good time to review your current comparison method, maintenance schedule, and installation logic. Contact us to discuss your application, get a tailored sensor selection strategy, or explore more instrumentation solutions for reliable fixed and portable measurement alignment.
