Laboratory Sensor Repeatability Problems Often Start With Sampling

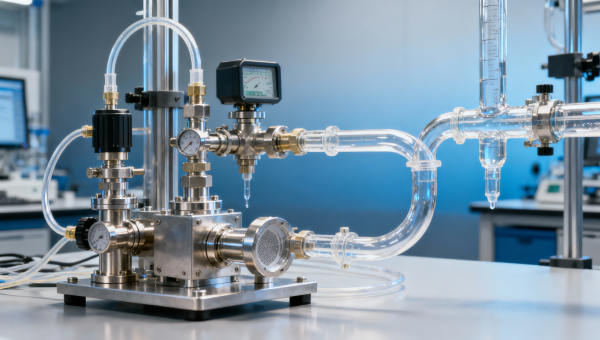

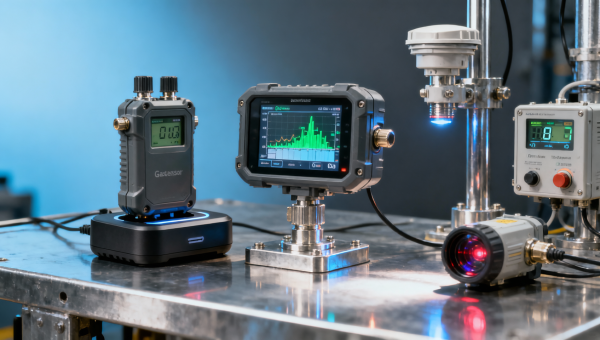

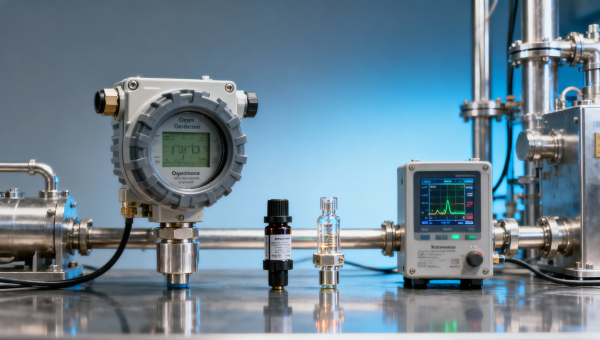

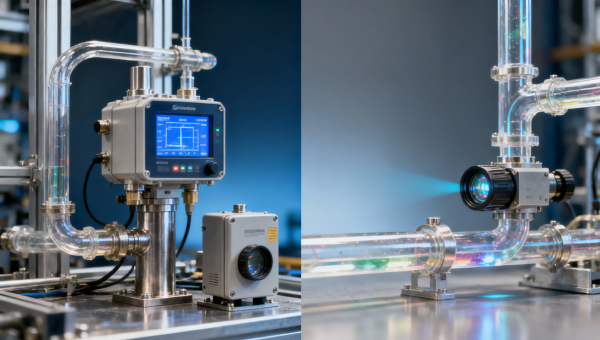

When a laboratory sensor shows poor repeatability, the root cause often lies in sampling rather than in the high-accuracy sensor itself. Whether you use a paramagnetic, electrochemical, or infrared detector, a dedicated oxygen detector, or a fixed, portable, control, or monitoring sensor, unstable sample handling can distort results, increase risk, and raise operating costs. Understanding this link helps users, evaluators, and buyers make more reliable decisions.

In laboratory analysis, repeatability is often treated as a sensor specification issue, yet many unstable readings begin before the sample ever reaches the sensing element. Across industrial manufacturing, environmental monitoring, energy systems, medical testing support, and process control, poor sampling can create concentration swings, temperature shifts, pressure variations, moisture interference, and contamination that no high-performance instrument can fully correct.

For operators, this means extra recalibration, retesting, and troubleshooting. For technical evaluators, it complicates instrument comparison and acceptance testing. For procurement teams and financial approvers, it can lead to buying the wrong upgrade, which may raise total cost of ownership by 10% to 30% over a typical service cycle. For quality and safety teams, unstable sampling can mask real process deviations or trigger false alarms.

The practical lesson is simple: if repeatability is weak, start by reviewing the sampling path, conditioning method, flow stability, line materials, and operating routine. In many cases, improving those factors delivers better results faster than replacing the laboratory sensor itself.

Why Sampling Is Often the First Source of Repeatability Error

A laboratory sensor can only measure the sample condition presented to it. If the sample entering the detector is inconsistent from one test cycle to the next, repeatability will suffer even when the sensor has a tight factory specification such as ±1% of reading, ±0.1% vol, or a low drift rate over 24 hours. The real issue is that many laboratories and industrial testing stations focus on detector accuracy while underestimating sample transport behavior.

Typical sampling-related errors include pressure pulsation, dead volume, leaks, adsorption on tubing walls, condensation, particulate carryover, and unstable flow. A difference of only 5% to 10% in sample flow, or a few seconds of extra lag time caused by tubing length, may change the reading enough to make operators suspect the sensor. This is especially common in oxygen analysis, infrared gas measurement, and electrochemical testing where response conditions strongly influence output stability.

In multi-shift operations, repeatability problems also arise from inconsistent sampling routines. One operator may purge for 30 seconds, another for 2 minutes. One may use a clean, dry line, while another connects through a hose previously exposed to solvent vapor or moisture. Such variation can easily exceed the sensor’s intrinsic uncertainty and produce poor batch-to-batch consistency.

The issue is not limited to one instrument category. Paramagnetic detectors may be affected by pressure and flow changes. Electrochemical detectors can be influenced by sample contamination and overexposure. Infrared detectors are sensitive to optical path contamination, moisture, and background gas effects. Fixed and portable sensors alike depend on how representative and stable the sample actually is.

Common mechanisms behind unstable results

- Unsteady sample flow that drifts outside the recommended range, for example 0.5 L/min to 2 L/min in many gas analysis setups.

- Sample temperature drifting by 3°C to 10°C between runs, changing vapor behavior and sensor response.

- Condensation forming when wet gas cools below its dew point in transfer lines or conditioning chambers.

- Carryover from previous samples due to dead legs, porous tubing, or delayed purge procedures.

- Pressure mismatch between calibration gas, ambient conditions, and actual sample introduction.

These factors matter because repeatability is not only a function of sensor design; it is the combined outcome of sampling, conditioning, instrument setup, and operator discipline. That is why root cause analysis should begin upstream, not only at the detector face.

Key Sampling Variables That Influence Laboratory Sensor Performance

A stable laboratory sensor reading depends on at least five controllable sampling variables: flow, pressure, temperature, cleanliness, and timing. Each one can introduce bias or scatter when not kept within a repeatable range. In many applications, improving these five variables reduces test variability more effectively than moving to a more expensive detector class.

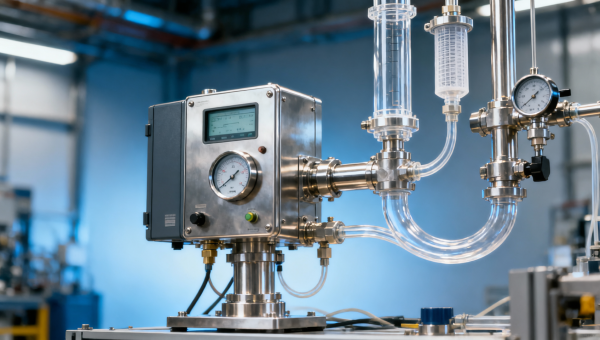

Flow is one of the most overlooked variables. If the sample flow is too low, the sensor may respond slowly or incompletely. If it is too high, pressure effects or cell loading may occur. In practical B2B settings, maintaining flow variation within ±2% to ±5% from run to run is often a better target than simply meeting a broad nominal flow recommendation. Flow restrictors, needle valves, and rotameters should be selected for repeatability, not just rough adjustment.
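To make the ±2% to ±5% target concrete, a short script can log the measured flow for each run and check every run against the run-to-run mean. This is a minimal sketch: the function names and flow values are hypothetical, and it assumes the flows come from an independent flowmeter reading rather than the instrument setpoint.

```python
def flow_deviations_pct(flows_lpm):
    """Percent deviation of each run's measured flow from the mean of all runs."""
    if not flows_lpm:
        raise ValueError("need at least one flow reading")
    mean_flow = sum(flows_lpm) / len(flows_lpm)
    return [100.0 * (f - mean_flow) / mean_flow for f in flows_lpm]


def flow_within_band(flows_lpm, band_pct=5.0):
    """True if every run stays within +/- band_pct of the run-to-run mean."""
    return all(abs(d) <= band_pct for d in flow_deviations_pct(flows_lpm))


# Hypothetical measured flows (L/min) for five consecutive runs
steady_runs = [1.00, 1.01, 0.99, 1.00, 1.00]
drifting_runs = [1.00, 1.10, 0.95, 1.00, 1.02]

print(flow_within_band(steady_runs, band_pct=2.0))    # True
print(flow_within_band(drifting_runs, band_pct=5.0))  # False
```

Comparing against the run-to-run mean, rather than the nominal setpoint, is deliberate here: it flags scatter between runs even when the average flow looks correct.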

Temperature control is equally important. A sample entering at 18°C in the morning and 27°C in the afternoon may behave differently, particularly if it contains moisture or volatile compounds. Heated lines, insulated tubing, or a conditioning block can keep the sample within a controlled band such as 20°C to 25°C or above the dew point by at least 5°C. This is a common requirement in gas analysis tied to industrial combustion, emissions monitoring, or laboratory verification.
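The "at least 5°C above dew point" rule can be checked numerically when the sample's temperature and relative humidity at the sampling point are known. The sketch below assumes the Magnus approximation with the commonly used coefficients b = 17.62 and c = 243.12 °C, which is reasonable for water vapor over liquid water in roughly the -45 °C to 60 °C range. The function names are hypothetical, and a directly measured sample dew point should take precedence where one is available.

```python
import math

# Magnus approximation coefficients (assumption: water vapor over liquid water)
MAGNUS_B = 17.62
MAGNUS_C_DEGC = 243.12


def dew_point_c(temp_c, rh_pct):
    """Approximate dew point (°C) from sample temperature and relative humidity."""
    if not 0 < rh_pct <= 100:
        raise ValueError("relative humidity must be in (0, 100]")
    gamma = math.log(rh_pct / 100.0) + MAGNUS_B * temp_c / (MAGNUS_C_DEGC + temp_c)
    return MAGNUS_C_DEGC * gamma / (MAGNUS_B - gamma)


def line_above_dew_point(line_temp_c, sample_temp_c, sample_rh_pct, margin_c=5.0):
    """True if the transfer line stays at least margin_c above the sample dew point."""
    return line_temp_c >= dew_point_c(sample_temp_c, sample_rh_pct) + margin_c


# Hypothetical sample drawn at 25 °C and 50 % RH (dew point about 13.9 °C)
print(line_above_dew_point(20.0, 25.0, 50.0))  # True
print(line_above_dew_point(16.0, 25.0, 50.0))  # False
```

In this example a 16 °C line is still above the dew point, but not by the 5 °C margin, so it is flagged.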

Material compatibility also affects repeatability. Some gases or vapors interact with tubing, seals, or filter materials. For example, moisture, oxygen, solvents, sulfur-bearing compounds, or reactive traces may adsorb or desorb over time. That creates memory effects in which the first 1 to 3 readings after a sample change are unstable. Depending on the application chemistry, suitable stainless steel, PTFE, or other inert wetted surfaces can reduce this effect.

Sampling variables and practical impact

The table below summarizes how common sampling variables affect repeatability in laboratory and industrial analysis environments.

The main takeaway is that repeatability is usually a system parameter, not a standalone sensor parameter. If these variables are not managed, even a premium instrument may deliver inconsistent results and create avoidable maintenance expense.

Timing and purge discipline

Timing is another critical variable. A purge period of 20 seconds may be sufficient for one line volume, but inadequate for another with longer tubing or filter assemblies. A practical rule is to verify at least 3 to 5 full line volume exchanges before recording data. In high-sensitivity analysis, operators may need a stabilization hold time of 60 to 180 seconds after the displayed value appears steady.
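The 3-to-5-exchange rule translates directly into a minimum purge time once the line volume and flow are known. The sketch below assumes plain cylindrical tubing plus a lumped volume for filters and fittings. The 4 mm bore, 30 mL filter volume, and 1 L/min flow are hypothetical example values, not recommendations.

```python
import math


def tubing_volume_ml(inner_diameter_mm, length_m):
    """Internal volume of cylindrical tubing in mL (1 cm^3 == 1 mL)."""
    radius_cm = inner_diameter_mm / 20.0  # diameter in mm -> radius in cm
    return math.pi * radius_cm ** 2 * (length_m * 100.0)


def min_purge_seconds(system_volume_ml, flow_l_per_min, exchanges=5):
    """Time needed to sweep the system volume `exchanges` times at a given flow."""
    flow_ml_per_s = flow_l_per_min * 1000.0 / 60.0
    return exchanges * system_volume_ml / flow_ml_per_s


FILTER_AND_FITTINGS_ML = 30.0  # hypothetical lumped volume of filter housing and fittings

for length_m in (1.0, 4.0):
    volume = tubing_volume_ml(4.0, length_m) + FILTER_AND_FITTINGS_ML
    print(f"{length_m:.0f} m line: {volume:.1f} mL, "
          f"5 exchanges at 1 L/min = {min_purge_seconds(volume, 1.0):.1f} s")
# 1 m line: 42.6 mL, 5 exchanges at 1 L/min = 12.8 s
# 4 m line: 80.3 mL, 5 exchanges at 1 L/min = 24.1 s
```

With these assumed values, a fixed 20-second purge covers the 1-meter line but falls short on the 4-meter one, before any stabilization hold is added. Tying purge time to the actual line volume avoids that trap.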

For project managers and quality teams, these timing rules should be written into SOPs and operator training records. Repeatability often improves when procedures become measurable, auditable, and shift-independent.

How Different Detector Types Respond to Sampling Problems

Different detector technologies fail in different ways when sampling is unstable. Understanding those differences helps technical evaluators avoid a common procurement mistake: changing sensor technology before fixing the sample path. In many cases, the same unstable sampling setup can make several detector types appear unreliable, even though the real weakness is shared upstream infrastructure.

A paramagnetic detector used for oxygen analysis often requires stable flow and pressure conditions. If the sample is pulsating or if calibration gas is delivered under different pressure conditions than the process sample, repeatability may degrade noticeably. Electrochemical detectors are especially vulnerable to contamination, cross-sensitivity, and exposure history. If purge quality is poor or the sample line retains previous gas, the sensor may recover slowly and show inconsistent readings during repeated tests.

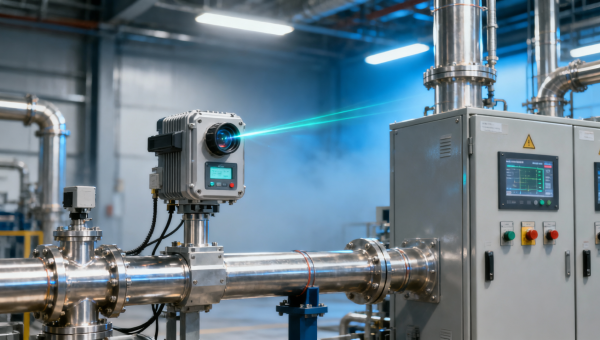

Infrared detectors are influenced by moisture, particulates, and background gas composition. Even a small amount of condensation on optical surfaces or in the sampling cell can cause drift and apparent loss of repeatability. Portable sensors face additional challenges because ambient conditions, tubing connection quality, and operator handling vary more widely than in fixed bench systems. Fixed sensors, in contrast, often benefit from more stable plumbing but may suffer from neglected filters or unnoticed line aging over 6 to 24 months of operation.

Control sensors and monitoring sensors in automated systems can be particularly affected by intermittent sample demand, cycling valves, or process fluctuations. When the sampling arrangement is designed only for average conditions rather than dynamic conditions, repeatability drops during transients. This matters for users who rely on trend data, alarm thresholds, or acceptance criteria rather than one-time spot checks.

Detector behavior comparison

The following comparison helps match troubleshooting priorities with detector type.

For buyers and distributors, this table highlights an important point: performance claims should always be reviewed together with sampling requirements. Asking for detector accuracy without asking for acceptable sample flow, pressure, dew point, or conditioning recommendations leaves a major technical gap in the purchase evaluation process.

A practical acceptance-test reminder

When comparing instruments, run at least 5 to 10 repeat measurements under the same controlled sampling conditions before judging the detector. If test conditions are not standardized, the comparison may reward the instrument that is simply more tolerant of poor sampling, not the one that is more accurate for the intended application.
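For a side-by-side acceptance test, repeatability is usually summarized as the sample standard deviation or the relative standard deviation (RSD) of the repeat readings. Below is a minimal sketch using Python's standard `statistics` module. The readings are hypothetical oxygen values on a reference gas, and the acceptance limit you compare against should come from your own application, not from this example.

```python
import statistics


def repeatability_summary(readings):
    """Mean, sample standard deviation, RSD (%) and range of repeat readings."""
    if len(readings) < 2:
        raise ValueError("need at least two repeat readings")
    mean = statistics.mean(readings)
    sd = statistics.stdev(readings)  # sample (n - 1) standard deviation
    return {
        "n": len(readings),
        "mean": mean,
        "stdev": sd,
        "rsd_pct": 100.0 * sd / abs(mean),
        "range": max(readings) - min(readings),
    }


# Hypothetical repeat readings (% vol O2) on the same reference gas and sampling setup
instrument_a = [20.91, 20.90, 20.92, 20.89, 20.91, 20.90]
instrument_b = [20.95, 20.84, 20.99, 20.86, 20.93, 20.88]

for name, runs in (("A", instrument_a), ("B", instrument_b)):
    s = repeatability_summary(runs)
    print(f"{name}: mean={s['mean']:.3f}  sd={s['stdev']:.3f}  RSD={s['rsd_pct']:.2f}%")
```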

A Step-by-Step Troubleshooting and Improvement Process

The most effective way to solve laboratory sensor repeatability problems is to troubleshoot the sampling system in a defined sequence. Jumping directly to recalibration or sensor replacement may solve nothing if the true issue is moisture, lag time, leakage, or unstable inlet conditions. A structured process also makes cross-functional communication easier among operators, engineers, quality staff, and purchasing teams.

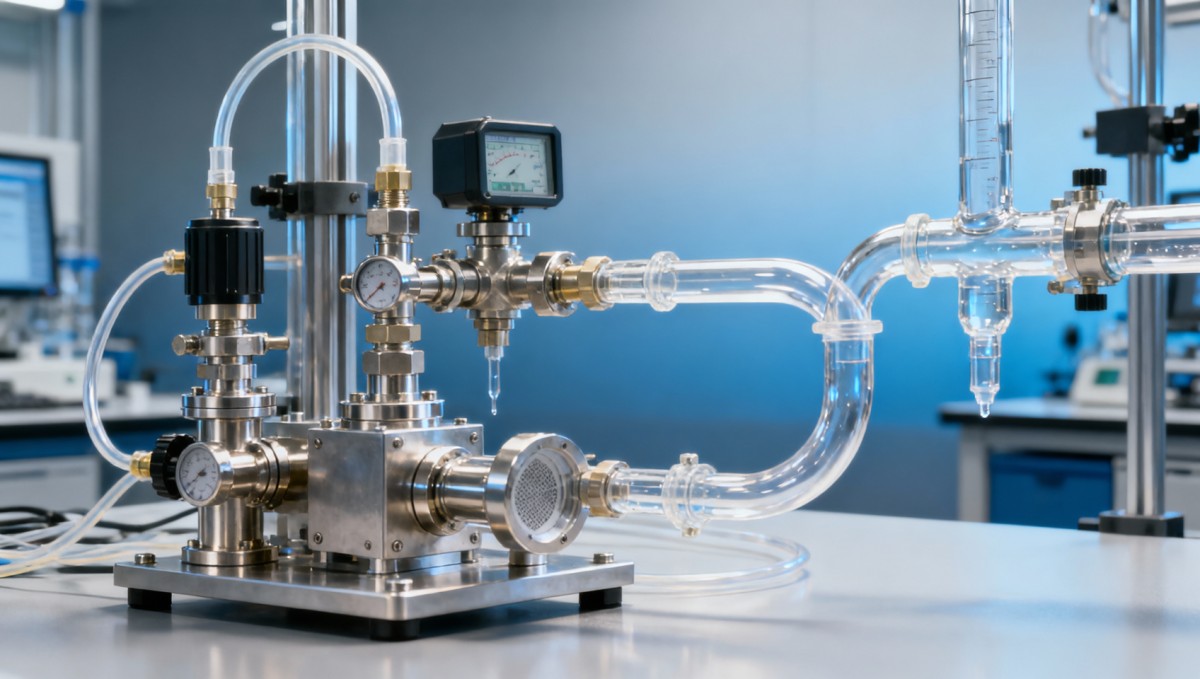

Start by documenting the full sample path from source to detector. This includes tubing length, internal diameter, filter stages, regulators, valves, pumps, traps, and vent conditions. Even one undocumented fitting change can alter response behavior. In many facilities, a line that grew from 1 meter to 4 meters through ad hoc modifications shows slower stabilization and worse repeatability without anyone noticing the root cause.

Next, verify the three basic operating conditions: stable flow, matched pressure, and controlled temperature. Then confirm purge time, line cleanliness, and calibration method. Finally, review the sensor itself. This order often reduces troubleshooting time by 20% to 40% because it addresses the highest-probability causes first.

Five-step troubleshooting workflow

- Check for leaks, loose fittings, blocked filters, and damaged seals. A simple pressure hold or leak test should be part of routine monthly inspection.

- Measure actual sample flow and compare it with the instrument’s recommended operating range. Do not rely only on setpoint assumptions.

- Review temperature and moisture management. If condensation is possible, maintain the line above dew point or add conditioning before the sensor.

- Standardize purge and stabilization time. Record results only after the reading remains stable for a defined interval such as 30 to 60 seconds.

- Recheck calibration using the same sampling path or a path that reproduces actual operating pressure and flow as closely as possible.

This method is especially useful for project managers responsible for commissioning, laboratory supervisors managing several benches, and distributors helping end users diagnose field complaints. It avoids unnecessary replacement cycles and supports a more data-based service discussion.

Signs the sampling system needs redesign, not adjustment

- Stabilization time regularly exceeds 3 minutes despite correct calibration.

- Readings differ by more than the acceptable process tolerance, for example ±0.2% vol or ±2% of reading, across repeated tests.

- Filter replacement frequency is unusually high, such as weekly instead of monthly.

- Moisture appears repeatedly in lines or traps under normal operation.

- Portable and fixed systems give different values from the same source because sample handling differs.
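The symptom list above can be turned into a simple screening check run against maintenance records. This is a hypothetical sketch: the class and field names are invented for illustration, the 180-second and 30-day defaults mirror the examples in the list, and each facility should substitute its own tolerances.

```python
from dataclasses import dataclass


@dataclass
class SamplingHistory:
    """Hypothetical summary of recent operating and maintenance records."""
    typical_stabilization_s: float
    worst_repeat_deviation: float      # largest |reading - mean| across repeat tests
    process_tolerance: float           # acceptable +/- tolerance, same unit as above
    filter_change_interval_days: float
    recurring_moisture: bool
    portable_fixed_difference: float   # |portable - fixed| on the same source


def redesign_signals(h, max_stabilization_s=180.0, expected_filter_days=30.0):
    """Return the symptoms that point to redesign rather than adjustment."""
    signals = []
    if h.typical_stabilization_s > max_stabilization_s:
        signals.append("slow stabilization")
    if h.worst_repeat_deviation > h.process_tolerance:
        signals.append("repeat tests outside tolerance")
    if h.filter_change_interval_days < expected_filter_days:
        signals.append("filters clogging faster than expected")
    if h.recurring_moisture:
        signals.append("recurring moisture in lines or traps")
    if h.portable_fixed_difference > h.process_tolerance:
        signals.append("portable and fixed systems disagree")
    return signals


bench = SamplingHistory(
    typical_stabilization_s=240, worst_repeat_deviation=0.25, process_tolerance=0.2,
    filter_change_interval_days=7, recurring_moisture=True, portable_fixed_difference=0.1,
)
print(redesign_signals(bench))
```

Several signals firing together, as in this made-up bench record, is a stronger case for redesign than any single one.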

If these symptoms are present, redesign may be more economical than repeated maintenance. A better sampling panel, shorter line routing, improved material selection, or a dedicated conditioning stage can lower total lifecycle cost while improving trust in laboratory data.

What Buyers, Evaluators, and Project Teams Should Check Before Purchase

A procurement decision should not focus only on detector type, stated accuracy, or unit price. In instrumentation projects, repeatability in real use depends on the complete measurement chain. That means technical evaluators and purchasing teams should ask whether the proposed sensor will be installed with the right sampling arrangement, not merely whether the sensor data sheet looks strong.

For many B2B buyers, the best evaluation model combines 4 dimensions: analytical suitability, sampling compatibility, maintenance burden, and lifecycle cost. A lower-priced instrument may require more frequent filter changes, longer operator time per test, or additional sample conditioning hardware. Over 12 to 36 months, those indirect costs can exceed the initial savings.
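A simple way to test whether indirect costs outweigh a lower purchase price is to add them up month by month. The figures below are entirely hypothetical and only illustrate the arithmetic. Real comparisons should use quoted prices, measured operator time, and your own labor rate.

```python
def lifecycle_cost(purchase, monthly_consumables, monthly_labor_hours, labor_rate, months):
    """Purchase price plus recurring consumables and operator labor over `months`."""
    monthly_running = monthly_consumables + monthly_labor_hours * labor_rate
    return purchase + months * monthly_running


LABOR_RATE = 50  # hypothetical cost per technician hour

# Hypothetical: A is cheaper to buy but needs more filters and operator time
options = {
    "A": dict(purchase=8000, monthly_consumables=60, monthly_labor_hours=6),
    "B": dict(purchase=11000, monthly_consumables=25, monthly_labor_hours=2),
}

for months in (12, 24, 36):
    costs = {k: lifecycle_cost(labor_rate=LABOR_RATE, months=months, **v)
             for k, v in options.items()}
    print(months, costs)
# 12 {'A': 12320, 'B': 12500}
# 24 {'A': 16640, 'B': 14000}
# 36 {'A': 20960, 'B': 15500}
```

In this made-up case the lower-priced option is ahead at 12 months but behind by 24 and 36 months.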

Finance approvers also benefit from this broader view. Repeat tests, product release delays, wasted calibration gas, and technician labor all carry cost. If a poor sampling design causes just 15 extra minutes per shift across 2 shifts per day, the annual labor impact can become significant. The more demanding the quality environment, the more valuable stable repeatability becomes.
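The labor example can be worked through explicitly. The sketch below treats operating days per year and the hourly labor rate as inputs because both vary by site. The rate used in the example is a hypothetical placeholder.

```python
def annual_extra_labor(minutes_per_shift, shifts_per_day, operating_days, hourly_rate):
    """Extra technician hours per year caused by slow sampling, and their cost."""
    hours = minutes_per_shift * shifts_per_day * operating_days / 60.0
    return hours, hours * hourly_rate


for days in (250, 365):  # e.g. weekday-only vs. continuous operation
    hours, cost = annual_extra_labor(15, 2, days, hourly_rate=40.0)  # hypothetical rate
    print(f"{days} days: {hours:.1f} h/year, cost {cost:,.0f}")
# 250 days: 125.0 h/year, cost 5,000
# 365 days: 182.5 h/year, cost 7,300
```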

Pre-purchase evaluation checklist

The table below helps align technical and commercial review before selecting a laboratory sensor or analyzer package.

This checklist helps users and distributors speak the same language during quotation, technical clarification, installation, and after-sales support. It also reduces the risk of approving a sensor that looks correct on paper but performs poorly in the actual sampling environment.

Questions worth asking suppliers

- What sample flow and pressure range is needed for reliable repeatability, not just basic operation?

- What line material and filter arrangement are recommended for the target media?

- How should calibration be matched to actual sampling conditions?

- What routine maintenance interval is typical under clean, moderate, or heavy-duty service?

- Can the supplier support commissioning guidance, SOP advice, or troubleshooting for sample handling?

These questions are especially relevant for engineering teams, quality departments, and procurement groups comparing multiple vendors in the instrumentation market.

FAQ and Practical Guidance for Daily Operation

Even after a suitable laboratory sensor is selected, repeatability still depends on daily discipline. The following questions reflect common concerns from operators, technical reviewers, and buyers who need practical guidance rather than generic performance claims.

How can operators quickly tell whether repeatability problems come from sampling or the sensor?

A simple field check is to run repeated measurements using a stable reference gas or standard sample while keeping the same sensor in place. If repeatability improves under controlled reference conditions but worsens with the process sample, the problem usually lies in sampling, conditioning, or sample source stability. Running 5 consecutive tests with controlled flow and temperature often reveals the pattern within 15 to 30 minutes.
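The comparison described above can be reduced to a rough numeric check: compute the spread of repeat readings on the reference gas and on the process sample, then see how much larger the process spread is. This sketch uses a ratio threshold of 2 as an arbitrary, hypothetical cutoff. A formal variance test (such as an F-test) would be more rigorous, and a genuinely fluctuating process source will also inflate the process spread.

```python
import statistics


def likely_error_source(reference_runs, process_runs, ratio_threshold=2.0):
    """Rough screening of where repeatability error originates.

    Compares the sample standard deviation of repeat readings on a stable
    reference with that on the process sample, using the same sensor.
    """
    ref_sd = statistics.stdev(reference_runs)
    proc_sd = statistics.stdev(process_runs)
    if proc_sd > ratio_threshold * ref_sd:
        return "sampling path, conditioning, or sample source"
    return "sensor, or a factor shared by both paths"


reference = [20.90, 20.91, 20.90, 20.91, 20.90]  # hypothetical, % vol O2
process = [20.72, 21.05, 20.88, 20.61, 21.12]    # hypothetical, % vol O2
print(likely_error_source(reference, process))
```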

What is a reasonable purge and stabilization practice?

A good starting point is 3 to 5 line volume exchanges, followed by a stabilization hold period long enough to confirm the displayed value is no longer trending. In many routine gas analysis tasks, this means 30 to 120 seconds. For reactive gases, long tubing, or moisture-sensitive infrared analysis, a longer interval may be necessary. The correct value should be validated once and documented in the procedure.

When should a sampling system be upgraded instead of maintained?

Upgrade the sampling system when maintenance becomes frequent, stabilization is consistently slow, or repeatability remains outside acceptable limits after routine service. If filters clog too often, condensation persists, or line memory effects cannot be controlled, redesign usually gives a better return than repeating the same repairs. Many facilities review this threshold against 6 to 12 months of maintenance history.

What should distributors and service teams emphasize during customer support?

They should ask for the full sampling picture: line length, media type, ambient temperature, flow setting, conditioning steps, purge time, and calibration routine. Support that focuses only on the detector can miss the true cause and prolong complaint resolution. A standard troubleshooting form with 8 to 10 required data points usually improves first-response accuracy.
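A standard troubleshooting form can be as simple as a record with required fields and a completeness check before a ticket is accepted. The sketch below includes the seven items listed above plus two hypothetical extras (tubing inner diameter and sample pressure) to reach nine data points. The class and field names are illustrative, not a standard schema.

```python
from dataclasses import dataclass, fields
from typing import Optional


@dataclass
class SamplingSupportForm:
    """Hypothetical intake record for a sampling-related support request."""
    line_length_m: Optional[float] = None
    media_type: Optional[str] = None
    ambient_temp_c: Optional[float] = None
    flow_setting_lpm: Optional[float] = None
    conditioning_steps: Optional[str] = None
    purge_time_s: Optional[float] = None
    calibration_routine: Optional[str] = None
    line_inner_diameter_mm: Optional[float] = None  # hypothetical extra field
    sample_pressure_kpa: Optional[float] = None     # hypothetical extra field

    def missing_fields(self):
        """Names of fields still left empty (None or blank text)."""
        return [f.name for f in fields(self) if getattr(self, f.name) in (None, "")]


ticket = SamplingSupportForm(line_length_m=3.5, media_type="flue gas", flow_setting_lpm=1.0)
print(ticket.missing_fields())
```

Rejecting or flagging tickets with missing fields before a technician responds is what keeps the first reply focused on the sample path rather than on the detector alone.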

Laboratory sensor repeatability problems often start with sampling because the detector can only measure what the sample path delivers. For instrumentation users across manufacturing, energy, environmental monitoring, laboratory analysis, and automation control, better flow management, temperature control, material selection, purge discipline, and purchasing review can significantly improve measurement stability. If you are evaluating a new analyzer, troubleshooting unstable results, or planning a more reliable sampling configuration, contact us to discuss your application, get a tailored solution, and learn more about practical measurement and monitoring options.
