Thermal Analysis Data Can Mislead When Sample Prep Is Overlooked

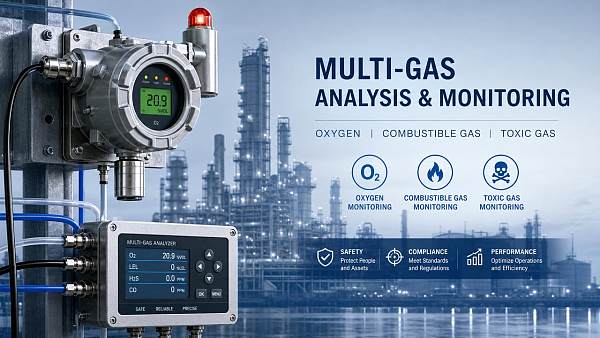

Thermal analysis is often treated as an objective source of truth, but in practice poor sample preparation can distort results enough to trigger wrong technical decisions, quality failures, unnecessary troubleshooting, or even safety risks. For teams that use thermal analysis alongside laser analysis, paramagnetic measurement, portable or continuous monitoring, industrial gas monitoring, or a fixed analyzer inside an analyzer enclosure, the message is simple: if sample prep is inconsistent, the data may look precise and still be misleading. The most useful way to manage this risk is to identify where preparation changes the material, standardize handling, and verify whether the result reflects the sample itself or the way it was prepared.

Why sample preparation is often the hidden reason thermal analysis data goes wrong

Most readers searching for this topic are not asking whether thermal analysis is valuable. They already know it is. What they need to understand is why credible-looking data can still produce the wrong conclusion. In many cases, the root cause is not instrument failure but uncontrolled sample preparation.

Thermal analysis methods such as TGA, DSC, DTA, and related material characterization techniques are highly sensitive to sample condition. Small differences in particle size, moisture exposure, sample mass, packing density, surface contamination, oxidation before testing, or container selection can change heat flow, decomposition profile, transition temperature, and reaction onset. This means two operators can test the “same” material and obtain different answers if the sample was prepared differently.

For quality teams, this creates false alarms or missed defects. For technical evaluators, it can undermine material comparison and method validation. For project managers and decision-makers, it creates business risk because procurement, process adjustment, safety review, or customer acceptance may be based on distorted evidence. For distributors and end users, it can lead to confusion about product performance or inconsistent field claims.

What target readers usually care about most

The central concern is not theory. It is decision reliability. Different audiences ask this in different ways:

- Operators and users: Why did the result change from one run to another, and what should be controlled before testing?

- Technical evaluators: Is the observed transition, mass loss, or thermal event a true material property or a preparation artifact?

- Business decision-makers: Can this data be trusted enough for supplier approval, process change, product release, or risk control?

- Quality and safety personnel: Could preparation error hide instability, contamination, moisture sensitivity, or dangerous decomposition behavior?

- Project leaders: How do we reduce retesting, delays, and disagreement across teams or sites?

- Channel partners and distributors: How do we explain performance variation without damaging confidence in the product or the instrument?

Because of these concerns, the most valuable content is practical: where errors come from, how to detect them, how to standardize preparation, and how to know whether results are ready for action.

Common sample preparation mistakes that create misleading thermal analysis results

Several preparation issues repeatedly affect data quality across industries.

- Non-uniform sample mass: Too much material can slow heat transfer and broaden or shift peaks, while too little can make small events hard to resolve.

- Inconsistent particle size: Differences in grinding, sieving, or homogenization can change reaction rate and thermal response.

- Moisture gain or loss before testing: Hygroscopic materials can absorb water from air quickly, while volatile components can evaporate during handling.

- Contamination during transfer: Residues from tools, containers, gloves, or the environment may introduce false signals.

- Improper pan or crucible selection: Open, sealed, inert, or reactive containers can produce very different outcomes.

- Uneven packing or contact: Sample geometry affects thermal conductivity and reproducibility.

- Oxidation before analysis: Air-sensitive materials may change before the run even begins.

- Uncontrolled storage time: A sample tested immediately may not behave like one left on a bench for hours or days.

These problems are especially important when thermal analysis is part of a larger measurement chain that also includes laser analysis, paramagnetic measurement, industrial gas monitoring, or custom measurement workflows. If upstream handling is inconsistent, downstream comparison becomes unreliable.

How misleading data affects operations, quality, and business decisions

Misleading thermal analysis data does not stay in the lab. It influences real-world outcomes.

In manufacturing, a false indication of instability may trigger unnecessary process changes, batch rejection, or supplier disputes. In quality control, a missed thermal event can allow nonconforming or degraded material to pass inspection. In safety management, underestimating decomposition behavior may expose teams to storage, transport, or processing hazards. In engineering projects, inconsistent results can delay commissioning, validation, or root-cause investigations.

There is also a cost dimension. Repeated testing, expert review, delayed release, and unnecessary troubleshooting consume time and budget. For organizations investing in portable monitoring, continuous monitoring, or fixed analyzer systems in analyzer enclosures, data credibility is part of the return on investment. High-performance instrumentation cannot compensate for poor sample discipline. Accurate decisions depend on both measurement technology and controlled preparation.

How to tell whether a thermal analysis result reflects the material or the prep method

A useful judgment framework is to ask four questions:

- Was the sample handling documented well enough to repeat exactly? If not, reproducibility is already weak.

- Do replicate runs agree within the repeatability expected for the method? Large variation often points to prep inconsistency rather than true material behavior.

- Did the result change after altering preparation conditions? If small prep changes create large analytical shifts, the method may be prep-sensitive.

- Does the result align with other material knowledge? Compare with process history, composition data, supplier specs, and complementary measurements.
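The replicate question in particular lends itself to a simple numeric check. The following is a minimal sketch, not a validated acceptance procedure: the function name `replicate_agreement`, the example onset temperatures, and the 1.0 °C tolerance are all hypothetical and would need to be replaced with values from the lab's own method.

```python
from statistics import mean, stdev


def replicate_agreement(values, max_spread):
    """Summarize replicate results and flag whether they agree.

    values: results from replicate runs, all in the same unit.
    max_spread: largest acceptable difference between the highest and
        lowest replicate, in that same unit (an assumed, lab-defined limit).
    """
    if len(values) < 2:
        raise ValueError("need at least two replicate results")
    spread = max(values) - min(values)
    return {
        "mean": mean(values),
        "stdev": stdev(values),  # sample standard deviation
        "spread": spread,
        "agrees": spread <= max_spread,
    }


# Hypothetical decomposition onset temperatures (deg C) from three runs each
consistent_prep = [251.8, 252.4, 252.1]
inconsistent_prep = [251.8, 256.9, 249.5]

for label, runs in [("consistent", consistent_prep),
                    ("inconsistent", inconsistent_prep)]:
    summary = replicate_agreement(runs, max_spread=1.0)
    verdict = "agree" if summary["agrees"] else "review sample prep first"
    print(f"{label}: spread = {summary['spread']:.1f} deg C -> {verdict}")
```

An absolute spread is used here rather than a percentage so the same check works for temperatures, mass loss, or enthalpy without depending on the unit's zero point.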

Cross-checking is especially valuable. If thermal analysis suggests an event that is not supported by related observations from laser analysis, paramagnetic measurement, industrial gas monitoring, or process data, the team should investigate preparation and method design before making a major decision.

Practical ways to reduce sample prep error and improve confidence

The strongest improvement usually comes from standardization, not complexity. Teams can reduce risk by implementing a small number of disciplined practices:

- Define a written sample preparation SOP for each material family.

- Specify sample mass range, particle size treatment, storage condition, and allowable handling time.

- Control humidity, temperature, and exposure to air where relevant.

- Use clean, compatible tools and containers to avoid contamination.

- Standardize pan or crucible type and loading method.

- Run replicates for sensitive or high-impact decisions.

- Train operators to recognize when appearance, texture, or handling behavior suggests the sample has changed.

- Document deviations so reviewers can judge whether a result is decision-ready.
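One way to make these practices checkable is to record the SOP limits as data and compare each sample's preparation record against them. The sketch below is illustrative only: the class names `PrepSOP` and `PrepRecord`, the field names, and every limit value are assumptions, not figures from any standard or from a specific instrument vendor.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PrepSOP:
    """Hypothetical preparation limits for one material family."""
    mass_mg_min: float
    mass_mg_max: float
    max_handling_min: float   # allowable time between opening and loading
    max_humidity_pct: float   # allowable relative humidity during handling
    pan_type: str


@dataclass(frozen=True)
class PrepRecord:
    """What was actually done for one sample."""
    mass_mg: float
    handling_min: float
    humidity_pct: float
    pan_type: str


def find_deviations(sop, record):
    """Return a list of human-readable deviations from the SOP."""
    deviations = []
    if not (sop.mass_mg_min <= record.mass_mg <= sop.mass_mg_max):
        deviations.append(
            f"sample mass {record.mass_mg} mg outside "
            f"{sop.mass_mg_min}-{sop.mass_mg_max} mg"
        )
    if record.handling_min > sop.max_handling_min:
        deviations.append(
            f"handling time {record.handling_min} min exceeds "
            f"{sop.max_handling_min} min"
        )
    if record.humidity_pct > sop.max_humidity_pct:
        deviations.append(
            f"humidity {record.humidity_pct}% exceeds {sop.max_humidity_pct}%"
        )
    if record.pan_type != sop.pan_type:
        deviations.append(
            f"pan type '{record.pan_type}' differs from '{sop.pan_type}'"
        )
    return deviations


# Assumed example limits and one example record
sop = PrepSOP(mass_mg_min=5.0, mass_mg_max=10.0, max_handling_min=15.0,
              max_humidity_pct=40.0, pan_type="sealed aluminum")
record = PrepRecord(mass_mg=12.3, handling_min=10.0,
                    humidity_pct=55.0, pan_type="sealed aluminum")

for item in find_deviations(sop, record):
    print("DEVIATION:", item)
```

An empty list means the record stayed within the SOP. A non-empty list gives reviewers the documented deviations they need to judge whether the result is decision-ready.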

For organizations operating across multiple sites, this matters even more. A shared method with defined preparation controls can reduce disagreement between labs, improve supplier comparisons, and support more consistent custom measurement results.

When advanced instrumentation still needs stronger front-end discipline

Many organizations invest in better analyzers to solve inconsistent data, but the problem often starts before the sample enters the instrument. This is true across the instrumentation industry, where analytical performance depends on the full measurement workflow.

Whether the application involves laboratory thermal analysis, field-deployed portable monitoring, continuous monitoring systems, or integrated analyzer enclosure solutions, front-end discipline is what makes data trustworthy. Advanced equipment improves sensitivity and control, but it does not remove sample bias introduced by poor collection, storage, transfer, or preparation.

For managers evaluating instruments or methods, this is an important point: equipment capability should be assessed together with sample handling requirements, operator training, and workflow robustness. Otherwise, the organization may overestimate the value of the instrument and underestimate the real source of error.

Conclusion: accurate thermal analysis starts before the test begins

Thermal analysis can reveal critical information about stability, composition, transitions, and decomposition, but the result is only as reliable as the sample preparation behind it. When preparation is overlooked, even sophisticated thermal analysis, laser analysis, paramagnetic measurement, portable monitoring, continuous monitoring, and industrial gas monitoring workflows can support the wrong conclusion with apparently precise data.

The clearest takeaway is that sample preparation is not a minor lab detail. It is a decision-quality issue. Teams that standardize handling, document preparation variables, and verify results against complementary evidence are far more likely to generate accurate, safe, and actionable measurement outcomes. If the goal is trustworthy analysis, the right place to start is not only the instrument but the sample itself.
