Why a High Accuracy Sensor May Still Miss Critical Changes

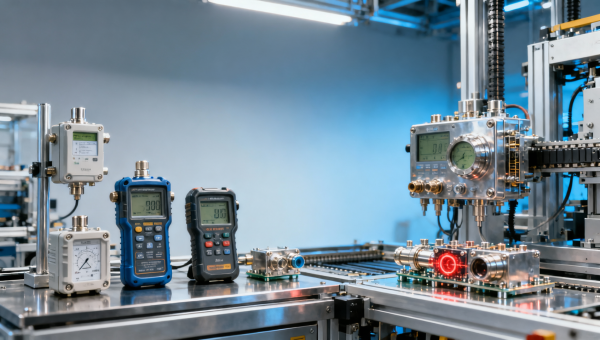

A high accuracy sensor can still fail to capture critical changes when response speed, operating conditions, and application fit are overlooked. Whether you rely on a paramagnetic, electrochemical, infrared, or oxygen detector, deployed as a fixed, portable, laboratory, control, or monitoring sensor, real-world performance depends on more than the accuracy figure alone. Understanding these limits helps buyers, engineers, and safety teams make better-informed decisions.

Why accuracy alone does not guarantee reliable change detection

In the instrumentation industry, accuracy is often the first parameter people compare, and it is easy to understand why. A specification such as ±1% of reading or ±0.1% of full scale appears objective, clean, and comparable. Yet in industrial manufacturing, energy systems, laboratory analysis, environmental monitoring, and safety control, critical events often unfold faster, or under harsher conditions, than the stable test conditions behind that accuracy figure.
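
As a rough illustration of why two accuracy figures are not always directly comparable, the sketch below converts a "% of reading" and a "% of full scale" specification into absolute error bands. The 0 to 100 span and the three readings are hypothetical values chosen for the example, not figures from any datasheet.

```python
def error_from_percent_of_reading(reading: float, pct: float) -> float:
    """Absolute error band for a '% of reading' specification."""
    return abs(reading) * pct / 100.0


def error_from_percent_of_full_scale(full_scale: float, pct: float) -> float:
    """Absolute error band for a '% of full scale' specification."""
    return abs(full_scale) * pct / 100.0


FULL_SCALE = 100.0  # hypothetical measuring span (assumption)

for reading in (5.0, 50.0, 100.0):  # hypothetical readings (assumption)
    of_reading = error_from_percent_of_reading(reading, 1.0)
    of_full_scale = error_from_percent_of_full_scale(FULL_SCALE, 0.1)
    print(f"reading={reading:6.1f}  ±1% of reading={of_reading:.3f}  "
          f"±0.1% of full scale={of_full_scale:.3f}")
```

With these assumed numbers, the full-scale specification is the looser of the two below a reading of 10 and the tighter one above it, so the comparison depends on where in the span the process actually operates.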

A sensor may be highly accurate in a stable calibration environment of 20°C–25°C, low vibration, and controlled humidity, but still miss a dangerous concentration spike, a pressure pulse, or a temperature swing in the field. This gap matters to operators, technical evaluators, procurement teams, quality managers, and project leaders because missed changes create risk far beyond measurement error. They can affect process control, product quality, maintenance timing, and site safety.

For example, an oxygen detector with excellent static accuracy may not respond quickly enough to a rapid gas displacement event in a confined space. A laboratory sensor may produce highly precise results in batch testing, but a monitoring sensor installed for continuous duty may drift when exposed to dust, solvent vapors, or temperature cycling over 8–24 hours of operation. In these cases, the issue is not whether the sensor is “good” or “bad.” The issue is application fit.

For B2B buyers, the practical question is not simply “How accurate is the sensor?” It is “How accurately does the sensor represent the real process under my conditions, at the speed my process changes, across the maintenance cycle I can actually support?” That is a broader and more useful evaluation standard.

The four performance factors that often matter more than headline accuracy

When measurement performance is reviewed in real projects, four factors repeatedly determine whether a high accuracy sensor performs well or fails to capture critical changes. These factors should be evaluated together during early selection, pilot validation, and final approval.

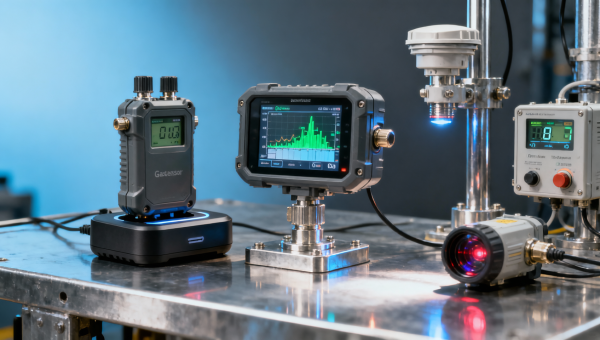

- Response time: In many gas, flow, and thermal applications, a T90 response time of a few seconds versus tens of seconds can decide whether the reading arrives in time to act on. Fast process changes demand fast sensor behavior.

- Environmental tolerance: Temperature, humidity, pressure fluctuation, vibration, and contamination can alter signal stability even when nominal accuracy remains attractive on paper.

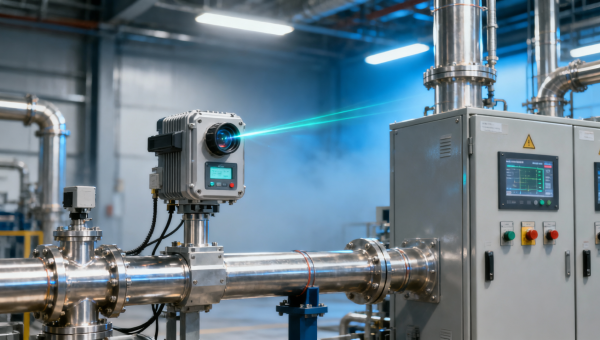

- Installation and sampling path: A detector mounted too far from the event point, or connected through a slow sample line, may detect the change too late for control or alarm action.

- Maintenance and calibration interval: A sensor that is precise on day 1 but drifts after 30–90 days without proper service may not support long-term operational reliability.

This broader view is especially important in modern automation and digital transformation projects. As plants demand tighter control loops, more continuous monitoring, and more integrated safety systems, the cost of slow or misleading detection grows. Instrumentation must support not only measurement, but decision-making in real time.

Which technical limits cause high accuracy sensors to miss critical changes?

A high accuracy sensor can miss meaningful process shifts for several technical reasons. Some are rooted in sensor physics. Others come from system integration. In practice, these limitations are most visible in oxygen analysis, gas detection, process control loops, and online monitoring where dynamic conditions matter more than static calibration results.

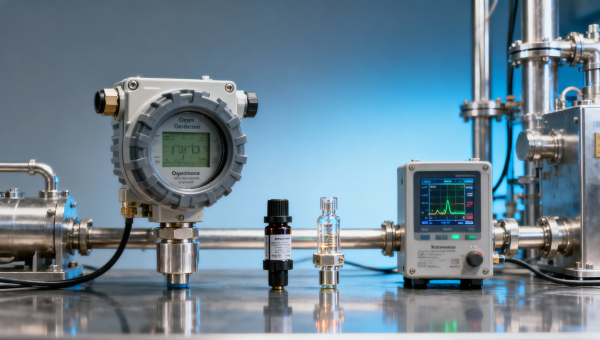

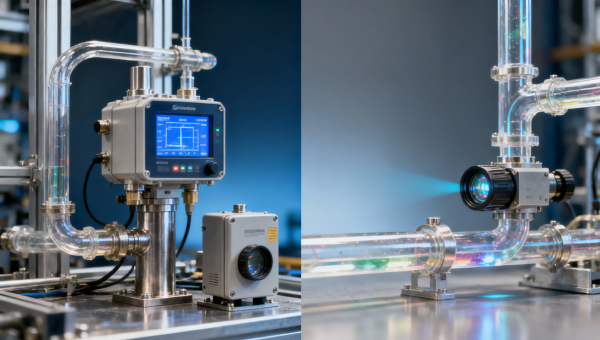

Different detector technologies behave differently. A paramagnetic detector may offer strong oxygen measurement performance in suitable gas analysis conditions, while an electrochemical detector may be preferred for portable use or lower-concentration monitoring depending on operating duration and service expectations. Infrared detector technology works well for selected gases but is influenced by optical path conditions and target gas compatibility. No single technology is universally superior.

The table below summarizes the most common reasons a high accuracy sensor may still fail to capture critical changes across industrial and analytical settings. It is intended as a practical screening tool during technical evaluation and procurement review.

For technical teams, this table shifts the conversation from isolated specification review to system performance review. That change is valuable because many failures originate between the sensing element and the process environment, not inside the sensor datasheet itself.

Dynamic measurement is different from static measurement

A static test asks whether the reading is close to a reference point. A dynamic test asks whether the sensor can follow a change as it happens. In many control and safety applications, dynamic performance is more critical. A detector that reaches the correct value after 45 seconds may still be ineffective if the process upset lasts only 10–15 seconds.
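
The 45-second example can be put into numbers with a minimal sketch. It assumes the sensor behaves as a simple first-order lag, for which the time constant is T90 / ln(10); real detectors may also have dead time or more complex dynamics, so treat the result as an approximation. The function names are illustrative only.

```python
import math


def time_constant_from_t90(t90_s: float) -> float:
    """Time constant of a first-order lag that reaches 90% of a step in t90_s."""
    return t90_s / math.log(10.0)


def fraction_of_upset_seen(t90_s: float, upset_duration_s: float) -> float:
    """Fraction of a rectangular upset's true amplitude the sensor indicates
    at the moment the upset ends (first-order lag assumption)."""
    tau = time_constant_from_t90(t90_s)
    return 1.0 - math.exp(-upset_duration_s / tau)


for duration in (10.0, 15.0, 45.0):
    seen = fraction_of_upset_seen(t90_s=45.0, upset_duration_s=duration)
    print(f"upset lasting {duration:4.0f} s -> sensor shows {seen:.0%} of the true change")
```

Under this assumption, a detector with a T90 of 45 seconds indicates only about 40% of a 10-second upset and about 54% of a 15-second upset before the process returns to normal, which may sit below an alarm threshold even though the static accuracy is excellent.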

This distinction is often overlooked during procurement because accuracy is easier to compare than dynamic behavior. Financial approvers may favor a lower-cost option with attractive stated precision, while operations and safety teams care more about time-to-detection, repeatability under load, and maintenance burden over 6–12 months of use.

Project managers should therefore ask suppliers for application-oriented validation: expected response profile, warm-up period, recommended calibration frequency, allowable ambient range, and known interference conditions. These points often reveal more decision value than one line in a specification sheet.

Common hidden causes in integrated systems

- Signal filtering in the transmitter or PLC slows recognition of a fast process event.

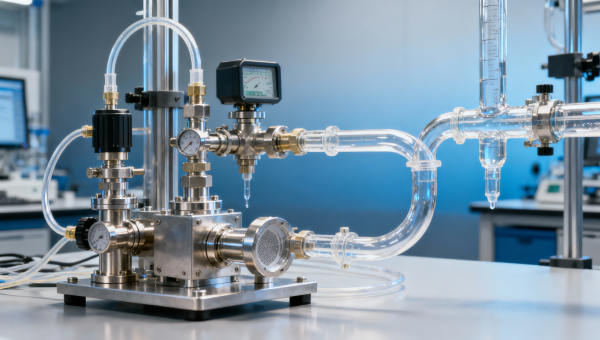

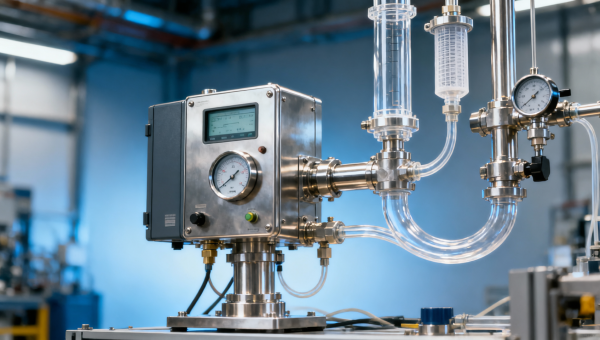

- Sampling pumps, tubing length, and dead volume delay gas arrival by several seconds or more.

- Improper range selection causes a small but important variation to disappear within a broad measuring span.

- Calibration gas or reference condition does not match the actual process matrix, reducing field relevance.

In other words, if a sensor misses a critical change, the root cause may be the entire measurement chain rather than the sensing element alone. That is why integrated instrumentation engineering matters in digital plants and intelligent monitoring systems.
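
One element of that chain, the sample line, is easy to estimate. The sketch below assumes ideal plug flow (no mixing or adsorption in the tubing) and uses hypothetical dimensions: a 4 mm inner diameter, a 10 m line, and a 1 L/min sample flow.

```python
import math


def line_volume_ml(inner_diameter_mm: float, length_m: float) -> float:
    """Internal volume of a round sample tube in millilitres (cm³)."""
    radius_cm = inner_diameter_mm / 10.0 / 2.0
    length_cm = length_m * 100.0
    return math.pi * radius_cm ** 2 * length_cm


def transport_delay_s(inner_diameter_mm: float, length_m: float,
                      flow_l_per_min: float, dead_volume_ml: float = 0.0) -> float:
    """Time for gas to travel from the sample point to the sensor,
    assuming plug flow through the tube plus any extra dead volume."""
    total_ml = line_volume_ml(inner_diameter_mm, length_m) + dead_volume_ml
    flow_ml_per_s = flow_l_per_min * 1000.0 / 60.0
    return total_ml / flow_ml_per_s


# Hypothetical installation values (assumptions, not from any datasheet)
delay = transport_delay_s(inner_diameter_mm=4.0, length_m=10.0, flow_l_per_min=1.0)
print(f"estimated transport delay: {delay:.1f} s")
```

With these assumed values the gas needs roughly 7.5 seconds just to reach the sensor, before the sensor's own response time and any transmitter or PLC filtering are added.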

How should different users evaluate sensor fit by application scenario?

Sensor selection should change with the application. A portable sensor for field safety checks, a fixed sensor for continuous plant monitoring, a laboratory sensor for analytical validation, and a control sensor used inside an automated process loop do not face the same priorities. Accuracy remains important, but its weight changes when response, durability, service interval, and integration needs are different.

For example, an operator performing spot checks may value fast start-up and clear alarms within a 1–5 minute inspection workflow. A quality manager in laboratory analysis may prioritize repeatability, calibration traceability, and environmental stability. A project owner commissioning an online monitoring point may focus on communication output, enclosure suitability, and maintenance access during 24/7 operation.

The table below helps compare typical sensor priorities by scenario. It can be used by distributors, procurement teams, and engineering reviewers to align specification review with actual operating needs rather than generic assumptions.

The key message is simple: the best sensor is not the one with the most impressive isolated number. It is the one whose performance profile matches the task, environment, and maintenance reality of the site.

A practical evaluation checklist for multi-role buying teams

In many instrumentation purchases, final approval involves at least 4 roles: user or operator, technical evaluator, purchaser, and approver. For larger projects, quality, safety, and project management also participate. A shared checklist reduces disagreement and prevents selection based on only one viewpoint.

- Define the event that must be detected: Is it a slow drift over hours, or a fast upset within seconds?

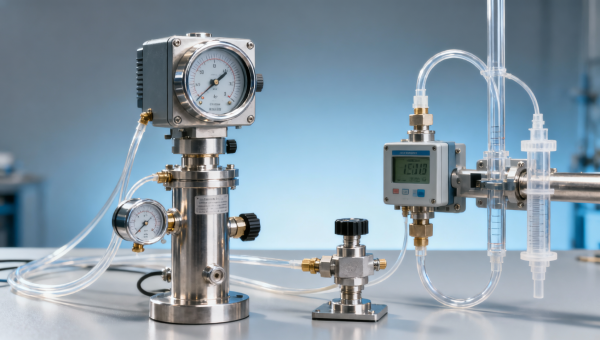

- Map the environment: List temperature range, humidity, vibration, contaminants, installation height, and access limitations.

- Confirm lifecycle requirements: Decide whether calibration is expected weekly, monthly, quarterly, or at another service interval.

- Review integration needs: Check analog output, digital protocol, alarm interface, and enclosure suitability before purchase order release.

- Validate total operational cost: Include spare parts, calibration gases, labor hours, and downtime risk, not just unit price.
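
Parts of this checklist can be turned into a simple screening routine so that every role reviews the same findings. The sketch below is only one possible way to do it; the field names, thresholds, and the two candidate sensors are hypothetical.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class SiteRequirement:
    max_response_s: float                  # slowest acceptable T90 for the event
    ambient_min_c: float                   # lowest expected ambient temperature
    ambient_max_c: float                   # highest expected ambient temperature
    supported_service_interval_days: int   # how often the site can service the point


@dataclass
class SensorCandidate:
    name: str
    t90_s: float
    rated_min_c: float
    rated_max_c: float
    calibration_interval_days: int


def screen(req: SiteRequirement, cand: SensorCandidate) -> List[str]:
    """Return a list of mismatches between site needs and a candidate sensor."""
    findings = []
    if cand.t90_s > req.max_response_s:
        findings.append("response too slow for the event")
    if cand.rated_min_c > req.ambient_min_c or cand.rated_max_c < req.ambient_max_c:
        findings.append("ambient temperature range not covered")
    if cand.calibration_interval_days < req.supported_service_interval_days:
        findings.append("needs calibration more often than the site can support")
    return findings


# Hypothetical site and candidates (assumptions for illustration)
site = SiteRequirement(max_response_s=10, ambient_min_c=-10, ambient_max_c=45,
                       supported_service_interval_days=90)
option_a = SensorCandidate("Option A", t90_s=45, rated_min_c=0, rated_max_c=40,
                           calibration_interval_days=30)
option_b = SensorCandidate("Option B", t90_s=8, rated_min_c=-20, rated_max_c=50,
                           calibration_interval_days=180)

for option in (option_a, option_b):
    print(option.name, screen(site, option) or "no mismatches found")
```

A screen like this does not replace engineering judgment or cover integration and cost items, but it makes the reasons for rejecting an option explicit.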

This process is especially useful for distributors and resellers who must balance technical suitability with stock strategy, delivery timing, and after-sales service expectations across different customer sectors.

What should procurement and finance teams compare before approving a sensor?

Procurement decisions in the instrumentation sector often become difficult when technical specifications are dense and project timelines are short. Buyers may receive several offers that all claim high precision, but differ in response behavior, calibration needs, operating life, and service support. Finance teams then see price gaps without a clear explanation of value. A structured comparison reduces both technical and commercial risk.

The most useful approach is to compare sensor solutions across 3 layers: acquisition cost, operating cost over 12–24 months, and risk cost if a critical change is missed. This last layer is often ignored, yet in safety, product quality, or process stability applications, it can be the most expensive layer of all.
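
The three layers can be combined in a very small calculation. The sketch below treats risk cost as an assumed probability of missing a critical event over the period multiplied by the assumed cost of that event, which is only one simple way to express it. All names and figures are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class SensorOption:
    name: str
    acquisition_cost: float          # purchase price
    operating_cost_per_month: float  # calibration gas, spares, labor
    miss_probability: float          # assumed chance of missing a critical event in the period
    cost_of_missed_event: float      # assumed cost if that event is missed

    def total_cost(self, months: int) -> float:
        """Acquisition + operating + expected risk cost over the review period."""
        operating = self.operating_cost_per_month * months
        risk = self.miss_probability * self.cost_of_missed_event
        return self.acquisition_cost + operating + risk


# Hypothetical offers (assumptions for illustration only)
lower_price = SensorOption("Lower-price option", 1000.0, 50.0, 0.10, 20000.0)
higher_price = SensorOption("Higher-price option", 1800.0, 30.0, 0.02, 20000.0)

for option in (lower_price, higher_price):
    print(f"{option.name}: {option.total_cost(months=24):.0f} over 24 months")
```

In this made-up case the option with the higher purchase price has the lower 24-month total, because its operating and risk layers are smaller. The conclusion depends entirely on the inputs, so the probability and event cost should be agreed with operations and safety teams rather than guessed by one function.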

Before approval, teams should request not only price and nominal accuracy, but also delivery lead time, recommended spare parts, calibration frequency, environmental limitations, communication options, and application notes. In many projects, a 7–15 day difference in delivery lead time or an additional monthly maintenance cycle can influence final project economics more than the initial purchase price.

Core comparison points for commercial and technical approval

- Detection reliability: Can the sensor identify the event at the required speed and concentration or range threshold?

- Service burden: How many maintenance actions are expected per quarter, and what consumables are needed?

- Integration cost: Will extra converters, mounting hardware, sampling accessories, or software configuration be required?

- Downtime impact: If recalibration or replacement is needed, how long will the point be unavailable?

A simple total-cost decision model

Teams do not need a complex financial model to make a better decision. Even a simple 4-part review can reveal hidden cost drivers and support cross-functional approval.

This comparison method helps finance teams understand why a moderate price premium may be justified when the sensor reduces maintenance frequency, improves event capture, or shortens commissioning by 1–2 weeks.

For procurement professionals, the goal is not to buy the highest specification on paper. It is to buy the lowest-risk solution that fits budget, installation schedule, and operating model. That is a stronger purchasing outcome.

What are the most common misconceptions, and how can teams avoid them?

Many sensor projects fail not because the technology is weak, but because common assumptions go unchallenged. These misconceptions appear in both new installations and replacement projects, especially when users switch from one detection principle to another without reviewing process differences.

The first misconception is that higher accuracy automatically means better safety or better control. In reality, if the event is transient, the sensor must be fast enough, correctly positioned, and resistant to interference. The second misconception is that one detector type can cover all scenarios. Portable and fixed applications, for instance, often require different design priorities even when they measure the same gas.

A third misconception is that calibration solves everything. Calibration is essential, but it does not remove installation delays, cross-sensitivity, clogged filters, long sample paths, or poor process matching. Teams should treat calibration as one part of performance assurance, not the entire solution.

FAQ for buyers, engineers, and safety teams

How do I know whether response time matters more than accuracy?

Ask how quickly the process can change and how quickly a decision must be made. If a leak, purge event, combustion upset, or oxygen drop can develop within seconds, response time may be more important than marginal improvements in static accuracy. In contrast, slow laboratory equilibrium measurements may place greater weight on repeatability and calibration stability.

Are fixed sensors always better than portable sensors?

No. Fixed sensors are better for continuous coverage and integration into alarms or control systems, especially in 24/7 operations. Portable sensors are better for flexible spot checks, entry testing, troubleshooting, and mobile safety routines. Many facilities need both, not one or the other.

What should technical evaluators request from suppliers during selection?

Request application-relevant information such as response time, operating temperature range, maintenance interval, known interference gases, installation orientation guidance, calibration method, and output options. If possible, ask for a scenario-based review covering the expected concentration range, environmental conditions, and duty cycle over a normal month or quarter.

How long is a typical delivery and implementation cycle?

This depends on configuration complexity, documentation needs, and whether sampling assemblies or communication accessories are included. Standard items may move faster, while integrated packages often require additional time for accessory confirmation, signal planning, and commissioning support. Teams should confirm delivery, installation preparation, and site acceptance as separate milestones.

A disciplined review of these questions can prevent expensive rework. In practice, 3 early checks usually catch most issues: confirm application conditions, verify dynamic performance, and review service requirements over the planned operating period.

Why work with a partner that understands instrumentation beyond the datasheet?

In instrumentation, the best result comes from aligning sensor technology, process conditions, installation method, and maintenance plan from the start. That requires more than quoting a model number. It requires understanding measurement objectives across industrial manufacturing, energy and power, environmental monitoring, medical testing, laboratory analysis, construction engineering, and automation control.

A capable instrumentation partner helps you compare paramagnetic, electrochemical, infrared, and oxygen detector options, in fixed, portable, laboratory, control, and monitoring configurations, against actual operating conditions. That support is valuable when you need to balance 5 key factors at once: performance, integration, compliance expectations, delivery timing, and lifecycle cost.

If you are evaluating a new project or replacing an underperforming sensor, you can contact us for practical support on parameter confirmation, product selection, detector technology comparison, operating condition review, sample path planning, communication interface matching, delivery lead time, sample availability, and quotation discussion. We can also help structure a 3-step review covering application analysis, configuration recommendation, and implementation planning.

This approach helps information researchers gather useful technical input, supports operators with workable field solutions, gives procurement clearer comparison logic, and gives project leaders a smoother path from specification to deployment. When critical changes matter, the right sensor decision starts with the right evaluation framework.
