Common Calibration Mistakes That Affect C9H18O Concentration Analyzer Performance

Accurate calibration plays a decisive role in ensuring the reliable operation of a C9H18O concentration analyzer, yet common mistakes during this process can lead to significant measurement deviations and system inefficiencies. Whether you’re managing a C10H20O concentration analyzer, C8H16O concentration analyzer, or other related instruments such as C5H10O and CH3OH concentration analyzers, understanding these recurring calibration pitfalls is essential. This article explores how to identify, prevent, and correct such errors to improve analytical accuracy and prolong equipment lifespan.

Understanding Calibration Fundamentals and Industry Context

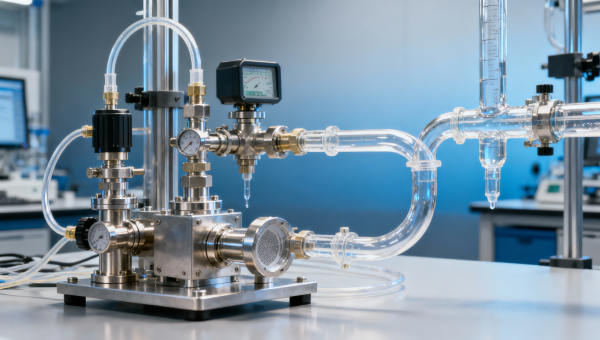

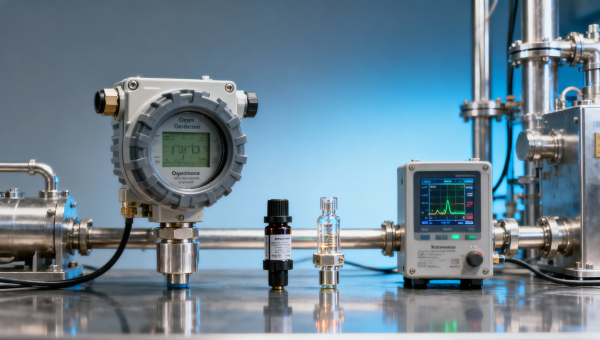

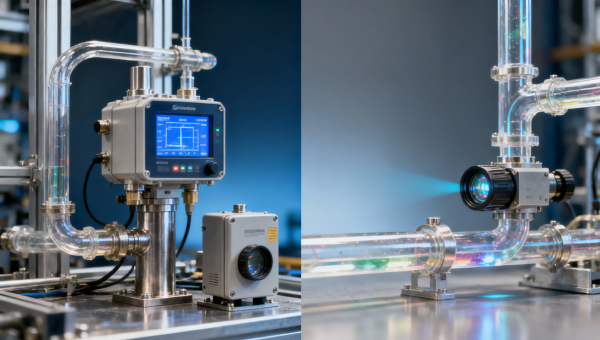

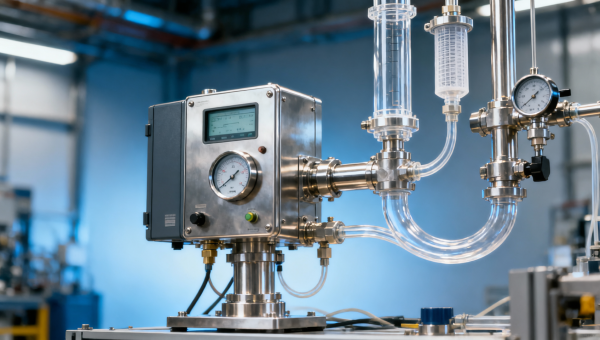

In the instrumentation industry, calibration ensures that an analyzer’s output aligns with verified standards under controlled conditions. For C9H18O concentration analyzers, the accuracy margin is often specified as ±1.0% of the target value over a calibration range of 0.1 ppm to 100 ppm, though the exact figure depends on the range in use. Recalibration every 3–6 months is typical across industrial and laboratory setups and supports long-term data consistency.

The calibration process generally involves reference gas verification, sensor drift compensation, and environmental condition monitoring. Temperature (10°C–35°C) and humidity (40%–70% RH) can significantly affect analyzer stability if not properly controlled. Operators must be aware that even a 2°C deviation can cause noticeable measurement bias when working with volatile organic compounds like C9H18O.
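As an illustration, the environmental limits above could be checked before a calibration run with a small helper like the one below. This is a minimal sketch, not a vendor routine: the `EnvReading` type, the `environment_issues` function, and the idea of comparing against a reference temperature are assumptions made for the example. Only the numeric limits come from the text.

```python
from dataclasses import dataclass

# Limits quoted in the paragraph above.
TEMP_RANGE_C = (10.0, 35.0)
HUMIDITY_RANGE_RH = (40.0, 70.0)
MAX_TEMP_DEVIATION_C = 2.0  # deviation the text flags as causing noticeable bias


@dataclass
class EnvReading:
    """Hypothetical container for one ambient measurement."""
    temperature_c: float
    humidity_rh: float


def environment_issues(reading: EnvReading, reference_temp_c: float) -> list[str]:
    """Return a list of problems; an empty list means conditions look acceptable."""
    issues = []
    if not TEMP_RANGE_C[0] <= reading.temperature_c <= TEMP_RANGE_C[1]:
        issues.append("temperature outside 10-35 C")
    if not HUMIDITY_RANGE_RH[0] <= reading.humidity_rh <= HUMIDITY_RANGE_RH[1]:
        issues.append("humidity outside 40-70 % RH")
    # Assumption: the 2 C figure is measured against the temperature at which
    # the previous calibration was performed.
    if abs(reading.temperature_c - reference_temp_c) >= MAX_TEMP_DEVIATION_C:
        issues.append("temperature deviates 2 C or more from reference")
    return issues
```

Calling `environment_issues(EnvReading(25.0, 80.0), 24.0)` would report only the humidity problem, since the temperature is in range and within 2°C of the reference.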

The instrumentation sector provides critical measurement and monitoring tools to industries such as energy, manufacturing, environmental safety, and medical analysis. In all these areas, miscalibration not only impacts accuracy but may lead to regulatory compliance issues under standards like ISO/IEC 17025 and ASTM D5466, which emphasize repeatability and traceability of calibration results.

From a business perspective, the cost of poor calibration can be substantial. A 5% measurement deviation over three months may result in material wastage or product rejections exceeding thousands of dollars in high-throughput production environments. Therefore, understanding the fundamentals is critical for both technical operators and financial planners.

Top Calibration Mistakes Affecting C9H18O Analyzer Accuracy

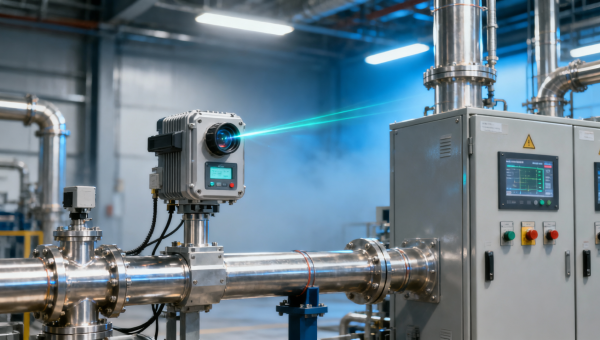

Operational errors during calibration account for more than 60% of analyzer performance issues reported in field inspections. Identifying these key mistakes improves accuracy and prevents premature equipment degradation. The failures typically arise in three areas: environmental control, reference material management, and calibration interval design.

Five typical calibration errors include:

- Using expired or unverified calibration gases, often leading to ±2 ppm drift in mid-range concentration readings.

- Ignoring ambient temperature stabilization; sudden 5°C–10°C shifts distort detection sensitivity.

- Skipping zero-point calibration or relying on single-point verification, violating multi-point calibration standards.

- Failing to log calibration history, reducing traceability and limiting internal audit consistency.

- Overlooking sensor aging effects when exceeding 10,000 hours of operation without recalibration scheduling.
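To make the third point concrete, a multi-point calibration that includes the zero point can be sketched as an ordinary least-squares line through the reference readings. The function names and the minimum of three points are assumptions for this example. Real analyzers may use a different curve model or a vendor-specific procedure.

```python
def fit_calibration(reference_ppm, raw_signal):
    """Fit reference = slope * raw + intercept by ordinary least squares.

    Assumption: at least three matched points (zero gas plus two span gases)
    are required, so a single-point check is rejected.
    """
    n = len(reference_ppm)
    if n < 3 or n != len(raw_signal):
        raise ValueError("need at least three matched points, including zero gas")
    mean_x = sum(raw_signal) / n
    mean_y = sum(reference_ppm) / n
    sxx = sum((x - mean_x) ** 2 for x in raw_signal)
    if sxx == 0:
        raise ValueError("raw signals are all identical; cannot fit a line")
    sxy = sum((x - mean_x) * (y - mean_y)
              for x, y in zip(raw_signal, reference_ppm))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return slope, intercept


def max_residual_ppm(reference_ppm, raw_signal, slope, intercept):
    """Largest absolute gap between the fitted line and the reference values."""
    return max(abs(slope * x + intercept - y)
               for x, y in zip(raw_signal, reference_ppm))
```

A large value from `max_residual_ppm` suggests the response is not linear over the chosen range or that one of the reference gases is off, which is exactly what a single-point verification cannot reveal.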

The table below summarizes typical mistakes and their consequences across different operation cycles.

By adopting a structured calibration plan that follows these parameters, calibration stability can be improved by at least 25% compared to irregular manual setups. The cumulative effect significantly boosts analyzer performance and prolongs service intervals.

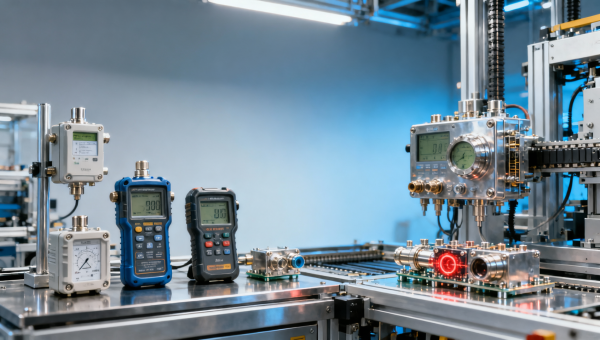

Calibration Environment and Interference Management

Environmental conditions directly influence calibration reliability. In industrial labs, parameters like temperature, humidity, and surrounding gas contamination can generate signal noise up to 0.3 ppm on sensitive analyzers. To minimize errors, maintain environmental baselines within the tolerance band specified by manufacturer standards.

Calibration rooms should be isolated from vibration sources exceeding 0.5 g acceleration, and air exchange rates maintained between 8–12 cycles per hour to ensure stable gas concentration equilibrium. It is also advisable to use clean nitrogen purging for instruments handling volatile compounds like C9H18O, particularly when cross-sensitivity analysis is required.

Operators often overlook the influence of electrical interference. Ground loop currents under 2 mA can still create up to 0.2% output deviation. Shielded cables and stable grounding resistance below 5 Ω are recommended for high-precision laboratory setups.

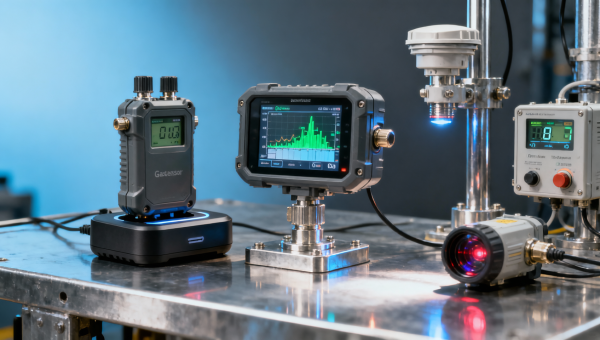

Continuous monitoring of environmental parameters also supports predictive maintenance planning. Through smart IoT integration, calibration intervals can be data-driven: extended up to 9 months in stable conditions or shortened to 2 months in variable production environments.
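One way such a data-driven interval might be derived is from the spread of logged temperatures. The sketch below is illustrative only: the function name, the standard-deviation thresholds (`stable_sd_c`, `variable_sd_c`), and the 3-month fallback are hypothetical choices. Only the 9-month and 2-month endpoints come from the text.

```python
from statistics import pstdev


def suggest_interval_months(temps_c, stable_sd_c=0.5, variable_sd_c=2.0):
    """Suggest a calibration interval from logged ambient temperatures.

    The thresholds are placeholder assumptions and should be tuned to the
    site and the manufacturer's guidance.
    """
    if len(temps_c) < 2:
        raise ValueError("need at least two temperature readings")
    spread = pstdev(temps_c)  # population standard deviation of the log
    if spread <= stable_sd_c:
        return 9  # stable conditions: longest interval mentioned in the text
    if spread >= variable_sd_c:
        return 2  # variable conditions: shortest interval mentioned in the text
    return 3      # assumption: conservative end of the typical 3-6 month range
```

In practice, humidity and sensor drift history would likely feed into the same decision. Temperature alone is used here to keep the example short.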

Procurement and Maintenance Guide for Analyzer Calibration Systems

For decision-makers and technical buyers, selecting the right calibration system depends on both operational requirements and compliance targets. Industrial projects typically involve 3 key evaluation stages: technical specification review, cost-benefit comparison, and lifecycle service validation. Each stage influences total calibration sustainability over 2–5 years of equipment usage.

Below is a simplified comparison illustrating core procurement considerations for calibration-related instruments in industrial environments.

When planning procurement, enterprises should also examine after-sales calibration support availability, calibration traceability certificates, and environmental compliance documentation. A complete lifecycle approach can reduce calibration-related downtime by 15–20% while ensuring analyzer performance consistency across operating cycles.

Frequently Asked Questions About Calibration Practices

How often should a C9H18O analyzer be recalibrated?

Under stable environmental and operational conditions, recalibration is recommended every 3–6 months. However, in high-humidity or variable temperature environments, monthly calibration cycles are advisable for maintaining accuracy within ±1 ppm.

What standards should calibration follow?

ISO/IEC 17025 calibration laboratory protocols and ASTM D5466 analytical methods are widely recognized. Following these ensures traceability and consistency between international labs, supporting quality audits and regulatory approvals.

Can digital calibration automation reduce human error?

Yes. Automated calibration modules using PID-based feedback can lower deviation from manual steps by 20–30%. Automation also improves reproducibility across multiple analyzers and reduces technician workload during repetitive calibration tasks.

What are key signs that recalibration is urgently required?

Unstable baseline readings, signal drift exceeding ±0.5 ppm within 24 hours, and unexplained alarm triggering are typical indicators. In such cases, the instrument should be recalibrated or inspected immediately before process continuation.
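The drift criterion can be checked automatically against logged baseline readings. The following is a minimal sketch that interprets "drift exceeding ±0.5 ppm within 24 hours" as any two readings taken at most 24 hours apart differing by more than 0.5 ppm. That interpretation, and the `drift_exceeded` name, are assumptions for the example.

```python
from datetime import datetime, timedelta

DRIFT_LIMIT_PPM = 0.5          # limit quoted in the answer above
WINDOW = timedelta(hours=24)   # time window quoted in the answer above


def drift_exceeded(samples):
    """samples: list of (timestamp, baseline_ppm) tuples sorted by timestamp.

    Returns True if any pair of readings no more than 24 hours apart
    differs by more than 0.5 ppm.
    """
    for i, (t_i, v_i) in enumerate(samples):
        for t_j, v_j in samples[i + 1:]:
            if t_j - t_i > WINDOW:
                break  # later samples are even further away in time
            if abs(v_j - v_i) > DRIFT_LIMIT_PPM:
                return True
    return False
```

For example, a baseline that moves from 1.0 ppm to 1.6 ppm over 12 hours would be flagged, while the same change spread over 48 hours would not trip this particular check.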

Why Choose Us for Calibration Consulting and Support

In the complex landscape of instrumentation and analysis, our calibration consulting services deliver structured, data-driven strategies for maintaining C9H18O analyzer precision. We assist enterprises in defining calibration intervals, selecting reference materials, and implementing ISO-compatible record systems. Our experience spans multiple industrial sectors, from petrochemical labs to environmental monitoring stations.

Clients typically engage us for the following purposes:

- Customized calibration plans aligned to production schedules (3–12 month intervals)

- Selection of certified reference materials for C9H18O, C10H20O, and related analyzers

- Consultation on reducing calibration costs while maintaining compliance

- Comparative evaluation between manual and automated calibration systems

- Technical training for operators to reduce recurring calibration errors

If you’re evaluating analyzer procurement or seeking advanced calibration management solutions, contact our technical team to discuss parameter confirmation, custom calibration kits, expected delivery schedules (standard 2–4 weeks), certification support, or cost optimization plans. We emphasize measurable outcomes—higher data reliability, reduced maintenance cycles, and sustainable operational performance across industrial instrumentation applications.
